Introduction

You learned something an hour ago. Right now, about half of it is already gone. Not misplaced. Not buried under other thoughts. Gone. Dissolved back into the electrochemical noise of a hundred billion neurons firing without purpose. By tomorrow, roughly seventy percent of what you studied today will have vanished. By next week, ninety percent. This is not a metaphor. It is a measurement, first made in 1885 by a German psychologist sitting alone in his apartment, memorizing nonsense [1].

The forgetting curve explained in a single sentence: memory decays fastest in the first hours after learning, then slows into a long, shallow decline. But that sentence hides a century of arguments, three Nobel-adjacent discoveries, and a molecular war happening right now inside your hippocampus between proteins that want to remember and enzymes that want to forget.

This is the story of that curve. It begins with a man who turned himself into a laboratory rat. It travels through the mathematics of decay, the neuroscience of erasure, and the surprisingly recent discovery that forgetting is not a failure of the brain but one of its most important functions. Along the way, it will change how you think about studying, sleeping, and what it actually means to know something [2].

The Man Who Memorized Nonsense

Hermann Ebbinghaus was born in Barmen, Prussia, on January 24, 1850. He studied philosophy at Bonn, served briefly in the Franco-Prussian War, and spent years wandering through England and France before stumbling upon a used copy of Gustav Theodor Fechner's Elemente der Psychophysik at a secondhand bookstall in Paris. That book changed the course of psychology. Fechner had shown that physical sensations could be measured mathematically. Ebbinghaus asked a question nobody had asked before: could memory be measured the same way [1]?

The problem was obvious. If you test someone's memory using real words, their prior knowledge contaminates the result. The word "mother" is easier to remember than "arbitrage" because it carries emotional weight. Ebbinghaus needed material with zero meaning. So he invented it. He generated roughly 2,300 consonant-vowel-consonant nonsense syllables: DAX, BUP, ZOL, WID. Three letters. No meaning. Pure information.

Then he did something remarkable. He used himself as the only test subject. Between 1880 and 1885, sitting alone in his Berlin apartment, Ebbinghaus memorized lists of thirteen nonsense syllables. He read each list aloud at a fixed pace, again and again, until he could recite it perfectly twice in a row. Then he waited. Twenty minutes. One hour. Nine hours. A day. Two days. Six days. Thirty-one days. After each interval, he sat down and relearned the same list, counting how many repetitions it took to reach the same level of perfection [2].

The key measure was not what he remembered. It was how much effort he saved. If a list originally took ten repetitions to learn, and relearning it a day later took only three, the "savings" were seventy percent. This savings method was Ebbinghaus's masterstroke. It could detect traces of memory even when conscious recall had completely failed. A person might not remember a single syllable from a list. But if relearning that list takes fewer repetitions than learning a completely new one, something has been retained. Something invisible.
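The savings arithmetic is simple enough to write down. The sketch below is a minimal illustration and assumes effort is counted in repetitions, as in the example above; the function name `savings_percent` is made up for this article.

```python
def savings_percent(original_reps: int, relearning_reps: int) -> float:
    """Percentage of the original learning effort saved when relearning."""
    if original_reps <= 0:
        raise ValueError("original_reps must be positive")
    return 100.0 * (original_reps - relearning_reps) / original_reps


# The worked example from the text: ten repetitions at first, three a day later.
print(savings_percent(10, 3))
```

A result of zero means relearning took as long as learning from scratch, which is the only case the savings method reads as complete forgetting.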

In 1885, he published his results in a slim volume titled Über das Gedächtnis, later translated as Memory: A Contribution to Experimental Psychology. The data, plotted on a graph, drew a curve that would become one of the most famous shapes in all of psychology. Savings dropped steeply in the first hour, then more gradually over days, then flattened into a long plateau. The shape was unmistakable. Memory did not erode at a steady rate. It crashed, then trickled.

What the Curve Actually Looks Like

Ebbinghaus fitted his savings data with a logarithmic function. In modern notation, the forgetting curve is often simplified to $R = e^{-t/S}$, where R is retention, t is time, and S represents the relative strength of the memory. But this clean equation hides a messy scientific debate that took a century to resolve [3].

Is forgetting exponential? Or does it follow a power law? The difference matters. An exponential function drops fast and never truly reaches zero, and it always loses the same fraction of whatever remains in each unit of time. A power function also drops fast at first, but its proportional rate of loss keeps shrinking as time passes, meaning memories at the tail end survive longer than an exponential model would predict.
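To see how differently the two shapes behave in the tail, it helps to evaluate both at a few delays. This is only a sketch: the parameter values are hypothetical, chosen so that both curves pass through 0.5 at t = 1, and the time unit is arbitrary.

```python
import math


def exponential_retention(t: float, s: float) -> float:
    """R = exp(-t / S): the same fraction of what remains is lost per unit time."""
    return math.exp(-t / s)


def power_retention(t: float, a: float, b: float) -> float:
    """R = a * t**(-b) for t > 0: the proportional loss rate shrinks as b / t."""
    return a * t ** (-b)


# Hypothetical parameters, picked only so both curves equal 0.5 at t = 1.
S = 1 / math.log(2)
A, B = 0.5, 0.5

for t in (1, 2, 5, 10, 30):
    exp_r = exponential_retention(t, S)
    pow_r = power_retention(t, A, B)
    print(f"t={t:>2}  exponential={exp_r:.4f}  power={pow_r:.4f}")
```

With these values the exponential curve is essentially at zero by t = 30, while the power curve still holds a visible fraction. That gap in the tail is the difference the curve-fitting studies were trying to detect.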

In 1991, John Wixted and Ebbe Ebbesen at the University of California, San Diego, examined three different datasets: human word recall, human face recognition, and pigeon delayed matching-to-sample. They tested exponential, power, hyperbolic, logarithmic and other functions against the data. The result was decisive. The power function fit best [3]. Forgetting, at least when averaged across many items and many people, follows a power law: $R = a \cdot t^{-b}$.

Five years later, David Rubin and Amy Wenzel at Duke University pushed the analysis further. They compared 105 different mathematical functions against over 200 published retention datasets [4]. The conclusion was the same: power and logarithmic functions fit the data best. Pure exponential decay was rejected as a general law of forgetting.

But here is where the story gets interesting. In 1997, R. B. Anderson and Richard Tweney showed that if individual items decay exponentially but at different rates, the average across all items looks like a power function [5]. In other words, the power law might be a statistical ghost created by averaging. Each memory might decay exponentially on its own, but when you combine fast-decaying and slow-decaying memories in a single analysis, the aggregate shape bends into a power curve.
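The averaging argument can be checked with a small simulation. This is a sketch of the idea, not a reconstruction of Anderson and Tweney's analysis. It assumes each item decays exponentially with its own rate, and it draws those rates from a gamma distribution, a choice made here because the average of such a mixture is known to be power-like. The sample size, time points, and distribution parameters are all illustrative.

```python
import math
import random


def linear_fit_sse(xs, ys):
    """Least-squares line through (xs, ys); returns the sum of squared residuals."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))


rng = random.Random(0)  # fixed seed so the run is repeatable

# Assumption: item i decays as exp(-rate_i * t), rates ~ Gamma(shape=0.5, scale=1).
rates = [rng.gammavariate(0.5, 1.0) for _ in range(5000)]

times = [1, 2, 5, 10, 20, 50, 100]
mean_retention = [sum(math.exp(-r * t) for r in rates) / len(rates) for t in times]
log_retention = [math.log(m) for m in mean_retention]

# Exponential model: ln R is linear in t. Power model: ln R is linear in ln t.
sse_exponential = linear_fit_sse(times, log_retention)
sse_power = linear_fit_sse([math.log(t) for t in times], log_retention)

print(f"SSE of exponential fit: {sse_exponential:.3f}")
print(f"SSE of power fit:       {sse_power:.3f}")
```

Every simulated item here is strictly exponential, yet the averaged curve is fit far better by the power model. The comparison uses simple straight-line fits on log-transformed data, which is cruder than the fitting in the published studies but enough to show the effect.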

This averaging-artifact debate continued for years. Wixted and Ebbesen fired back, showing power fits even with geometric averaging and at the single-subject level [6]. Jaap Murre and Antonio Chessa at the University of Amsterdam developed mathematical models reconciling both views in 2011 [7]. The resolution, as is often the case in science, turned out to be "both are partially right." Individual items may decay exponentially. The population curve is a power law. And the shape of the curve you see depends on how you look at it.

130 Years Later, Someone Finally Checked

For 130 years, one of the most cited findings in psychology rested on data from a single person memorizing nonsense. Nobody had properly replicated it. That changed in 2015.

Jaap Murre and Joeri Dros at the University of Amsterdam designed a modern replication [2]. Dros served as the subject, spending over seventy hours memorizing lists of Dutch-conforming CVC nonsense syllables. He relearned them at intervals of twenty minutes, one hour, nine hours, one day, two days, and thirty-one days. The methodology closely followed Ebbinghaus's original design, using the savings method and controlled pacing.

The results were striking. The overall shape of the curve closely matched Ebbinghaus's 1885 data. Forgetting was steep in the first hour, decelerated over days, and flattened by the end of the month. But Murre and Dros found something Ebbinghaus had not reported: a small upward jump in savings at the twenty-four-hour mark. After one day, retention was slightly better than the curve predicted. The most likely explanation? Sleep. The subject had slept between the nine-hour and twenty-four-hour tests, and that sleep had consolidated some of the fragile memory traces.

This finding connected the forgetting curve directly to modern sleep and memory consolidation research, confirming that the shape of the curve is not fixed. It bends. Sleep bends it upward. And as we will see, other interventions can bend it even more.

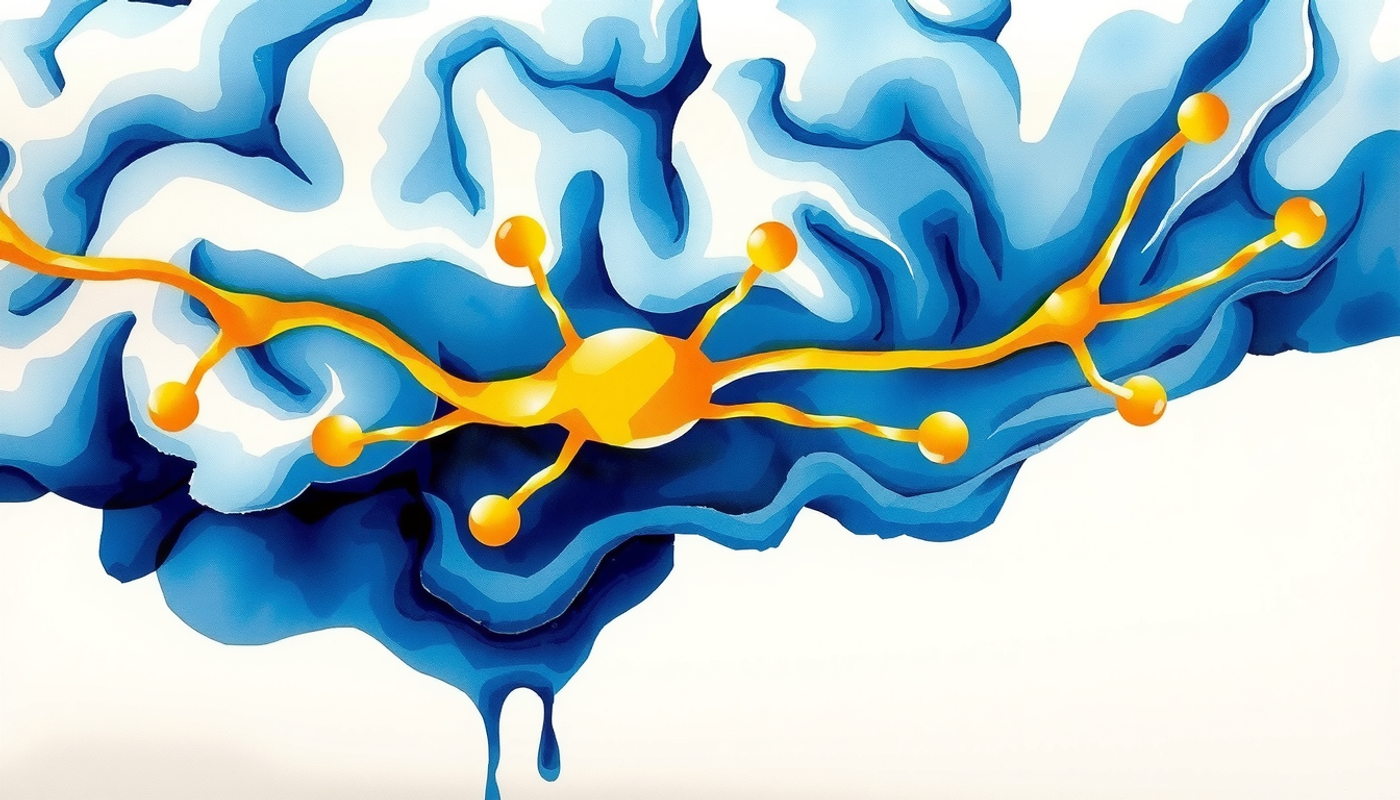

The Brain That Actively Forgets

For most of the twentieth century, forgetting was treated as passive. Memories decayed, like footprints in sand. The wind of time blew them away. But starting around 2010, neuroscience began to uncover something far more interesting. Your brain does not just let memories fade. It actively destroys them.

The first clue came from the synapse. When you learn something, the connections between neurons strengthen through a process called long-term potentiation, or LTP. Discovered by Tim Bliss and Terje Lømo in 1973 while recording from rabbit hippocampus, LTP is the cellular mechanism most neuroscientists consider the foundation of memory [8]. High-frequency stimulation of a neural pathway makes that pathway more responsive for hours, days, or longer. The synapse remembers.

But synapses also forget. And they forget through a specific molecular process. Oliver Hardt, Karim Nader, and Yue-Qiao Wang at McGill University proposed in 2014 that the decay of LTP depends on the regulated removal of AMPA receptors from the synapse [9]. AMPA receptors are the proteins that sit in the postsynaptic membrane and respond to the neurotransmitter glutamate. When more AMPA receptors are inserted into the membrane, the synapse strengthens. LTP. When they are pulled back inside the cell through a process called endocytosis, the synapse weakens. The memory fades.

In 2016, Szu-Han Wang and colleagues tested this directly [10]. They injected a peptide called GluA2$_{3Y}$ into the dorsal hippocampus of rats. This peptide blocks the endocytosis of a specific type of AMPA receptor containing the GluA2 subunit. The result? The rats stopped forgetting. Object-location memories and contextual fear memories that would normally fade over days remained intact. Blocking the cellular machinery of forgetting preserved the memory.

Think about what this means. Forgetting is not entropy. It is not randomness. It is an active biological process, driven by specific proteins, targeting specific synapses. The brain chooses to forget.

But AMPA receptor trafficking is only one mechanism. The brain has an entire arsenal for erasing memories. And each weapon works differently.

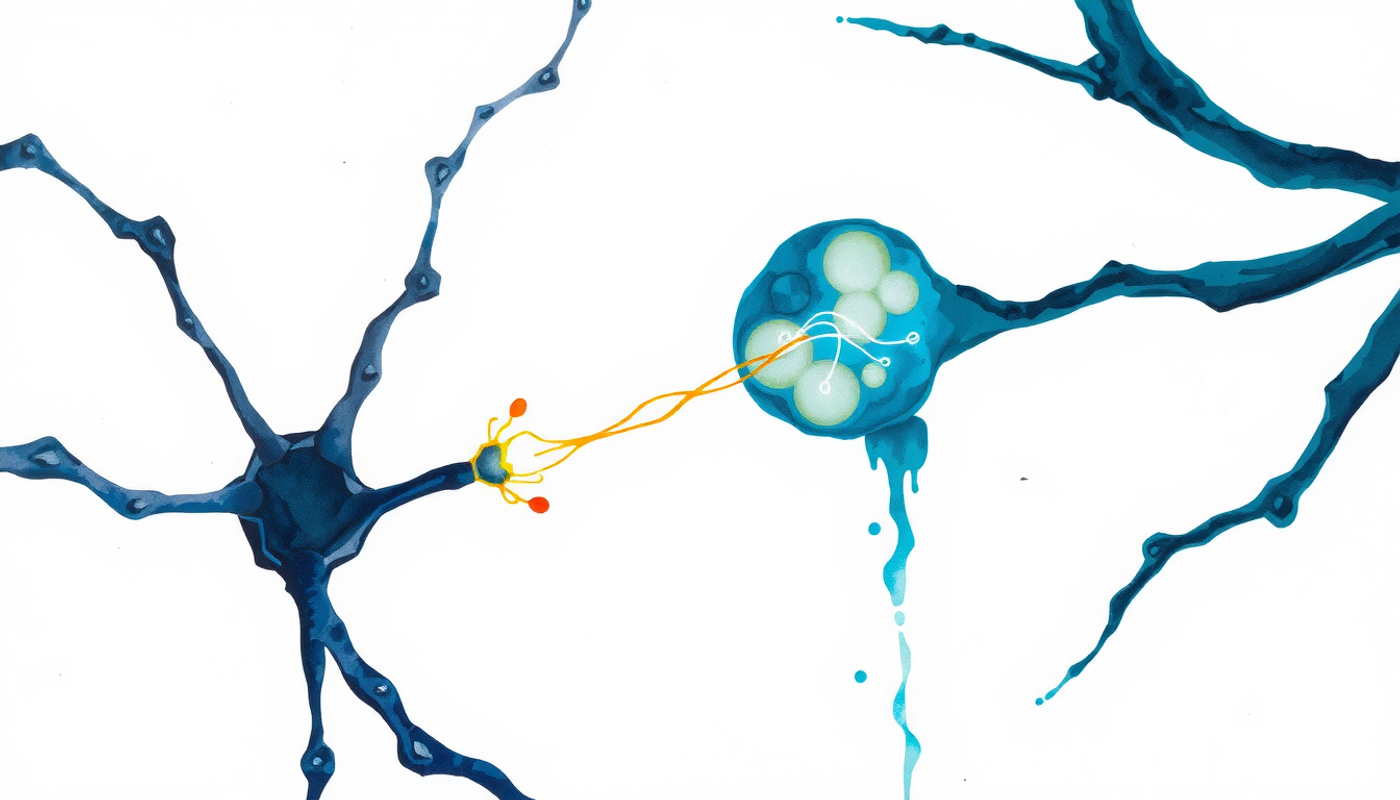

Dopamine: The Molecule That Erases

In 2012, a team led by Jacob Berry and Ronald Davis at the Scripps Research Institute in Florida made a surprising discovery using fruit flies. Dopamine, the neurotransmitter famous for pleasure, reward, and motivation, was also responsible for actively erasing memories [11].

Working with Drosophila melanogaster, Berry and colleagues showed that a specific set of dopamine neurons projecting to the mushroom body (the fly's memory center) continuously weakened stored olfactory memories. The erasure was not random. It operated through a specific receptor called DAMB and a downstream signaling cascade involving Rac1 GTPase and cofilin, proteins that remodel the actin cytoskeleton of synapses [12].

Ronald Davis and Yi Zhong at Tsinghua University later synthesized these findings into a concept they called intrinsic forgetting: a constitutive, ongoing dopaminergic drive that biases memories toward erasure unless they are actively stabilized through reinforcement or consolidation [13]. Forgetting, in this framework, is the default. Remembering is the exception. The brain has to actively fight to keep a memory alive.

In 2021, Berry and colleagues published an even more remarkable finding in Nature. A single pair of dopamine neurons in the fly brain could induce transient forgetting: temporary, reversible memory loss caused by brief dopamine release [14]. The memory was not destroyed. It was temporarily inaccessible. When the dopamine signal stopped, the memory returned.

What does this mean for the forgetting curve? Part of the rapid early decline may not be permanent erasure at all. Some of those "forgotten" memories may still be present, silenced by dopaminergic activity but retrievable under the right conditions.

The Immune Cells That Eat Your Memories

Perhaps the most surprising chapter in the forgetting story came in 2020, when Chao Wang and colleagues published a paper in Science that made neuroscientists rethink everything they knew about brain maintenance [15].

Microglia are the brain's immune cells. They make up about ten percent of all cells in the brain, and their traditional job description involves clearing debris, fighting infection, and mopping up after injury. But Wang's team at Zhejiang University discovered that microglia also eat synapses. Specifically, they engulf the synaptic components of engram cells, the neurons that physically store specific memories.

The mechanism is elegant and terrifying. The complement protein C1q tags certain synapses on engram cells, essentially painting a molecular "eat me" sign on them. Microglia recognize this tag through complement receptor CR3 and phagocytose (consume) the tagged synapses. When the researchers depleted microglia with the drug PLX3397, or separately inhibited microglial phagocytosis, forgetting stopped. Contextual fear memories that would normally fade over weeks remained fully intact.

They confirmed the mechanism by overexpressing CD55, a complement inhibitor, specifically in engram cells. This prevented C1q from tagging the synapses for destruction. Again, forgetting was blocked. The immune system of the brain was actively, selectively pruning the physical substrate of specific memories.

This finding connected to earlier developmental work by Beth Stevens at Harvard, who had shown that complement-mediated pruning sculpts neural circuits during brain development [16]. The same machinery that refines the young brain continues operating in the adult brain. It just switches targets from developmental excess to old memories.

New Neurons, Lost Memories

There is one more forgetting mechanism that deserves its own section, because it inverts an assumption held sacred for decades. Adult neurogenesis, the birth of new neurons in the mature brain, was supposed to be purely beneficial. More neurons equals better memory. That was the story. Until 2014, when Paul Frankland, Sheena Josselyn, and their team at the Hospital for Sick Children in Toronto proved otherwise [17].

Working with mice, Akers, Martinez-Canabal, Restivo, and colleagues showed that increasing neurogenesis after learning caused forgetting. When they boosted the production of new neurons in the dentate gyrus of the hippocampus (through exercise or genetic manipulation), mice forgot contextual fear memories and water-maze memories faster than controls. The new neurons were remodeling the existing circuits, disrupting the patterns that stored the old memories.

Even more striking was the reverse experiment. Infant mice normally forget quickly. Infantile amnesia, the reason you cannot remember your second birthday, is one of the most robust phenomena in memory science. Frankland's team suppressed neurogenesis in infant mice. The result? The infant mice stopped forgetting. Memories that would normally vanish within days persisted for weeks. High neurogenesis drove infantile amnesia. Slowing it down preserved infant memories.

Follow-up work by Epp and colleagues in 2016 showed that neurogenesis-mediated forgetting serves an important function: it minimizes proactive interference [18]. Old memories stored in the hippocampus can interfere with the encoding of new ones. By clearing out old traces, new neurons make room for new learning. Forgetting, in this light, is not a bug. It is a feature that keeps the system flexible.

Why Forgetting Is Not a Flaw

If the brain has at least four separate mechanisms for erasing memories, forgetting cannot be an accident. In 2022, Tomas Ryan and Paul Frankland at Trinity College Dublin and the University of Toronto published a unifying framework in Nature Reviews Neuroscience [19]. They proposed that forgetting is best understood as adaptive engram cell plasticity: regulated changes in the accessibility of engram cells and their synapses, calibrated to environmental predictability and behavioral relevance.

In a stable environment where the same information is consistently useful, the brain stabilizes engrams and resists forgetting. In a changing environment where old information becomes unreliable, the brain accelerates forgetting to make room for updated representations. The forgetting curve is not a fixed property of biology. It is adaptive. It responds to the world.

This explains several puzzles. Why are emotional memories so durable? Because the amygdala signals the hippocampus that emotionally salient information is likely to be relevant in the future, triggering consolidation mechanisms that resist the forgetting machinery [20]. Why does sleep slow forgetting? Because during slow-wave sleep, the hippocampus replays recent experiences and transfers selected memories to neocortical storage where they are protected from hippocampal erasure mechanisms [21]. Why does testing yourself on material slow forgetting more effectively than re-reading it? Because retrieval practice strengthens the cue-target associations that make an engram accessible, essentially reinforcing the memory's resistance to decay [22].

The Theories That Tried to Explain It

Before the molecular machinery was understood, psychologists spent a century debating why the forgetting curve exists. Their theories were not wrong. They were incomplete.

Decay theory says memories fade with time, like ink on paper exposed to sunlight. Thorndike proposed a "law of disuse" in 1914. John Brown revived the idea for short-term memory in 1958. The theory fell out of favor because interference experiments could explain most forgetting without invoking decay. But Ricker, Vergauwe and Cowan argued in 2016 that pure time-based decay remains plausible for non-verbal short-term memories, where interference is minimal [23].

Interference theory says memories are not lost but obscured by competing memories. John McGeoch argued in 1932 that forgetting reflects interference, not time [24]. Benton Underwood demonstrated in 1957 that much of the forgetting seen in classic experiments was caused by proactive interference from previously learned lists [25]. Retroactive interference, where new learning disrupts old memories, and proactive interference, where old learning disrupts new memories, remain well-established phenomena.

Retrieval failure theory says forgotten memories still exist but cannot be accessed because the right cues are missing. Endel Tulving and Donald Thomson formalized this as the encoding specificity principle in 1973: a retrieval cue works only if the information it provides was encoded together with the target at the time of learning [26]. This explains context-dependent memory, the familiar experience of remembering something only when you return to the place where you learned it, first demonstrated by Godden and Baddeley with divers in 1975 [27].

Motivated forgetting says the brain can deliberately suppress unwanted memories. Michael Anderson and Collin Green showed in 2001 using the think/no-think paradigm that repeatedly suppressing retrieval of a memory reduces later recall [28]. Prefrontal cortex actively inhibits hippocampal retrieval, a mechanism Anderson and Simon Hanslmayr later connected to specific neural oscillatory patterns [29].

The modern view, informed by molecular neuroscience, is that all four theories capture real phenomena operating at different levels. Decay maps onto AMPA receptor endocytosis and neurogenesis. Interference maps onto overlapping engram circuits. Retrieval failure maps onto the state of cue-engram associations. And motivated forgetting maps onto prefrontal-hippocampal suppression. The forgetting curve is not produced by a single mechanism. It is the aggregate of multiple overlapping processes.

What Bends the Curve

The forgetting curve is not destiny. It can be bent, flattened, and in some cases almost eliminated. The interventions that work best share a common feature: they force the brain to actively process the memory rather than passively receive it.

Spaced practice is the most powerful tool. Ebbinghaus himself noticed it in 1885: "with any considerable number of repetitions, a suitable distribution of them over a space of time is decidedly more advantageous than massing them at a single time." In 2006, Nicholas Cepeda and colleagues published a massive meta-analysis of 317 experiments confirming that distributing study sessions across time produces substantially better long-term retention than cramming [30]. A follow-up study in 2008 mapped the optimal spacing gap: roughly ten to twenty percent of the desired retention interval, with the best ratio shrinking as the retention interval grows [31]. If you need to remember something for a month, review it after three to six days. If you need to remember it for a year, review it after a month or so.
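The ten-to-twenty-percent rule of thumb translates directly into a small calculation. The sketch below assumes a fixed band, which is a simplification that fits short and medium retention intervals better than very long ones. The function name and default fractions are chosen for this example.

```python
def review_gap_days(retention_days: float, low: float = 0.10, high: float = 0.20) -> tuple:
    """Return the (earliest, latest) suggested gap before the first review, in days."""
    if retention_days <= 0:
        raise ValueError("retention_days must be positive")
    return (low * retention_days, high * retention_days)


earliest, latest = review_gap_days(30)
print(f"To remember for 30 days, review after {earliest:.0f} to {latest:.0f} days")
```

For a one-month goal this reproduces the three-to-six-day window. For much longer goals, treat the output as an upper bound rather than a prescription.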

Retrieval practice, also called the testing effect, is almost as powerful. Henry Roediger and Jeffrey Karpicke demonstrated in 2006 that testing yourself on material produces dramatically better long-term retention than re-reading it, even when the re-reading group spends more total time studying [22]. The act of retrieving a memory strengthens it. Reading does not.

Interleaving, mixing different types of problems or topics during practice rather than doing them in blocks, nearly doubled test performance in a study by Taylor and Rohrer in 2010 [32]. Robert Bjork at UCLA coined the term desirable difficulties for these counterintuitive strategies that slow learning down in the short term but dramatically improve retention in the long term [33].

Depth of processing also matters. Fergus Craik and Robert Lockhart proposed in 1972 that memories encoded at a deeper semantic level are more resistant to forgetting than those encoded superficially [34]. Asking "what does this word mean?" produces better retention than asking "is this word written in capital letters?" The deeper the processing, the flatter the curve.

And then there is sleep. Susanne Diekelmann and Jan Born at the University of Tübingen published a landmark review in 2010 showing that slow-wave sleep replays hippocampal memories and transfers them to neocortical storage [21]. This is why Murre and Dros found the upward bump at twenty-four hours. Sleep does not just protect memories from interference. It actively consolidates them, moving them from fragile hippocampal traces to more durable neocortical representations [35].

When Forgetting Goes Wrong

There is a dark side to this story. When the forgetting machinery malfunctions, the consequences can be devastating.

Chronic stress floods the hippocampus with cortisol, which impairs retrieval, suppresses neurogenesis, and causes dendritic atrophy [36]. People under sustained stress forget faster and remember worse. The forgetting curve steepens under cortisol.

Aging accelerates long-term forgetting. A 2020 study by Weston and colleagues found that accelerated long-term forgetting in healthy older adults predicted cognitive decline over the following year [37]. The forgetting curve becomes steeper with age, in part because the hippocampus shrinks, neurogenesis declines, and the molecular machinery of consolidation becomes less efficient.

Post-traumatic stress disorder presents the opposite problem. Traumatic memories resist forgetting. The amygdala tags them with such intense emotional significance that the normal forgetting machinery cannot erase them. The forgetting curve for trauma is nearly flat. The memory replays, unbidden, with the vividness of the original experience. Treatments like exposure therapy essentially try to build new, competing memories that gradually outcompete the traumatic engram rather than erase it, leveraging interference theory at the therapeutic level [29].

Even something as mundane as technology may be reshaping the curve. In 2011, Betsy Sparrow, Jenny Liu, and Daniel Wegner at Columbia University demonstrated the "Google effect": when people expect to have future access to information, they encode the content less deeply but remember where to find it [38]. The forgetting curve for content steepens, while the forgetting curve for source location flattens. The brain adapts its forgetting patterns to the information environment.

From Curves to Algorithms

The forgetting curve has practical descendants. Spaced repetition algorithms are essentially mathematical models of the curve, designed to schedule reviews at the optimal moment: just before a memory would be forgotten.

Paul Pimsleur introduced graduated-interval recall in 1967 [39]. Sebastian Leitner described his cardboard box system in 1972. Piotr Wozniak introduced the SM-2 algorithm in 1987 and documented it in 1990; it formalized the spacing of reviews based on self-rated difficulty and inspired the open-source scheduling engines that now serve millions of learners worldwide [40].
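To make the idea of scheduling from self-rated difficulty concrete, here is a compact sketch of SM-2 as it is commonly described: fixed first intervals of one and six days, then each interval multiplied by a per-card easiness factor that moves up or down with the rating. Implementations differ on details, such as whether the interval uses the easiness before or after the update. The `Card` class and `sm2_review` function are names chosen for this example, not part of any particular program.

```python
from dataclasses import dataclass


@dataclass
class Card:
    repetitions: int = 0      # consecutive successful reviews
    interval_days: int = 0    # days until the next review
    easiness: float = 2.5     # SM-2 "E-Factor", kept at or above 1.3


def sm2_review(card: Card, quality: int) -> Card:
    """Apply one review with a self-rating from 0 (blackout) to 5 (perfect)."""
    if not 0 <= quality <= 5:
        raise ValueError("quality must be between 0 and 5")

    if quality < 3:
        # Failed recall: restart the schedule, leave the easiness unchanged.
        return Card(repetitions=0, interval_days=1, easiness=card.easiness)

    # Successful recall: adjust the easiness first, then lengthen the interval.
    delta = 0.1 - (5 - quality) * (0.08 + (5 - quality) * 0.02)
    easiness = max(1.3, card.easiness + delta)
    repetitions = card.repetitions + 1
    if repetitions == 1:
        interval = 1
    elif repetitions == 2:
        interval = 6
    else:
        interval = round(card.interval_days * easiness)
    return Card(repetitions, interval, easiness)


card = Card()
for rating in (5, 5, 5):
    card = sm2_review(card, rating)
print(card)
```

Three perfect reviews in a row push the next review out to a little over two weeks in this version. A single failed review resets the interval to one day, which is the algorithm's blunt way of restarting the curve.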

The most recent development is FSRS (Free Spaced Repetition Scheduler), which Jarrett Ye published at the ACM KDD conference in 2022 and which has been integrated into a popular open-source flashcard platform since 2023. FSRS replaces the heuristic rules of SM-2 with parameters learned from actual review logs, modeling memory as three variables: retrievability (how likely you are to recall right now), stability (how resistant the memory is to decay), and difficulty (how hard the item inherently is). The result is a personalized forgetting curve for every item in every user's collection.
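The link between stability, retrievability, and scheduling can be sketched with the simplified exponential curve from earlier in this article, R = exp(-t / S). This is not FSRS's actual formula, and nothing below comes from its code. It only illustrates the general idea of reviewing when predicted recall falls to a chosen target. The function names and the 0.9 default are assumptions.

```python
import math


def retrievability(elapsed_days: float, stability: float) -> float:
    """Predicted probability of recall after elapsed_days, using R = exp(-t / S)."""
    return math.exp(-elapsed_days / stability)


def next_interval(stability: float, target_retention: float = 0.9) -> float:
    """Days until predicted recall falls to target_retention."""
    if not 0 < target_retention < 1:
        raise ValueError("target_retention must be between 0 and 1")
    return -stability * math.log(target_retention)


for s in (1, 10, 100):
    print(f"stability={s:>3}  review after {next_interval(s):.2f} days")
```

Under this model the interval grows in direct proportion to stability, so a memory that is ten times more stable can wait ten times longer. Difficulty is left out entirely. In a real scheduler it would shape how much stability grows after each review.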

The computational approach pioneered by John Anderson's ACT-R cognitive architecture models memory activation as a power function that decays with time but receives a boost with each retrieval [41]. Pavlik and Anderson extended this in 2005 to show how the spacing effect emerges naturally from the model [42]. Each study episode creates a trace whose decay rate depends on activation at the time of study. Spaced study creates traces that decay more slowly because they are encoded when activation is lower, producing a more durable memory.
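The mechanism in that last step can be traced in a few lines. The sketch follows the equations as they are usually stated for this model: activation is the log of summed power-law traces, and each trace's decay rate is c * exp(activation at study time) + a. The values of c and a, the time units, and the two study schedules are illustrative assumptions, not fitted parameters.

```python
import math


def activation(now: float, study_times: list, decays: list) -> float:
    """Log of summed power-law traces, one trace per earlier study episode."""
    total = sum((now - t) ** (-d) for t, d in zip(study_times, decays) if t < now)
    return math.log(total) if total > 0 else float("-inf")


def trace_decays(study_times: list, c: float = 0.2, a: float = 0.2) -> list:
    """Decay rate of each trace: d_i = c * exp(activation just before study i) + a."""
    decays = []
    for i, t in enumerate(study_times):
        prior = activation(t, study_times[:i], decays)  # -inf before the first study
        decays.append(c * math.exp(prior) + a)
    return decays


def activation_at_test(study_times: list, test_time: float) -> float:
    """Activation at test_time given every study episode before it."""
    return activation(test_time, study_times, trace_decays(study_times))


massed = [0.0, 1.0, 2.0]       # three episodes back to back (arbitrary time units)
spaced = [0.0, 50.0, 100.0]    # the same three episodes spread out
delay = 500.0                  # test this long after the final episode

print("massed:", activation_at_test(massed, massed[-1] + delay))
print("spaced:", activation_at_test(spaced, spaced[-1] + delay))
```

In the massed schedule the second and third episodes arrive while activation is still high, so their traces get steep decay rates and contribute little by test time. In the spaced schedule activation has dropped before each new episode, the traces decay more gently, and activation at the delayed test comes out higher.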

These algorithms are translations of the forgetting curve into engineering. They do not change the biology. But they exploit the biology intelligently, scheduling each review at the point where the forgetting curve is about to push retention below a useful threshold.

What We Still Do Not Know

The forgetting curve is one of the best-replicated findings in all of psychology. But significant questions remain.

First, the exact mathematical form of the curve is still debated. Power and logarithmic functions provide the best fits at the group level, but whether this reflects the true shape of individual-item decay or emerges from averaging remains unresolved [7].

Second, the relationship between the four molecular mechanisms (AMPA receptor endocytosis, dopaminergic intrinsic forgetting, neurogenesis-mediated remodeling, and microglial pruning) is unclear. Do they operate in sequence, in parallel, or on different time scales? How do they interact? A unified cellular model of forgetting does not yet exist [19].

Third, most of the molecular evidence comes from rodents and fruit flies. Adult neurogenesis in the human hippocampus remains contentious: Boldrini and colleagues reported robust neurogenesis in 2018 [43], but Sorrells and colleagues found it virtually absent in the same year [44]. If human hippocampal neurogenesis is minimal, the neurogenesis-mediated forgetting mechanism may contribute less to the human forgetting curve than animal studies suggest.

Fourth, almost all laboratory studies of the forgetting curve use verbal materials, lists, or simple associations. Autobiographical memories, procedural skills, and emotional memories follow different curves. Harry Bahrick showed in 1984 that foreign-language vocabulary retained through spaced practice can persist for decades with minimal further review [45]. The forgetting curve for deeply practiced skills may be nearly flat.

Finally, many popular sources cite specific percentages as though they were universal: "you forget 50% in one hour, 70% in 24 hours, 90% in a week." These numbers come from Ebbinghaus's single-subject data with nonsense syllables. They are not universal laws. The actual shape and steepness of the curve depend on the material, the learner, the depth of encoding, the presence of sleep, and dozens of other variables. Treating them as fixed constants is a misuse of the original research.

Conclusion

Hermann Ebbinghaus sat alone in his Berlin apartment in the early 1880s, memorizing syllables that meant nothing. He had no funding, no laboratory, no research team. Just himself, a metronome, and an obsessive commitment to measurement. What he found, plotted on a simple graph, turned out to be one of the most fundamental laws of the mind: memories decay fastest in the first hours, then taper into a long, slow decline.

For over a century, that curve was treated as an unfortunate fact of biology. A design flaw. A leak in the system. But the past two decades of neuroscience have revealed something far more interesting. The brain does not forget by accident. It forgets on purpose. Enzymes pull receptors from synapses. Dopamine neurons actively erase traces. New neurons remodel old circuits. Immune cells consume the physical connections that held memories together. Each mechanism serves a function: preventing overload, reducing interference, maintaining flexibility.

The forgetting curve is not a flaw. It is a calibration. And because it is biological, it can be influenced. Spacing your study. Testing yourself instead of re-reading. Sleeping after learning. Exercising. These are not study tips. They are interventions that directly interact with the molecular machinery described in this article. They bend the curve not through willpower, but through biology.

Ebbinghaus could not have known any of this. He could not have imagined microglia eating synapses, or dopamine neurons silencing engrams, or infant mice remembering things they were supposed to forget. But the curve he drew in 1885, alone in that apartment, with nothing but nonsense and a clock, turned out to be the beginning of a story that is still being written.

Frequently Asked Questions

What is the forgetting curve?

The forgetting curve is a graph showing how memory retention declines over time when no effort is made to review the learned material. First measured by Hermann Ebbinghaus in 1885, it shows that most forgetting happens within the first few hours, then slows down gradually over days and weeks.

How quickly do we forget new information?

Popular summaries often say that without review, people retain about 50 percent of new information after one hour, roughly 30 percent after 24 hours, and only about 10 percent after a week. These figures trace back to Ebbinghaus's single-subject experiments with nonsense syllables and are not universal constants. Actual retention varies widely with the material, the depth of encoding, sleep, and the individual learner.

What is the best way to fight the forgetting curve?

Spaced repetition and retrieval practice are the two most effective strategies. Spacing study sessions over time and actively testing yourself on material produce substantially better long-term retention than passive re-reading or cramming. Sleep after learning also significantly improves consolidation.

Does the brain forget on purpose?

Yes. Modern neuroscience has identified at least four active forgetting mechanisms including AMPA receptor removal from synapses, dopamine-driven erasure, neurogenesis-mediated circuit remodeling, and microglial synaptic pruning. Forgetting helps prevent overload, reduce interference between memories, and maintain cognitive flexibility.

Has the Ebbinghaus forgetting curve been replicated?

Yes. In 2015, Jaap Murre and Joeri Dros at the University of Amsterdam replicated the forgetting curve using methods closely matching those of Ebbinghaus. Their results closely paralleled the original 1885 data, confirming the general shape of the curve while also revealing the beneficial effect of overnight sleep on retention.