Introduction

A patient arrives in the emergency department at 2 a.m. Chest pain. Shortness of breath. Mild fever. Elevated troponin. The resident on call has been awake for twenty-two hours. She holds three competing diagnoses in her mind: acute coronary syndrome, pulmonary embolism, pericarditis. Then the nurse interrupts with a question about a different patient. Then the pager buzzes. Then the lab calls with another result. Somewhere in the middle of those interruptions, the differential narrows to one diagnosis. But is it the right one?

This question sits at the intersection of two fields that rarely talk to each other: cognitive neuroscience and clinical medicine. On one side, decades of laboratory research have mapped the architecture of working memory, the brain's small, fragile mental workspace that holds and manipulates information in real time [1]. On the other side, a growing body of patient safety research has documented the staggering frequency of diagnostic errors, with roughly 12 million American adults experiencing a misdiagnosis every year [2]. The connection between these two findings is direct. Every clinical decision runs through a cognitive bottleneck that holds about four items for about twenty seconds. When that bottleneck is overwhelmed by interruptions, fatigue, or stress, errors follow [3].

This is the story of that bottleneck: how it was discovered, how it works in the brain, why it fails in the clinic, and what can be done about it.

The Four-Item Ceiling

In 1956, a Harvard psychologist named George Miller published one of the most famous papers in the history of cognitive science. Its title was playful: "The Magical Number Seven, Plus or Minus Two" [4]. Miller had noticed something odd. In experiment after experiment, across different laboratories and different sensory modalities, people seemed to hit a wall at about seven items when asked to hold information in immediate memory. Seven digits. Seven tones. Seven words. The number kept appearing.

But Miller's number was misleading. He knew it, too. He was careful to note that "there is nothing magical about the number seven." What mattered was not the raw number of items but the size of each item. People could hold seven single digits, or seven words, or seven chess positions. The trick was a process Miller called "chunking," grouping small pieces of information into larger meaningful units. A phone number like 4-1-5-5-5-5-1-2-3-4 is ten items. Grouped as 415-555-1234, it becomes three chunks. Chunking effectively multiplied memory capacity without changing the underlying biology.
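The phone-number example can be made concrete with a few lines of Python. This is only an illustrative sketch: the `chunk` helper and the 3-3-4 grouping are assumptions chosen to match the example above, not anything taken from Miller's paper.

```python
def chunk(digits: str, sizes: list[int]) -> list[str]:
    """Split a digit string into consecutive groups of the given sizes."""
    if sum(sizes) != len(digits):
        raise ValueError("group sizes must cover every digit exactly once")
    chunks, start = [], 0
    for size in sizes:
        chunks.append(digits[start:start + size])
        start += size
    return chunks


number = "4155551234"
unchunked = list(number)             # every digit is its own item
chunked = chunk(number, [3, 3, 4])   # area code, prefix, line number

print(len(unchunked), "items:", "-".join(unchunked))
print(len(chunked), "chunks:", "-".join(chunked))
```

The information content is identical in both cases. Only the number of units that must be held at once changes, from ten to three.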

It took forty-five years for someone to measure the true biological limit. In 2001, Nelson Cowan at the University of Missouri published a target article in Behavioral and Brain Sciences titled "The Magical Number 4 in Short-Term Memory" [5]. Cowan's argument was rigorous. When you prevent people from chunking, when you prevent them from rehearsing, when you strip away every cognitive trick, the real capacity of the central memory store is three to five items. Not seven. Three to five. A follow-up paper in 2010 called this "a central memory store limited to 3 to 5 meaningful items in young adults" [6].

For a clinician, this number is sobering. It means that any unaided differential diagnosis beyond four or five competing hypotheses is, neurologically, an exercise in self-deception. The brain is not holding all of them simultaneously. It is cycling through them, dropping some, picking others back up, and losing information at every transition.

Baddeley's Blueprint

Knowing the capacity limit was one thing. Understanding the architecture was another.

In 1974, Alan Baddeley and Graham Hitch, two British psychologists at the University of Stirling, published a chapter that redrew the map of short-term memory [1]. Before them, the dominant model was Atkinson and Shiffrin's "multi-store" framework from 1968, which treated short-term memory as a single passive box between the senses and long-term storage. Information came in, sat in the box for a while, and either moved to long-term memory or vanished. Baddeley and Hitch thought this was too simple. Their experiments showed that people could hold a string of digits in memory while simultaneously performing reasoning tasks. If short-term memory were a single box, both tasks should compete for the same space and performance should collapse. It did not.

Their solution was to split the single box into a working system with multiple parts. The first is the phonological loop, a brief auditory store lasting about 1 to 2 seconds, paired with an articulatory rehearsal mechanism. This is the "inner voice" that repeats the patient's chief complaint while you examine them. The second is the visuospatial sketchpad, a separate store for visual and spatial information. This is the mental image of the CT scan you just reviewed. The third is the central executive, a limited-capacity attention controller that directs traffic between the other components. And in 2000, Baddeley added a fourth piece: the episodic buffer, a system that binds information from the other components with long-term memory into coherent episodes [7].

In January 2025, Hitch, Allen, and Baddeley published a fifty-year retrospective in the Quarterly Journal of Experimental Psychology. Their verdict: the model's "longevity reflects not only the importance of working memory in cognition but also the usefulness of a simple, robust framework" [8].

What does this mean for a clinician at the bedside? Consider a physician examining a patient with acute abdominal pain. The phonological loop holds the verbal report: "sharp pain, right lower quadrant, started six hours ago." The visuospatial sketchpad holds the mental image of McBurney's point. The central executive is deciding whether to order a CT scan or proceed to surgical consult. And the episodic buffer is integrating all of this with the memory of a similar case from last month that turned out to be a ruptured ovarian cyst, not appendicitis. All of this runs on a workspace that holds four items for twenty seconds.

The Prefrontal Cortex: Where Diagnoses Are Made and Lost

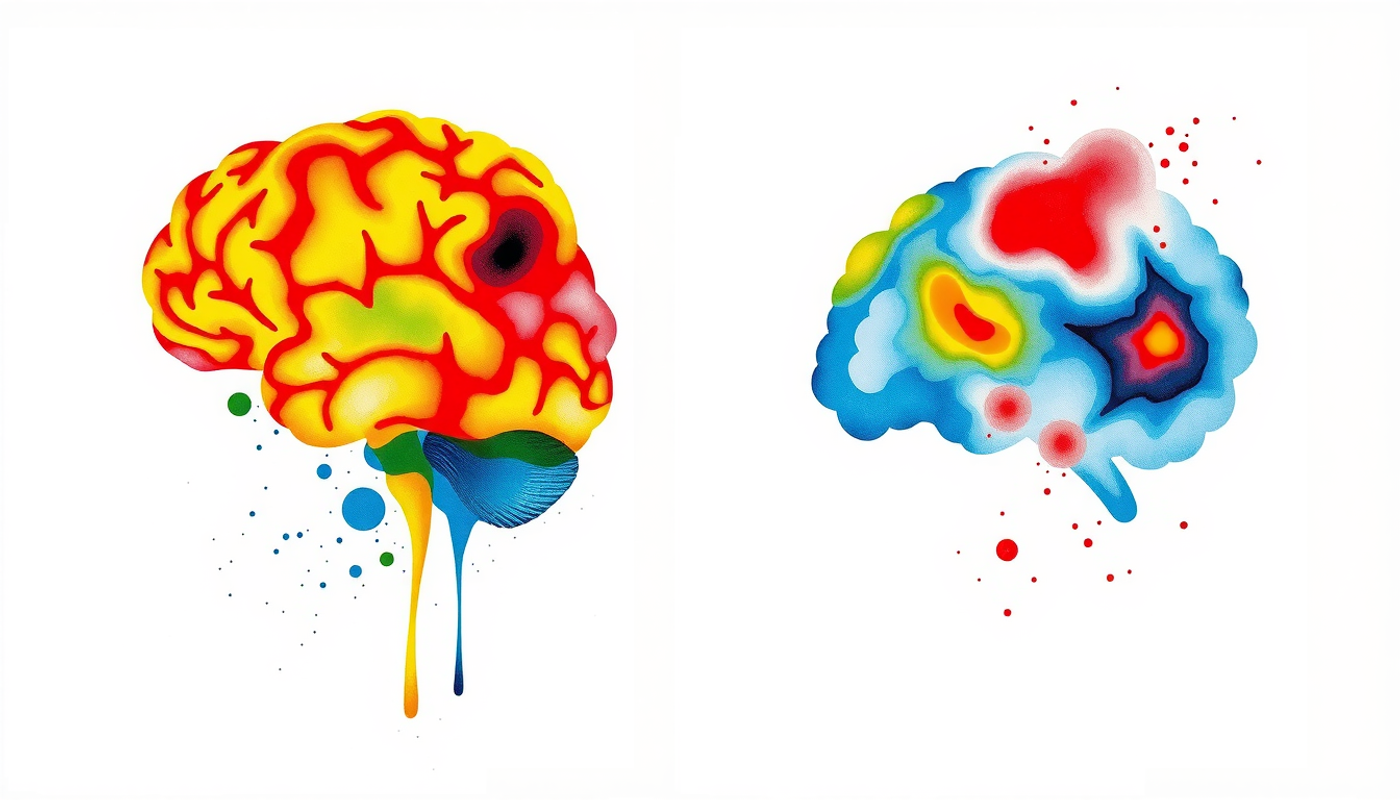

The architecture described by Baddeley is a psychological model. The physical machinery lives in the brain's prefrontal cortex, specifically the dorsolateral region, a strip of neural tissue behind the forehead that is the last part of the brain to mature and the first to degrade under stress.

In 1989, Shintaro Funahashi, Charles Bruce, and Patricia Goldman-Rakic at Yale published a landmark paper in the Journal of Neurophysiology [9]. They recorded from individual neurons in the dorsolateral prefrontal cortex (dlPFC) of monkeys performing a delayed-response task. The monkey saw a stimulus, the stimulus disappeared, and after a delay the monkey had to indicate where it had been. During the delay, specific prefrontal neurons kept firing, maintaining the memory of the stimulus location in the absence of any external cue. These "delay cells" were the physical substrate of working memory: neurons that held information online through persistent activity.

The story gets more interesting with neurochemistry. In 2007, Sridharan Vijayraghavan and colleagues in Amy Arnsten's laboratory at Yale demonstrated something that every sleep-deprived resident should know [10]. They applied dopamine directly to prefrontal neurons in behaving monkeys while the animals performed a working memory task. The result was an inverted-U dose-response curve. Too little dopamine and the neurons could not sustain their firing. Too much dopamine and the neurons became noisy and unfocused. Only at an intermediate level did working memory function optimally. A 2022 meta-analysis in NeuroImage confirmed this inverted-U relationship in humans [11].

The clinical translation is immediate. The under-aroused post-call resident and the over-aroused trauma chief are sitting at opposite ends of the same dopamine curve. Both have impaired prefrontal function. Both are more likely to miss critical diagnostic information. The optimal zone is narrow, and most clinical environments push physicians away from it.
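The shape of an inverted-U curve is easy to see in a toy model. The sketch below is purely illustrative: the Gaussian form, the arbitrary dopamine units, and the `optimum` and `width` parameters are all assumptions made for this example, and none of them are taken from the studies cited above.

```python
import math


def wm_performance(dopamine: float, optimum: float = 1.0, width: float = 0.4) -> float:
    """Toy inverted-U: performance peaks at `optimum` and falls off on both sides.

    All units and parameter values are hypothetical.
    """
    return math.exp(-((dopamine - optimum) ** 2) / (2 * width ** 2))


# Three hypothetical states along the curve.
for label, level in [("under-aroused", 0.2), ("intermediate", 1.0), ("over-aroused", 1.8)]:
    score = wm_performance(level)
    print(f"{label:>13}: dopamine={level:.1f} -> relative performance={score:.2f}")
```

In this toy model the under-aroused and over-aroused states score equally badly even though they sit at opposite ends of the dopamine axis, which is the point of the comparison between the post-call resident and the trauma chief.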

Two Systems, One Brain, Countless Errors

How do physicians actually think when diagnosing a patient? The best available answer comes from dual process theory, a framework imported from cognitive psychology into medicine by Pat Croskerry at Dalhousie University.

Croskerry's 2009 paper in Advances in Health Sciences Education laid out the model [12]. System 1 thinking is fast, automatic, and pattern-based. It requires almost no working memory. An experienced cardiologist who sees a patient with sudden tearing chest pain radiating to the back and a widened mediastinum does not deliberate. The pattern triggers an instant recognition: aortic dissection. System 2 thinking is slow, analytic, and effortful. It loads heavily on working memory. A second-year medical student faced with the same patient must systematically walk through the differential: Could this be an MI? A PE? Esophageal rupture? Pericarditis? Each possibility must be held in the workspace, compared against the evidence, and evaluated.

Both systems fail. But they fail in different ways.

System 1 fails through cognitive biases. Anchoring: locking onto the first diagnosis and failing to adjust. Premature closure: accepting a diagnosis before it is fully verified. Availability bias: overestimating the probability of a diagnosis because a recent case comes easily to mind. Croskerry catalogued more than thirty of these biases in a 2003 Academic Medicine paper [13]. A Japanese study of 130 physicians found an average of 3.08 cognitive biases per diagnostic error, with anchoring appearing in 60% of cases, premature closure in 58.5%, and availability bias in 46.2% [14].

System 2 fails when working memory is overwhelmed. Norman, Monteiro, Sherbino, and colleagues published the definitive synthesis in Academic Medicine in 2017 [15]. Their conclusion was blunt: "Errors in Type 2 reasoning may result from the limited capacity of working memory, which constrains computational processes." And their most provocative finding was this: "Educational strategies directed at the recognition of biases are ineffective in reducing errors. Conversely, strategies focused on the reorganization of knowledge to reduce errors have small but consistent benefits."

In other words, teaching doctors about their biases does not help. Teaching them better medical knowledge does.

The Expert's Secret Weapon

If working memory is so small, how do experienced clinicians manage the enormous complexity of real patients? The answer lies in a concept called illness scripts.

Henk Schmidt and Rolf Rikers at Erasmus University Rotterdam spent decades studying how medical expertise develops. Their work, published in Medical Education, describes a progression that transforms working memory from a bottleneck into a gateway [16]. Early in training, medical students accumulate facts. Lots of facts. Individual symptoms, lab values, pathophysiology steps, drug mechanisms. Each fact occupies a separate slot in working memory. A first-year student reasoning about chest pain must hold ten or fifteen separate pieces of information, far exceeding the four-item limit.

As expertise develops, those facts get compressed. Related pieces of information merge into schemas, organized knowledge structures that can be retrieved as a single unit. And the most refined form of schema is the illness script: a pre-compiled mental package containing the predisposing conditions, the pathophysiological insult, and the clinical consequences of a disease. Lubarsky and colleagues put the educational implication directly in a 2015 paper: "Studies of expertise development in medicine have consistently shown that those considered to be 'experts' are distinguished not by their superior problem-solving skills, nor by their enhanced capacity for memory retrieval, but by the content and organization of their knowledge base" [17].

The cardiologist does not hold ten separate features when seeing an aortic dissection. She holds one chunk: "aortic dissection presentation." Same data. One slot instead of ten. This is chunking applied to clinical reasoning, and it is the only known way to functionally increase working memory capacity.

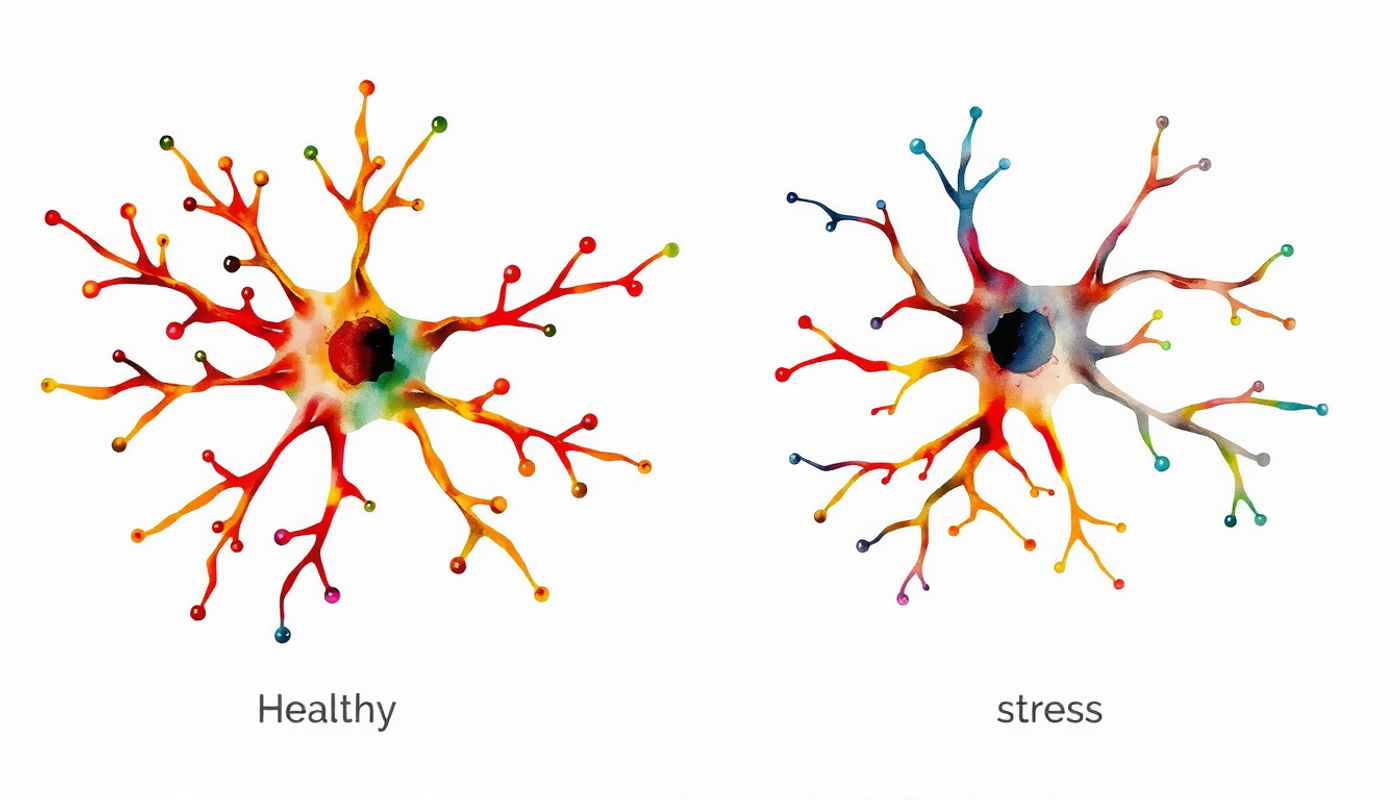

Paul Hruska and colleagues at the University of Calgary provided the neural evidence. In 2016, they used fMRI to compare second-year medical students with practicing gastroenterologists while both groups reasoned through clinical cases [18]. The finding was striking. Novices showed greater activation in the prefrontal cortex for both easy and hard cases, meaning they were relying more heavily on working memory. Experts showed reduced prefrontal activation and increased activity in regions associated with pattern recognition and memory retrieval. The neural efficiency hypothesis, the idea that experts do more with less prefrontal effort, was confirmed directly in clinical reasoning.

When the Workspace Breaks

The machinery is fragile. Three forces routinely break it in clinical settings: interruptions, sleep deprivation, and stress.

Johanna Westbrook and colleagues at Macquarie University conducted the most revealing study of interruptions in clinical practice. Published in BMJ Quality & Safety in 2018, the study followed 36 emergency physicians for 120 hours, recording every task, every interruption, every error [19]. The numbers were stark.

Physicians experienced 7.9 interruptions per hour on average. While actively prescribing medications, the rate rose to 9.4 interruptions per hour. When interrupted, the error rate increased 2.82-fold. When multitasking, it increased 1.86-fold. And physicians who reported below-average sleep showed a greater than 15-fold increase in clinical error rate. The study also measured each physician's working memory capacity using the Operation Span Task. Higher capacity provided some protection against the effects of interruptions, but no physician was immune.

Sleep deprivation attacks working memory directly. A study of 39 internal medicine residents tracked working memory capacity across the call cycle using the OSPAN test [20]. Mean working memory scores dropped on post-call days and never fully recovered during the call month. Even on the day before the next call, scores remained below baseline. The workspace was operating at reduced capacity throughout the entire rotation.

The scale of the resulting harm is enormous. Trockel and colleagues surveyed 7,538 physicians nationally in a 2020 JAMA Network Open study [21]. Moderate, high, and very high sleep-related impairment were associated with 53%, 96%, and 97% greater odds of self-reported clinically significant medical error, respectively. These associations persisted after adjusting for burnout, specialty, and hours worked.
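"Greater odds" is easy to misread as "greater probability." The sketch below converts the reported odds increases into probabilities under an assumed baseline. The 10% baseline is a hypothetical value chosen only for illustration and does not come from the study, and the helper name `probability_from_odds_ratio` is likewise an assumption of this example.

```python
def probability_from_odds_ratio(baseline_prob: float, odds_ratio: float) -> float:
    """Apply an odds ratio to a baseline probability and return the new probability."""
    if not 0.0 < baseline_prob < 1.0:
        raise ValueError("baseline_prob must be strictly between 0 and 1")
    baseline_odds = baseline_prob / (1.0 - baseline_prob)
    new_odds = baseline_odds * odds_ratio
    return new_odds / (1.0 + new_odds)


BASELINE = 0.10  # hypothetical baseline error probability, NOT from the study

# Percentage increases in odds as reported in the text above.
for label, pct_greater_odds in [("moderate", 53), ("high", 96), ("very high", 97)]:
    odds_ratio = 1 + pct_greater_odds / 100
    p = probability_from_odds_ratio(BASELINE, odds_ratio)
    print(f"{label:>9}: odds ratio {odds_ratio:.2f} -> probability {p:.1%} (baseline {BASELINE:.0%})")
```

Under this assumed baseline, nearly doubling the odds does not quite double the probability. The gap between the two grows as the baseline rate rises.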

Stress and the Offline Brain

Amy Arnsten at Yale School of Medicine has spent three decades studying what stress does to the prefrontal cortex. Her 2009 review in Nature Reviews Neuroscience is the foundational text [22].

The story is straightforward and alarming. Even mild acute uncontrollable stress causes a rapid loss of prefrontal cognitive abilities. The mechanism involves norepinephrine and dopamine flooding the prefrontal cortex. At moderate levels, these neurotransmitters help working memory function. But under stress, concentrations spike. Alpha-1 adrenergic and D1 dopaminergic overstimulation activate a signaling cascade (cAMP-PKA) that opens ion channels in prefrontal neurons, shunting excitatory currents and effectively disconnecting the prefrontal cortex from the rest of the brain.

Control shifts. The reflective prefrontal system goes offline. The reactive amygdala-striatum system takes over. Pattern recognition and habitual responses dominate. Analytical reasoning disappears. Arnsten's research at Yale has shown that this shift happens rapidly and can persist for hours after the stressor resolves [23].

Chronic stress is worse. Sustained cortisol exposure causes dendritic atrophy and spine loss in prefrontal pyramidal neurons. The physical structure of the working memory machinery degrades. Qin, Hermans, van Marle, and colleagues showed in their 2009 Frontiers in Human Neuroscience paper that stress-induced hormone-neurotransmitter interactions specifically impair prefrontal function while amplifying amygdala reactivity [24].

Consider the emergency physician at hour eighteen of a shift. A trauma alert comes in. Cortisol surges. Norepinephrine floods the prefrontal cortex. The physician's working memory narrows. Pattern recognition takes over. If the pattern fits, the diagnosis is fast and accurate. If it does not, if the patient has an unusual presentation that requires careful analytical reasoning, the physician is now operating without the cognitive tool needed to detect it. This is not a failure of character. It is a failure of biology.

Twelve Million Errors a Year

How large is the problem? The 2015 National Academies report Improving Diagnosis in Health Care established the numbers [3]. Five percent of U.S. adults who seek outpatient care each year experience a diagnostic error. Postmortem studies show that diagnostic errors contribute to approximately 10% of patient deaths. Medical record reviews suggest that diagnostic errors account for 6 to 17% of hospital adverse events.

Singh, Meyer, and Thomas translated the 5% rate into absolute terms in their 2014 BMJ Quality & Safety paper [2]. Roughly 12 million American adults experience a diagnostic error every year. About half of those errors could potentially cause harm.
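As a back-of-envelope consistency check, the two figures quoted above can be combined to see what population they imply. This is simple arithmetic on the numbers in the text, not a reconstruction of the authors' method, and the variable names are illustrative.

```python
RATE_PERCENT = 5                # diagnostic error rate among adults seeking outpatient care
ERRORS_PER_YEAR = 12_000_000    # annual number of adults affected, as quoted above

# Population implied by the two figures (integer arithmetic avoids float rounding).
implied_adults = ERRORS_PER_YEAR * 100 // RATE_PERCENT

# "About half" of the errors could potentially cause harm.
potentially_harmful = ERRORS_PER_YEAR // 2

print(f"Implied adults seeking outpatient care: {implied_adults:,}")
print(f"Errors with potential for harm:         {potentially_harmful:,}")
```

The implied base is 240 million adults, and the "about half" figure corresponds to roughly 6 million potentially harmful errors per year.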

Newman-Toker and colleagues sharpened the picture in 2021 with their analysis of the diagnostic "Big Three": vascular events, infections, and cancers [25]. Disease-specific error rates ranged from 2.2% to as high as 62.1% for spinal and intracranial abscesses. A 2019 Medscape poll of 633 physicians found that emergency medicine doctors were the highest-reporting specialty for daily diagnostic errors at 26% [26].

Not all of these errors originate in working memory failures. Knowledge gaps, system failures, communication breakdowns, and equipment malfunctions all contribute. But cognitive factors, the category that includes working memory overload, account for the largest share. Graber, Franklin, and Gordon's landmark 2005 study found that cognitive factors contributed to 74% of diagnostic errors, and most errors involved more than one cognitive factor simultaneously [27].

The Checklist Revolution

If the problem is a biological workspace that holds four items, one obvious solution is to move items off the workspace and onto paper.

The defining proof came in 2009. Atul Gawande's team published the WHO Surgical Safety Checklist trial in the New England Journal of Medicine [28]. Across eight hospitals in eight countries, from high-income Toronto to low-income Ifakara, a 19-item checklist reduced inpatient mortality from 1.5% to 0.8% and complications from 11.0% to 7.0%. The mechanism was pure cognitive offloading. Items that a fatigued surgeon would otherwise have to retrieve from working memory (antibiotic timing, patient identity confirmation, site verification) were externalized to a piece of paper. The workspace was freed for the unpredictable aspects of the operation.

But the story has a second chapter that is equally important. In 2014, Urbach, Govindarajan, Saskin, Wilton, and Baxter published a different study in the same journal [29]. They analyzed 215,711 procedures across 101 hospitals in Ontario, Canada, where the checklist had been mandated by the government. The result? Mortality went from 0.71% to 0.65%, a non-significant change. Complication rates barely moved. The checklist had been implemented as a bureaucratic requirement, not as a team communication tool. Nurses ticked boxes. Surgeons continued operating. Nobody paused. Nobody briefed. The cognitive offloading mechanism was never activated because the team was not actually using the information on the checklist.

The lesson is precise. Cognitive offloading works, but only when the offloading is real. A checklist that sits on a clipboard is paper. A checklist that forces a team to stop, communicate, and verify is a cognitive tool. The underlying logic of working memory offloading is sound. Implementation determines whether lives are saved.
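The size of the contrast between the two studies is clearer when the before-and-after rates are turned into absolute and relative changes. The sketch below uses only the percentages quoted above. The `rate_change` helper is an illustrative assumption, and the results are observed differences, not causal estimates.

```python
def rate_change(before_pct: float, after_pct: float) -> dict:
    """Summarize the change between two event rates given in percent."""
    absolute = before_pct - after_pct    # percentage points
    relative = absolute / before_pct     # fraction of the starting rate
    per_10k = absolute * 100             # fewer events per 10,000 procedures
    return {"absolute_pp": absolute, "relative": relative, "per_10k": per_10k}


comparisons = [
    ("WHO trial, mortality", 1.5, 0.8),
    ("WHO trial, complications", 11.0, 7.0),
    ("Ontario, mortality", 0.71, 0.65),
]

for label, before, after in comparisons:
    change = rate_change(before, after)
    print(
        f"{label:<26} {before:>5.2f}% -> {after:>5.2f}%  "
        f"absolute {change['absolute_pp']:.2f} pp, "
        f"relative {change['relative']:.1%}, "
        f"about {change['per_10k']:.0f} fewer per 10,000"
    )
```

By this arithmetic the WHO trial's mortality difference is roughly 70 fewer deaths per 10,000 procedures, against roughly 6 per 10,000 in Ontario, and the Ontario difference was not statistically significant.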

The same principle extends beyond surgery. Structured handoff tools like I-PASS and SBAR, clinical decision support alerts, and standardized order sets all serve the same cognitive function. They remove items from a four-item mental workspace and place them in an unlimited external one.

Building Better Brains

Cognitive offloading removes items from the workspace. But there is a second strategy: making each item smaller. This is what expertise does. Through years of deliberate practice, a physician compresses dozens of individual clinical features into single illness script chunks. Each chunk occupies one slot. Four slots can now hold the information that used to require twenty.

K. Anders Ericsson formalized this process in his 1993 Psychological Review paper on deliberate practice [30]. The core requirements: focused engagement with specific subskills, immediate feedback, repetition at the edge of current ability, and explicit goals for improvement. McGaghie and colleagues demonstrated strong effects of deliberate practice on clinical skills, including central line insertion and advanced cardiac life support [31].

For diagnostic reasoning specifically, the most effective method is spaced retrieval practice: actively recalling information at increasing intervals. Each successful retrieval strengthens the schema, making it faster to access and more resistant to interference. Over time, retrieval becomes automatic. The diagnosis that once required five minutes of effortful System 2 analysis now appears in System 1 within seconds. Working memory is freed for the genuinely novel features of the case.
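"Increasing intervals" can be sketched as a simple expanding schedule. The example below is a minimal illustration, assuming a first gap of one day that doubles after each review. The function name, the starting gap, and the doubling factor are all hypothetical choices, not a validated or recommended protocol.

```python
from datetime import date, timedelta


def review_schedule(
    start: date,
    first_gap_days: int = 1,
    factor: float = 2.0,
    reviews: int = 5,
) -> list[date]:
    """Return review dates whose gaps grow by `factor` after each review."""
    schedule = []
    gap = float(first_gap_days)
    current = start
    for _ in range(reviews):
        current = current + timedelta(days=round(gap))
        schedule.append(current)
        gap *= factor  # the next gap is longer than the last
    return schedule


# Hypothetical example: an illness script first studied on 1 January 2025.
for review_date in review_schedule(date(2025, 1, 1)):
    print(review_date.isoformat())
```

With these assumed parameters the gaps are 1, 2, 4, 8, and 16 days, so five reviews cover a full month of retention.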

Simulation-based training extends this principle. By repeatedly rehearsing high-stakes scenarios in a controlled environment, trainees move components of the clinical response from effortful to automatic. McGaghie's mastery-learning research reports large effect sizes. The resident who has managed fifty simulated cardiac arrests does not need to deliberate about the ACLS algorithm. It runs automatically, freeing working memory for the unexpected finding that does not fit the pattern.

What Sleep, Exercise, and Mindfulness Actually Do

Three lifestyle factors affect the biological substrate of working memory. The evidence for each is real but uneven.

Sleep is the strongest. The residency studies and the Westbrook field data converge: protecting sleep protects working memory. Even partial sleep restriction degrades attention, working memory, and decision making, with vigilance the most sensitive domain [32]. Duty-hour regulations, strategic napping during extended shifts (supported by NASA fatigue countermeasure research), and disciplined sleep hygiene produce the largest gains for the least effort.

Exercise is moderately strong. Aerobic exercise increases brain-derived neurotrophic factor (BDNF), a protein essential for synaptic plasticity. Ishihara and colleagues published a large individual-participant-data meta-analysis in 2021 covering 109 studies [33]. The overall effect of acute aerobic exercise on executive functions including working memory was a standardized mean difference of 0.46, a moderate effect. Benefits were most pronounced in older adults and children. In younger adults the effects were smaller but consistent.

Mindfulness is the weakest and most contested. A 2025 systematic review and meta-analysis of 29 randomized trials found a medium effect size (Hedges' g approximately 0.44) for mindfulness interventions on working memory [34]. But study quality is variable. Quach, Mano, and Alexander's 2016 randomized trial found significant working memory gains with four weeks of mindfulness meditation in adolescents [35]. A more rigorous 2018 trial with an active control found no benefit after two weeks. The honest summary: longer practice, at least eight weeks, shows reliable small-to-moderate effects, especially in people who are stressed at baseline. This makes it plausibly useful for medical learners. Not a panacea.

A Timeline of Discovery

The story of working memory in clinical decisions did not unfold in a straight line. It emerged from the collision of cognitive psychology, neuroscience, and patient safety research over seven decades.

Each milestone added one piece to the puzzle. Miller showed the workspace was small. Baddeley showed it had parts. Goldman-Rakic showed where it lived. Arnsten showed what broke it. Croskerry showed how it failed in the clinic. And Westbrook showed what it cost in real patient harm.

The Debate That Will Not Die

The picture presented so far is cleaner than reality. Several debates remain unresolved.

First, is dual process theory an oversimplification? Norman and colleagues caution that the original strong claim, that System 1 is bias-prone and System 2 is corrective, does not survive scrutiny [15]. Rapid pattern recognition is often more accurate than slow analysis in experienced clinicians. Slowing down does not reliably reduce error. The relationship between speed, accuracy, and working memory load is more complex than the two-system framework suggests.

Second, does cognitive load theory in medical education (as formulated by Sweller and adapted by van Merriënboer) apply cleanly to the chaos of real clinical environments [36]? The theory was developed for instructional design in controlled settings. The emergency department is not a controlled setting. Intrinsic, extraneous, and germane load blend together in ways that are difficult to measure or separate. Young, van Merriënboer, Durning, and ten Cate acknowledged these limitations in their 2014 AMEE Guide [37].

Third, can individual working memory capacity be reliably measured and used to predict clinical performance? The Westbrook study found that higher-capacity physicians were somewhat protected against interruption-induced errors. But working memory capacity is not a fixed trait. It fluctuates with arousal, motivation, fatigue, and stress. A physician who scores high on the OSPAN on a rested Monday morning may score poorly at 3 a.m. on Saturday. Using trait-level measures to predict state-level performance remains problematic.

Fourth, will artificial intelligence change the equation? Clinical decision support systems already offload some of the cognitive work. If AI can reliably flag diagnostic possibilities that the physician's working memory has dropped, the human-machine combination may perform better than either alone. But automation bias, the tendency to accept computer-generated suggestions uncritically, introduces its own category of error. The human must still engage working memory to evaluate the machine's output. Whether this reduces or merely redistributes cognitive load is an open question.

What This Means for Medical Students, Doctors, and Hospitals

The science converges on a single principle: clinical decision-making is constrained by a small, biological, externally vulnerable cognitive workspace. The clinicians who reason best are not those with the largest workspace. They are those who have organized their long-term knowledge so well, and structured their environment so carefully, that the workspace is rarely overwhelmed.

For students preparing for licensing exams, this means building illness scripts before doing question banks. Each script is a single chunk that replaces eight or ten individual facts in working memory. The student who has a tight script for diabetic ketoacidosis can hold the full differential for altered mental status with Kussmaul breathing in one mental slot. It also means treating sleep as a study tool, not a luxury. The Trockel data and the Westbrook data plausibly extend to high-stakes test performance, although neither study measured it.

For practicing physicians, it means externalizing before internalizing. Write the differential. Use the order set. Run the checklist. Working memory is not expanded by determination. It is expanded by ink. It also means defending protected cognitive time. The Westbrook data justify a sterile cockpit rule for prescribing and complex documentation: no interruptions, no multitasking.

For hospitals and training programs, it means designing systems that accommodate human cognitive limits rather than pretending those limits do not exist. Cluttered electronic health record screens, redundant alerts, and idiosyncratic local protocols are a tax on working memory that translates directly into error. The contrast between the WHO checklist trial (Haynes and colleagues) and the Ontario study (Urbach and colleagues) is the lesson: checklists save lives when integrated into workflow, not when mandated as paperwork.

The work of becoming a safe and excellent physician is, in large part, the work of respecting the four-item ceiling.

Conclusion

The brain that diagnoses disease is the same brain that George Miller measured in 1956 and that Nelson Cowan refined in 2001. Its central workspace holds three to five items. It runs on dopamine along an inverted-U curve. It degrades under stress, sleep loss, and interruption. It improves through schema construction, cognitive offloading, and environmental design.

None of this is new to cognitive scientists. But the medical profession has been slow to absorb it. Training programs still produce residents who work through the night. Hospitals still interrupt physicians nearly eight times per hour. Electronic health records still present information in ways that maximize, rather than minimize, extraneous cognitive load.

The evidence reviewed in this article suggests a different approach. Build the schemas early and maintain them with spaced retrieval. Offload the workspace deliberately and systematically. Protect the biological substrate with sleep, exercise, and stress management. Design clinical environments for the brain that exists, not the brain we wish we had.

Working memory in clinical decisions is not just a research topic. It is the invisible infrastructure of patient safety.

Frequently Asked Questions

What is working memory and how does it differ from short-term memory?

Working memory is a cognitive system that both stores and manipulates information temporarily. Short-term memory only stores information passively. Working memory includes an attention controller (central executive) that directs processing, making it the active workspace for reasoning, problem-solving, and clinical decision-making rather than simple information holding.

How many items can working memory hold at once?

Research by Nelson Cowan established that working memory can hold approximately 3 to 5 meaningful items when rehearsal and chunking strategies are blocked. George Miller's earlier estimate of 7 plus or minus 2 has been revised downward. Expert clinicians expand effective capacity through chunking, compressing multiple clinical features into single organized schemas.

Why do experienced doctors make fewer diagnostic errors than residents?

Experienced physicians build illness scripts, pre-compiled mental packages that compress many clinical features into single working memory chunks. fMRI studies show experts use less prefrontal cortex activation during clinical reasoning, meaning they rely less on working memory and more on efficient pattern recognition stored in long-term memory.

How do interruptions affect clinical decision-making?

A 2018 study of emergency physicians found that interruptions increased prescribing error rates by 2.82 times and multitasking increased errors by 1.86 times. Interruptions force working memory to switch contexts, dropping some information and potentially losing critical diagnostic details. Physicians averaged 7.9 interruptions per hour during observed shifts.

Can working memory capacity be improved?

Working memory capacity has a biological ceiling, but effective capacity can be increased through two strategies. First, building organized knowledge structures through deliberate practice and spaced retrieval compresses information into fewer chunks. Second, cognitive offloading through checklists, notes, and decision support tools moves items from the limited internal workspace to unlimited external storage.