Introduction

You changed your phone number three months ago. You know the new one. You can recite it on demand. But when you fill out a form without thinking, your pen writes the old one. It happens automatically, as if the old number never left. That is proactive interference, and it is one of the two forces responsible for most of the forgetting that happens in daily life [1].

Now consider the opposite. You have been using a new password for two weeks. Try to recall the old one. It is gone. The new password has somehow erased access to the old. That is retroactive interference. Together, proactive and retroactive interference account for more everyday forgetting than the passage of time ever could. For over a century, scientists have argued about this. They have run experiments on college students, on pond snails, on patients with damaged frontal lobes, and on fighter pilots switching cockpits. The conclusion keeps coming back to the same point: memories do not simply fade. They are murdered by other memories [2].

This is the story of how that discovery happened. It begins in a German university in 1900, passes through American psychology laboratories in the 1950s, enters the brain scanner in the 2000s, and reaches a 2019 experiment on a pond snail showing that interference can be traced to specific, identified neurons.

The Monograph That Started Everything

The history of interference theory has a precise beginning. Göttingen, Germany. The year 1900. Georg Elias Müller, at that time arguably the most influential experimental psychologist in Europe, published a 300-page monograph with his student Alfons Pilzecker. The title was dry. The content was revolutionary.

Müller and Pilzecker had subjects memorize paired nonsense syllables, then either rest quietly or study a second list. When a second list intervened, recall of the first list collapsed. They called this "Rückwirkende Hemmung," or retroactive inhibition [3]. Müller used the broader term "associative Hemmung" as a blanket label, and when this term crossed the Atlantic, it confused American researchers for decades because it blurred the distinction between what we now call proactive and retroactive interference.

But Müller and Pilzecker noticed something else. Even when the interpolated task was completely different from the original material, like describing landscape paintings after studying syllables, forgetting still increased. This was strange. If interference came from confusion between similar materials, why would landscape descriptions ruin syllable recall? The answer would not arrive for over a century, until a team in Edinburgh revived this exact observation in 2007 [4].

The monograph also introduced the concept of memory consolidation. Müller and Pilzecker proposed that newly formed memories need time to "set," like concrete drying. Anything that disrupts this setting process, whether similar or dissimilar, can weaken the memory. This idea, largely ignored for decades, turned out to be correct.

Seven years earlier, John A. Bergström had already conducted what is often credited as the first interference experiment. Working at Clark University in 1893, Bergström timed subjects sorting 80-card decks into piles. When he rearranged the pile locations for a second sort, sorting slowed dramatically. When the items themselves changed, almost no slowdown occurred [5]. Bergström wrote that the interference came from "still persisting associations," not from their decay. But his work was eclipsed by Müller's massive German monograph, and Bergström never got the credit he deserved.

Two Students Who Slept in a Laboratory

In the fall of 1924, two graduate students at Cornell University, known in the literature only as "H" and "Mc," agreed to live inside a psychology laboratory. Their advisor, Karl Dallenbach, along with his colleague John G. Jenkins, wanted to test a simple question: does sleep protect memories from forgetting?

H and Mc memorized lists of nonsense syllables at different times of day, then either stayed awake going about normal activities or went to sleep. They were tested after one, two, four, and eight hours [6].

The results were dramatic. After eight hours of wakefulness, recall dropped to about 10%. After eight hours of sleep, recall stayed near 60%. The graph of sleep versus waking forgetting became one of the most reproduced figures in the history of psychology.

Jenkins and Dallenbach concluded with a sentence that would echo for a century: "Forgetting is not so much a matter of the decay of old impressions and associations as it is a matter of the interference, inhibition, or obliteration of the old by the new." Sleep protects memories not because it does something magical, but because it removes the source of damage: waking experience. Fewer new memories means fewer collisions with old ones.

What does this mean for real life? If you study vocabulary and then watch television for two hours, you will likely remember less than if you study and then take a nap. On Jenkins and Dallenbach's account, the nap helps not because sleep strengthens memory (later research suggests it does, for separate reasons) but because television generates new input that interferes with what you just learned. The practical takeaway sat in a 1924 paper for decades before study advice caught up with it.

The Paper That Overturned Seventy Years of Decay Theory

For decades after Ebbinghaus, the dominant explanation for forgetting was decay. Memories fade over time like ink on paper. The forgetting curve that Hermann Ebbinghaus plotted in 1885 seemed to prove it: the longer you wait, the more you forget. Simple. Intuitive. Wrong.

In 1932, John A. McGeoch published a paper in Psychological Review that dismantled the decay idea with surgical precision. His argument: time itself does nothing. What happens during time does everything. "Ascription of effectiveness to time violates the usage of science and is logically meaningless," he wrote. Time is a container, not a cause. You do not say that iron rusts because time passes. You say it rusts because of oxygen, moisture, and electrochemistry. McGeoch said forgetting works the same way: it is caused by specific events, not by the clock [2].

But the decisive blow came twenty-five years later. In 1957, Benton J. Underwood at Northwestern University reanalyzed published data from sixteen different forgetting experiments. He noticed something nobody had seen. Across studies, the more lists subjects had previously learned in the laboratory, the more they forgot. Underwood plotted the relationship: subjects with no prior lists forgot only about 25% over 24 hours. Subjects who had previously learned twenty or more lists forgot about 75% [1].

The Ebbinghaus curve was not showing pure decay. It was showing proactive interference from earlier experimental sessions. Ebbinghaus, who served as his own sole subject, had learned and relearned hundreds of syllable lists over months. His steep forgetting curve reflected the accumulated weight of all that prior learning pressing down on each new list. When prior learning was stripped away, forgetting shrank dramatically.

Underwood's twelve-page paper transformed memory science overnight. Decay theory did not vanish entirely, and some researchers still argue for a time-based component. But from 1957 onward, interference replaced decay as the leading explanation for why people forget.

Unlearning: The Experiment That Split the Field

If old memories interfere with new ones (proactive interference) and new memories interfere with old ones (retroactive interference), a natural question arises: what exactly happens to the losing memory? Is it still there, just blocked? Or is it genuinely weakened?

In 1959, Jean M. Barnes and Benton Underwood designed an experiment to find out [7]. They used the standard AB-AC paradigm: subjects first learned a list of paired words (DAX-rabbit, JIK-tree), then learned a second list with the same cues but new responses (DAX-cloud, JIK-river). Then came the clever part. Instead of testing only one list, they gave subjects the A cues and asked them to recall both B and C responses, with unlimited time. No competition. No pressure to choose. Just produce whatever you can remember.

They called this the Modified Modified Free Recall, or MMFR. The logic was clean: if forgetting is purely a retrieval competition, then removing the competition should restore access to both memories. But if the first memory has been genuinely weakened by learning the second list, then even with unlimited time, B recall should decline.

B recall declined. As subjects practiced the A-C list more, their ability to produce B responses dropped, even with unlimited time and explicit permission to give both responses. Barnes and Underwood concluded that retroactive interference involves true "unlearning," not just retrieval failure. The first memory is actually degraded by learning the second.

This finding split the field. In 1968, Leo Postman, Kathy Stark, and Jean Fraser proposed an alternative: response-set suppression [2]. Perhaps subjects were not losing the B associations. Perhaps they were suppressing the entire B response set because the A-C task demanded it. The debate between true unlearning and retrieval failure continues to this day, though modern research suggests that both mechanisms may operate together.

The Wickens Release: Watching Interference Vanish

One of the most elegant demonstrations in memory science came from Delos Wickens in 1970. Wickens used the Brown-Peterson paradigm: subjects memorized three words, counted backward for several seconds to prevent rehearsal, then tried to recall. Simple enough. But Wickens added a twist [8].

On trial one, subjects recalled three words from a category, say professions: lawyer, doctor, teacher. Performance was high. On trial two, three more professions. Performance dropped. Trial three: more professions. Performance dropped further. This was textbook proactive interference building up across trials. The old words were interfering with the new ones.

Then, on trial four, Wickens changed the category. Fruits: apple, banana, grape. Recall shot back up to near trial-one levels. He called this "release from proactive interference," and it became one of the most powerful tools in cognitive psychology.

What made it powerful was not just the effect itself, but what it revealed about how memories are organized. Wickens showed release for taxonomic categories, for the shift between numbers and letters, for changes in abstractness, for masculine versus feminine connotations, and even for switches between languages [9]. The magnitude of release indexed the "categorical distance" between the old and new materials. The bigger the shift, the bigger the release. This suggested that memory encodes items along multiple dimensions simultaneously, and that interference operates within each dimension.

Two years later, John Gardiner, Fergus Craik, and Janet Birtwistle at the University of Toronto ran the most ingenious version of this experiment. Across four trials, subjects studied trios of items: the first three trios came from one category (garden flowers), and on trial four the items shifted to a related subcategory (wild flowers). The critical manipulation: some subjects learned about the category switch at study, but others were told only at the moment of testing. Release from proactive interference (PI) was equally strong in both conditions [9]. This showed that release is a retrieval phenomenon, not an encoding one. The brain stores the information either way. The difference is in how it searches for it.

What does this mean for studying? If you spend three hours memorizing anatomy terms and feel your recall collapsing, switching to biochemistry for thirty minutes may "release" some of that buildup, so anatomy feels fresher when you return to it. Wickens's trials lasted seconds rather than hours, so this is an extrapolation, but the logic is the same: strategic category switching counters proactive interference because the new material no longer competes for the same retrieval cues as the old.

The Brain Region That Fights Memory Collisions

For decades, interference research was purely behavioral. Scientists measured what subjects remembered and forgot, but could not look inside the brain to see what was happening. That changed with studies of brain-damaged patients and, later, neuroimaging.

In 1995, Arthur Shimamura and his colleagues at the University of California, Berkeley, tested patients with frontal lobe damage on the classic AB-AC paradigm. These patients showed disproportionate impairment specifically on the first trial of the second list, exactly when proactive interference is strongest [10]. Their memory for individual lists was relatively normal. But when two similar lists collided, they could not sort out which was which.

Around the same time, Mary Lou Smith and Brenda Milner at the Montreal Neurological Institute found that frontal-lobe patients, but not temporal-lobe patients, showed exaggerated PI buildup on a spatial version of the Wickens task [11]. The frontal lobes were not storing memories. They were policing the border between them.

The critical breakthrough came from David Badre and Anthony Wagner at Stanford. In 2005, they used fMRI while subjects performed the Recent Probes task, a paradigm in which subjects must reject a probe item that appeared on the previous trial's list but not on the current one. The scans pinpointed interference resolution to the left ventrolateral prefrontal cortex, or VLPFC [12]. Specifically, they parsed the VLPFC into two subregions: an anterior part (Brodmann area 47) that supports controlled retrieval of stored representations, and a mid-VLPFC part (Brodmann area 45) that handles post-retrieval selection among competitors. When multiple memories are activated by the same cue, BA 45 helps decide which one is currently relevant and suppresses the others.

John Jonides and Derek Nee at the University of Michigan confirmed this in a 2006 review that synthesized dozens of imaging studies [13]. Left inferior frontal cortex activation appeared consistently whenever subjects needed to reject a familiar but currently irrelevant memory. The convergence was striking: lesion studies, fMRI, and even transcranial magnetic stimulation (TMS) all pointed to the same region. In 2006, Eva Feredoes, Giulio Tononi, and Bradley Postle applied brief TMS pulses to the left inferior frontal gyrus (IFG) during the critical probe-evaluation window, and interference resolution broke down. Because TMS disrupts activity rather than merely recording it, this supplied the causal evidence that imaging alone could not [2].

Deeper Than the Cortex: Hippocampus, GABA, and Pond Snails

The prefrontal cortex resolves interference at the level of decision-making. But what protects memories at the level of storage?

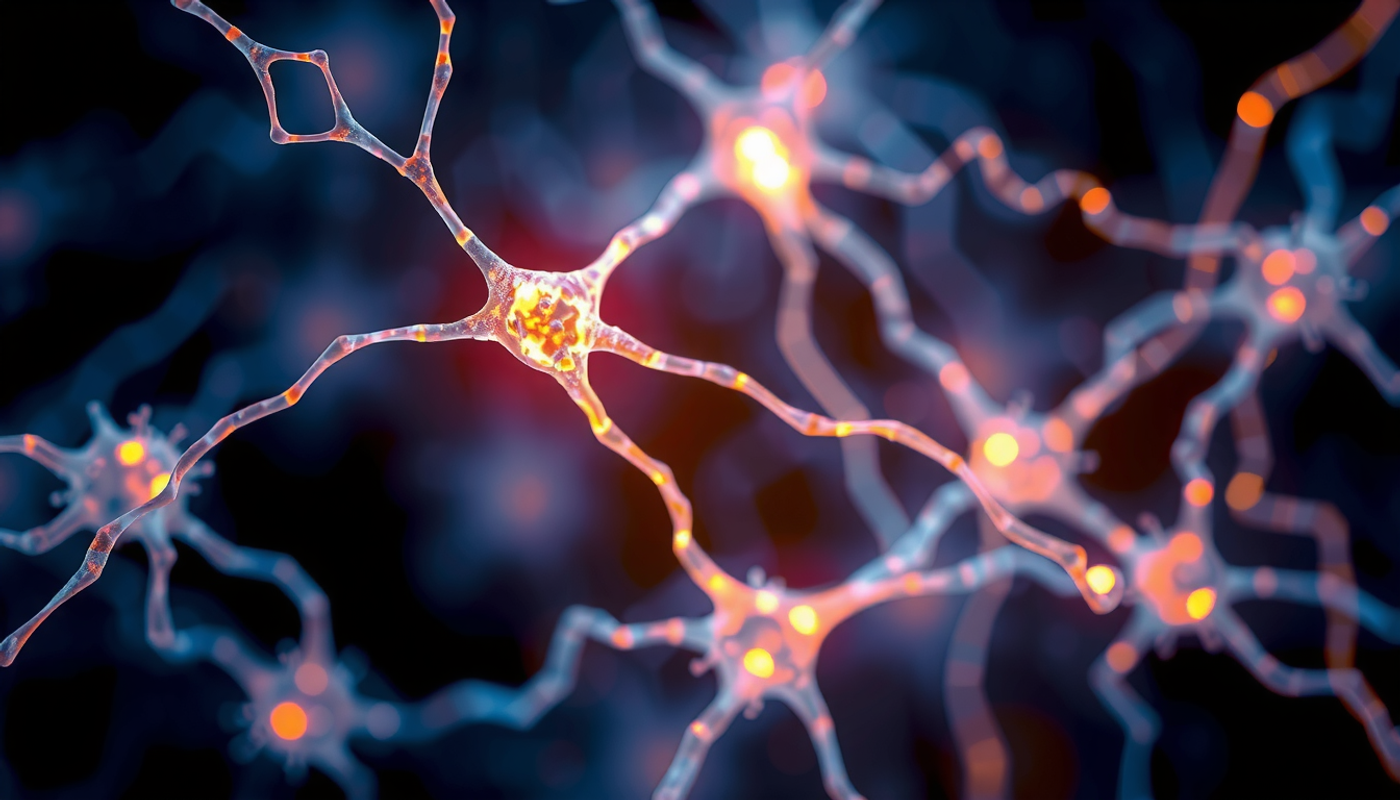

The hippocampus, a seahorse-shaped structure deep in the temporal lobe that acts as the brain's memory construction site, has a built-in anti-interference mechanism called pattern separation. In the dentate gyrus, a subregion of the hippocampus, similar incoming experiences are transformed into distinct neural codes. Think of it as giving two nearly identical suitcases different-colored tags at an airport. Without the tags, they would be confused. With them, they stay separate [14].
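Computational models often idealize dentate gyrus pattern separation as expansion recoding: project an input onto many more units, then let only the few most strongly driven units stay active. The sketch below assumes that idealization, with random Gaussian weights and a k-winners-take-all rule. The layer sizes and the names `make_projection`, `k_winners`, and `overlap` are hypothetical choices for illustration, not a model of real hippocampal anatomy.

```python
import random

def make_projection(n_in, n_out, seed=0):
    """Hypothetical random feedforward weights from an input layer to a larger output layer."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(n_in)] for _ in range(n_out)]

def k_winners(weights, active_inputs, k):
    """Return the indices of the k output units most strongly driven by the active inputs."""
    drive = [sum(row[i] for i in active_inputs) for row in weights]
    ranked = sorted(range(len(drive)), key=lambda j: drive[j], reverse=True)
    return set(ranked[:k])

def overlap(a, b):
    """Fraction of the active units in a that are also active in b (equal-sized codes assumed)."""
    return len(a & b) / len(a)

# Two similar "experiences": 20 active input units each, 16 of them shared.
pattern_a = set(range(0, 20))
pattern_b = set(range(4, 24))

weights = make_projection(n_in=100, n_out=2000)
code_a = k_winners(weights, pattern_a, k=40)
code_b = k_winners(weights, pattern_b, k=40)

print("input overlap: ", overlap(pattern_a, pattern_b))   # 0.8
print("output overlap:", overlap(code_a, code_b))
```

With a sparse, expanded code like this, the output overlap usually comes out lower than the 0.8 input overlap, so the two similar inputs end up with more distinct "tags." The exact value depends on the random weights, which is why the sketch prints it rather than stating it.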

But pattern separation is only half the story. In 2019, a team at Oxford led by Renée Koolschijn used 7-Tesla magnetic resonance spectroscopy to measure GABA, the brain's primary inhibitory neurotransmitter, in the occipitotemporal cortex, and transcranial direct current stimulation (tDCS) to manipulate it. They found that higher GABA concentrations predicted lower behavioral interference on a memory task. When they used tDCS to reduce GABA levels in that region, interference increased [15]. This was direct evidence that the brain uses chemical inhibition, at the level of local cortical circuits, to keep memories from bleeding into each other.

But perhaps the most remarkable interference experiment of recent years came not from a brain scanner but from a pond. Michael Crossley and colleagues at the University of Sussex studied the snail Lymnaea stagnalis, an animal with a nervous system simple enough that individual neurons can be identified and tracked. They trained snails on two consecutive associative learning tasks and asked a question no one had answered before: when two memories compete for consolidation, what determines which one survives [16]?

The answer was both timing and circuit overlap. When the second learning task occurred during a stable period of the first memory (after the initial consolidation window had closed), proactive interference happened only if both tasks used the same neural circuit, specifically the same cerebral giant cells. If the tasks used different circuits, both memories survived without interference. But when the second task occurred during a labile period of consolidation, about two hours after the first task, retroactive interference dominated. The new memory erased the old one regardless of circuit overlap.

This finding from a pond snail illuminated something that human studies cannot easily isolate: interference is not only a retrieval problem at the cognitive level. It can also be a physical competition between neural circuits for consolidation resources. The snail's simplicity made visible what is hidden inside the complexity of the human brain.

Interference in the Real World: Eyewitnesses, Languages, and Aging Brains

Interference theory would be merely academic if it stayed in the laboratory. It does not.

In 1978, Elizabeth Loftus, David Miller, and Helen Burns at the University of Washington ran an experiment that changed how courts evaluate eyewitness testimony. Subjects watched a slideshow of a traffic accident involving a red Datsun at a stop sign. Later, a questionnaire contained a misleading question that mentioned a yield sign instead. On a subsequent forced-choice test, subjects who received the misleading information chose the yield sign significantly more often than control subjects [17]. New information (yield sign) had retroactively interfered with the original memory (stop sign). The misinformation effect, as Loftus called it, is textbook retroactive interference applied to the courtroom.

Language learning is another arena where interference is constant. When a native English speaker learns Spanish, their English vocabulary does not simply sit quietly in a separate compartment. It actively competes with Spanish during production. Bilinguals experience proactive interference when first-language (L1) words intrude during second-language (L2) speech, and retroactive interference when heavy L2 immersion weakens L1 access [18]. This helps explain why expatriates who spend years speaking a second language often struggle to find native-language words when they return home. The new language has retroactively interfered with access to the old one.

Motor skills show the same pattern. When a tennis player switches to squash, the deeply grooved motor programs for tennis strokes interfere with learning squash strokes. This is proactive motor interference. Conversely, after months of squash practice, returning to tennis feels awkward because the squash patterns now retroactively interfere with the tennis ones. Battig coined the related term "contextual interference" in 1966 for the interference that arises when several tasks are practiced in a mixed order, and Shea and Morgan showed in 1979 that interleaved (random) practice, which maximizes interference during acquisition, produces better long-term retention and transfer than blocked practice [19]. The interference itself becomes a training signal.
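Blocked and interleaved practice differ only in trial order, which a short scheduling sketch makes concrete. Counting task switches is used here as a rough proxy for how much contextual interference a schedule creates; that proxy, the placeholder task names, and the helper functions are illustrative assumptions, not Shea and Morgan's actual procedure.

```python
import random

def blocked_schedule(tasks, reps):
    """All repetitions of one task before moving on to the next (e.g. AAA BBB CCC)."""
    return [task for task in tasks for _ in range(reps)]

def random_schedule(tasks, reps, seed=0):
    """The same trials as the blocked schedule, shuffled into a random order."""
    trials = blocked_schedule(tasks, reps)
    random.Random(seed).shuffle(trials)
    return trials

def task_switches(schedule):
    """Number of consecutive trial pairs where the task changes."""
    return sum(1 for prev, cur in zip(schedule, schedule[1:]) if prev != cur)

tasks = ["task_a", "task_b", "task_c"]   # hypothetical skills, e.g. three different strokes
blocked = blocked_schedule(tasks, reps=6)
mixed = random_schedule(tasks, reps=6)

print(task_switches(blocked))   # 2: one switch at each block boundary
print(task_switches(mixed))     # usually far more
```

Both schedules contain exactly the same trials. Only the order, and with it the amount of switching between competing motor programs, changes.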

Aging adds another dimension. Lynn Hasher and Rose Zacks proposed in 1988 that older adults are differentially vulnerable to interference because their inhibitory control weakens with age [20]. Cynthia Lustig, Cindy May, and Hasher tested this directly in 2001. They measured working-memory span using the standard operation span task but manipulated the order in which list lengths were presented. When list lengths descended from long to short, reducing the buildup of proactive interference, older adults' span scores increased significantly. Reducing interference literally raised measured cognitive capacity. This suggested that much of what looks like age-related memory decline may actually be age-related decline in interference resolution.

However, a more recent study by Kim Archambeau and colleagues in 2020 challenged this interpretation. Using the drift diffusion model, a computational framework that decomposes reaction times into distinct cognitive processes, they reanalyzed PI data from older and younger adults. Their conclusion: the apparent age-related deficit in interference control was largely driven by slower encoding and motor execution, not by a failure of the inhibitory process itself [21]. The inhibitory "engine" may be intact. The inputs and outputs are just slower. This debate remains unresolved.

In clinical populations, the picture is even more dramatic. Patients with traumatic brain injury paradoxically show less proactive interference on standardized memory tests, which researchers interpret as a failure to consolidate enough for old memories to interfere with new ones [22]. Adults with ADHD show reduced neural markers of interference resolution, specifically a diminished N200 component in event-related potential (ERP) recordings during working-memory tasks [23]. And in early Alzheimer's disease, exaggerated sensitivity to semantic interference on the Loewenstein-Acevedo Scales for Semantic Interference and Learning (LASSI-L) has been identified as an early cognitive marker that distinguishes mild cognitive impairment from normal aging with approximately 90% accuracy [24].

How Other Memory Strategies Fight Back Against Interference

If interference is the enemy, the brain has allies. Several well-known learning strategies turn out to be, at their core, anti-interference mechanisms.

The spacing effect is the oldest. Distributing practice over time produces better long-term retention than massing it into a single session [25]. One proposed reason spacing works is that it reduces the mutual interference between study episodes. When you study the same material at 9 AM and again at 3 PM, each session occurs in a different temporal context. The distinct contexts, consistent with what memory researchers call the encoding specificity principle, help the brain file the two episodes separately rather than letting them collide. Massed practice, by contrast, creates nearly identical context for every repetition, increasing the chance that newer repetitions overwrite earlier ones.

The testing effect, the benefit produced by retrieval practice, offers a different kind of protection. When you test yourself on material rather than simply restudying it, you remember more on a delayed test. Karl Szpunar, Kathleen McDermott, and Henry Roediger demonstrated in 2008 that interpolated tests between study lists dramatically reduce proactive interference buildup [26]. This benefit of earlier tests for later learning is now widely known as the "forward testing effect." Testing seems to act like a mental bookmark, signaling to the brain that one episode is complete and the next is beginning. This helps the brain segregate lists in memory and reduces the intrusion of earlier material into later recall.

Karl-Heinz Bäuml and Oliver Kliegl at the University of Regensburg showed in 2013 that interpolated tests reduce response latencies for subsequent lists, which suggests that testing improves the speed of contextual list discrimination, not just accuracy [2]. However, a recent study by Lyu and McDermott raised the possibility that reduced PI is a byproduct of testing rather than the source of its benefit: testing may aid learning even when PI is minimized, through mechanisms unrelated to interference.

Sleep consolidation is the third major defense. Building on Jenkins and Dallenbach's 1924 finding, Jeffrey Ellenbogen and colleagues at Harvard showed in 2006 that sleep specifically protects memories from retroactive interference introduced after the retention interval [27]. Subjects who slept between learning and interference testing were less susceptible to new material disrupting old. Sleep appears to move memories from a hippocampus-dependent, interference-vulnerable state to a neocortex-dependent, interference-resistant state. This process is what Susanne Diekelmann and Jan Born described as "active system consolidation" in their influential 2010 review [2].

Computational Models: How Machines Simulate Memory Collisions

The story of interference has a parallel track in computational modeling. Theorists have built mathematical models of memory in which interference emerges from the model's basic machinery rather than being bolted on as a separate assumption.

The first major model was SAM, the Search of Associative Memory, developed by Jeroen Raaijmakers and Richard Shiffrin in 1981 [28]. In SAM, memories are stored as associations among items and between items and the study context. Recall involves sampling memories probabilistically based on the strength of their associations with the current retrieval cues. Interference emerges naturally: when multiple memories share the same cue, they compete for sampling. Stronger or more recent traces win, and weaker traces are less likely to be sampled. Gerard Mensink and Raaijmakers extended SAM in 1988, letting context fluctuate gradually over time, to account for a full range of interference phenomena including spontaneous recovery, MMFR, and the time course of forgetting [2].
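SAM's sampling rule fits in a few lines. In its standard formulation, the probability of sampling an item is the product of its cue-to-item strengths, each raised to a cue weight, divided by the same quantity summed over all items; a sampled item is then recovered with probability one minus the exponential of its weighted strength sum. The sketch below assumes that form with a single cue and invented strength values, and it leaves out SAM's search loop, context fluctuation, and learning rules. The function names are hypothetical.

```python
import math

def sampling_probabilities(strengths, weights):
    """
    strengths: {item: [S(cue_1, item), S(cue_2, item), ...]}
    weights:   [w_1, w_2, ...], one weight per cue
    Returns P(sample item | cues): the product of S**w over cues, normalized across items.
    """
    scores = {
        item: math.prod(s ** w for s, w in zip(cue_strengths, weights))
        for item, cue_strengths in strengths.items()
    }
    total = sum(scores.values())
    return {item: score / total for item, score in scores.items()}

def recovery_probability(cue_strengths, weights):
    """P(recover a sampled item) = 1 - exp(-sum of w * S over cues)."""
    return 1.0 - math.exp(-sum(w * s for s, w in zip(cue_strengths, weights)))

# AB-AC example with one cue (stimulus A). Strength values are invented for illustration.
weights = [1.0]
after_little_ac = {"B": [0.6], "C": [1.8]}
after_much_ac = {"B": [0.6], "C": [3.0]}

print(sampling_probabilities(after_little_ac, weights)["B"])   # about 0.25
print(sampling_probabilities(after_much_ac, weights)["B"])     # about 0.167
print(recovery_probability([0.6], weights))                    # depends only on B's own strength
```

B's own strength never changes in this toy example; strengthening C alone makes B less likely to be sampled. That is the pure retrieval-competition reading of retroactive interference that Barnes and Underwood's MMFR test was designed to probe.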

Bennet Murdock took a different approach with TODAM, the Theory of Distributed Associative Memory, in 1982 [29]. In TODAM, all memories are stored in a single high-dimensional vector through convolution-correlation operations. Each new memory is added to the same composite vector, and retrieval involves correlating the cue with the composite. Interference occurs because the composite contains "noise" from every other stored item. The more items stored, the noisier the retrieval signal. TODAM elegantly predicted many interference effects but struggled with list-strength phenomena, generating a productive debate with Shiffrin's group in the early 1990s [30].
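The convolution-correlation idea behind TODAM can be sketched with NumPy. This version uses circular convolution computed with the FFT, a common simplification of the convolution used in TODAM itself, plus random Gaussian item vectors and hypothetical helper names. It shows only how a single composite trace accumulates noise, not TODAM's full item and associative machinery.

```python
import numpy as np

N = 1024                        # vector dimensionality (arbitrary choice)
rng = np.random.default_rng(0)

def random_item():
    """Item vector with i.i.d. Gaussian elements of variance 1/N, so its length is close to 1."""
    return rng.normal(0.0, 1.0 / np.sqrt(N), N)

def convolve(a, b):
    """Circular convolution: binds a and b into one associative trace."""
    return np.real(np.fft.ifft(np.fft.fft(a) * np.fft.fft(b)))

def correlate(cue, memory):
    """Circular correlation: probes the composite memory with a cue (approximate inverse of convolve)."""
    return np.real(np.fft.ifft(np.conj(np.fft.fft(cue)) * np.fft.fft(memory)))

def cosine(x, y):
    return float(x @ y / (np.linalg.norm(x) * np.linalg.norm(y)))

def retrieve_target(n_other_pairs):
    """Store A-B plus n_other_pairs unrelated pairs in ONE vector, then cue with A."""
    a, b = random_item(), random_item()
    memory = convolve(a, b)
    for _ in range(n_other_pairs):
        memory = memory + convolve(random_item(), random_item())
    retrieved = correlate(a, memory)
    return cosine(retrieved, b), cosine(retrieved, random_item())

target_alone, foil_alone = retrieve_target(0)
target_crowded, _ = retrieve_target(50)
print(target_alone, foil_alone)   # target well above the unrelated foil
print(target_crowded)             # lower: every other stored pair adds noise
```

With these settings the target similarity typically lands near 0.7 for a single stored pair and drops sharply once dozens of other pairs share the vector, which is the sense in which every stored item contributes noise to every retrieval.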

The most influential modern framework is the Temporal Context Model developed by Marc Howard and Michael Kahana in 2002 [31]. TCM represents each memory as bound to a slowly drifting internal context. When you try to recall something, you reinstate the context that was active when you encoded it. Items that share similar contexts are more likely to be co-retrieved, which explains contiguity effects. But shared context also means shared retrieval cues, which means competition, which means interference. The later CMR (Context Maintenance and Retrieval) extension by Sean Polyn, Kenneth Norman, and Kahana in 2009 incorporated semantic and source contexts, providing a unified account of free recall, list discrimination, and interference.
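The context drift at the heart of TCM can be sketched directly. The update below follows the model's usual form, t_i = ρ·t_(i-1) + β·t_IN, with ρ chosen so the context vector keeps unit length. Representing each studied item as its own orthogonal input dimension, and the names `drift` and `study_list`, are simplifying assumptions; retrieval itself is omitted.

```python
import math

def drift(context, item_input, beta):
    """One TCM-style update: rho * context + beta * item_input, with rho keeping unit length.
    Assumes context and item_input are both unit vectors."""
    dot = sum(c * x for c, x in zip(context, item_input))
    rho = math.sqrt(1.0 + beta ** 2 * (dot ** 2 - 1.0)) - beta * dot
    return [rho * c + beta * x for c, x in zip(context, item_input)]

def study_list(n_items, beta):
    """Return the context vector after each studied item. Dimension 0 holds the start-of-list
    context; item i is a hypothetical one-hot input in dimension i, so all inputs are orthogonal."""
    dim = n_items + 1
    context = [1.0] + [0.0] * n_items
    states = []
    for i in range(1, n_items + 1):
        item_input = [0.0] * dim
        item_input[i] = 1.0
        context = drift(context, item_input, beta)
        states.append(context)
    return states

def similarity(u, v):
    return sum(a * b for a, b in zip(u, v))

states = study_list(n_items=20, beta=0.5)
print(similarity(states[4], states[5]))   # neighbors: sqrt(0.75), about 0.866
print(similarity(states[4], states[8]))   # four items apart: about 0.5625
```

Because the context never resets, items studied close together, including the last items of one list and the first items of the next, end up with overlapping contexts. In TCM that overlap is what makes neighboring items good cues for each other, and it is also what lets one list's items compete with another's at retrieval.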

Archambeau and colleagues brought a different modeling tool to interference in 2020: the drift diffusion model. By decomposing reaction-time distributions into drift rate (evidence accumulation), boundary separation (caution), and non-decision time (encoding plus motor execution), they showed that what appears as a large interference effect in older adults' raw data largely reflects slower peripherals, not a slower central interference-resolution process [21]. The DDM approach illustrates how computational modeling can challenge long-held interpretations of behavioral data.
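A basic drift diffusion simulation shows how the three parameters named above map onto response times. The sketch assumes an unbiased starting point, a noise scale of 1, simple Euler steps, and arbitrary parameter values. It only simulates the model; it does not fit it to data the way Archambeau and colleagues did, and the names `simulate_ddm` and `mean_rt` are hypothetical.

```python
import math
import random

def simulate_ddm(drift_rate, boundary, non_decision, n_trials, dt=0.001, noise=1.0, seed=0):
    """Simulate (response_time, choice) pairs from a basic drift diffusion model.

    Evidence starts halfway between 0 and `boundary` and moves by drift_rate * dt plus
    Gaussian noise each step until it crosses a boundary. choice is 1 for the upper
    boundary, 0 for the lower one. Non-decision time is added to every decision time.
    """
    rng = random.Random(seed)
    step_sd = noise * math.sqrt(dt)
    trials = []
    for _ in range(n_trials):
        evidence, t = boundary / 2.0, 0.0
        while 0.0 < evidence < boundary:
            evidence += drift_rate * dt + step_sd * rng.gauss(0.0, 1.0)
            t += dt
        trials.append((t + non_decision, 1 if evidence >= boundary else 0))
    return trials

def mean_rt(trials):
    return sum(rt for rt, _ in trials) / len(trials)

# Same drift and boundary, slower "peripherals": only non-decision time differs.
young = simulate_ddm(drift_rate=2.0, boundary=1.0, non_decision=0.30, n_trials=1000)
older = simulate_ddm(drift_rate=2.0, boundary=1.0, non_decision=0.50, n_trials=1000)

print(mean_rt(older) - mean_rt(young))               # about 0.2: all of it is non-decision time
print(sum(choice for _, choice in young) / 1000)     # proportion of upper-boundary responses
```

Raw mean response times differ between the two simulated groups even though drift rate, the parameter closest to interference resolution in this framing, is identical. That is the kind of confound a model-based analysis is designed to separate.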

Where the Two Forces Meet: An Asymmetry Nobody Expected

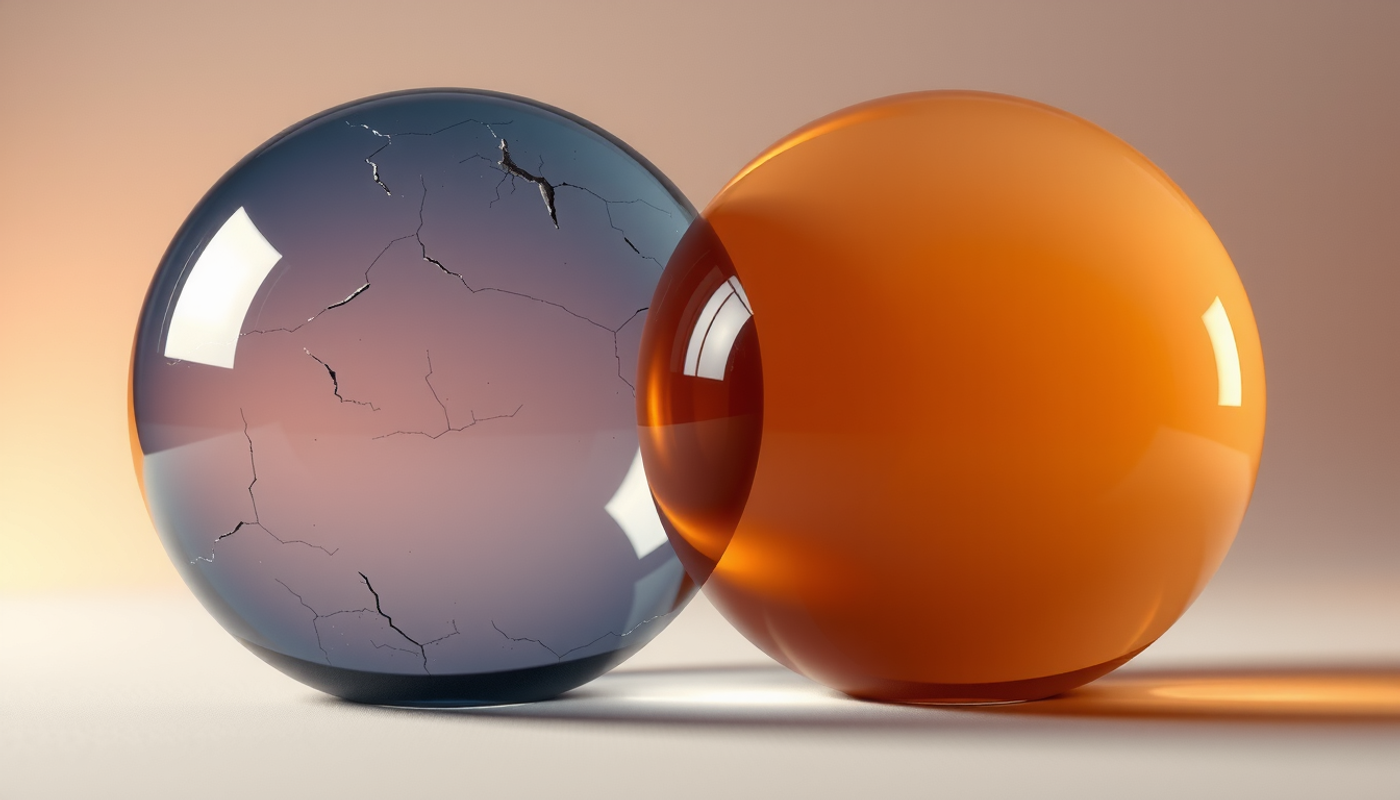

For most of the field's history, proactive and retroactive interference were treated as mirror images of the same process, differing only in direction. Old blocks new. New blocks old. Same mechanism, different timeline.

Recent research suggests this symmetry is an illusion. In 2025, Yahui Zhang, Weihai Tang, and colleagues at Tianjin Normal University used a non-competitive two-alternative forced-choice recognition test, removing explicit retrieval competition entirely, and found a striking asymmetry [32]. Retroactive interference manifested as reduced accuracy for the older A-B pairs. Proactive interference, however, manifested as slower reaction times for the newer A-C pairs without any accuracy loss. Same paradigm, same materials, but two qualitatively different effects.

This suggests that retroactive interference damages the original memory representation itself, possibly through encoding-based overwriting during the second learning episode. Proactive interference, by contrast, creates a retrieval-time selection problem: both traces are intact, but the older one slows down access to the newer one because it demands to be considered and then rejected.

The practical implication is striking. When you learn something new and it interferes with something old (retroactive), the old memory may be genuinely degraded. But when something old interferes with something new (proactive), the new memory may be perfectly intact. You just need more time and effort to get to it. The old information is not destroying the new one. It is merely standing in the way.

What It All Means

A century of research on proactive interference vs retroactive interference has produced a conclusion both simple and profound. Forgetting is not passive. It is not entropy. It is not the slow dissolution of memory traces in the acid bath of time. Forgetting is an active process driven by collisions between memories that share retrieval cues, encoding contexts, or neural circuits [33].

Every time you learn a new password, the old one gets harder to reach. Every time you park in a new spot, the old spot fades. Every time a witness hears a misleading detail about an accident, the original memory shifts. And every time a medical student learns a new drug interaction, it presses against the thousand interactions already stored.

The brain is not defenseless. It has the prefrontal cortex to adjudicate between competitors, the hippocampus to separate similar patterns, GABA to chemically insulate neighboring traces, sleep to consolidate memories into interference-resistant form, and retrieval practice to create mental bookmarks between episodes. But these defenses have limits. And understanding those limits, understanding exactly how proactive and retroactive interference operate, is the first step toward learning more effectively.

The irony is beautiful: the same mechanism that causes forgetting is also the mechanism that makes learning possible. Without the ability to overwrite old habits with new ones (retroactive interference), you could never adapt. Without the persistence of old knowledge pressing against new input (proactive interference), you would have no stable foundation of expertise. Interference is not a bug. It is the price of a memory system designed not for perfect recording, but for flexible survival in a changing world.

Frequently Asked Questions

What is the difference between proactive and retroactive interference?

Proactive interference occurs when older memories disrupt the recall of newer information. Retroactive interference is the opposite: newly learned information disrupts the recall of older memories. Both forms involve competition between memory traces that share similar retrieval cues, and research since Underwood's 1957 analysis suggests they account for most everyday forgetting.

Which is more common, proactive or retroactive interference?

Retroactive interference dominated early laboratory research, beginning with Müller and Pilzecker's 1900 monograph, and was long treated as the main form. However, Benton Underwood's 1957 analysis showed that proactive interference from prior learning sessions accounted for the majority of long-term forgetting in verbal learning experiments, so both forms matter.

How can you reduce proactive and retroactive interference when studying?

Three evidence-based strategies reduce interference: spacing practice sessions apart in time to create distinct temporal contexts, using retrieval practice (self-testing) between study blocks to create mental boundaries, and sleeping after learning to consolidate memories into interference-resistant form. Switching between dissimilar subject categories can also release accumulated proactive interference.

Does proactive interference get worse with age?

Behavioral data consistently shows older adults are more susceptible to proactive interference. Hasher and Zacks attributed this to declining inhibitory control. However, Archambeau and colleagues (2020) used computational modeling to argue that the inhibitory mechanism itself may remain intact, with the apparent deficit driven by slower encoding and motor processes rather than weakened interference resolution.

Can interference actually help learning?

Yes. Contextual interference, or interleaving, occurs when related but distinct tasks are mixed during practice rather than blocked. This increases errors during acquisition but produces better long-term retention and transfer. The interference itself acts as a training signal that forces the brain to develop stronger and more distinct memory representations.