Introduction

In a quiet apartment in Berlin, sometime around 1879, a thirty-year-old man sat alone at a table with a metronome, a notebook, and a list of meaningless syllables. No laboratory. No assistants. No funding. Just a man trying to prove that the most elusive thing about the human mind could be caught, pinned down, and graphed on paper. His name was Hermann Ebbinghaus. And what he found would reshape how the world thinks about learning, memory, and forgetting [1].

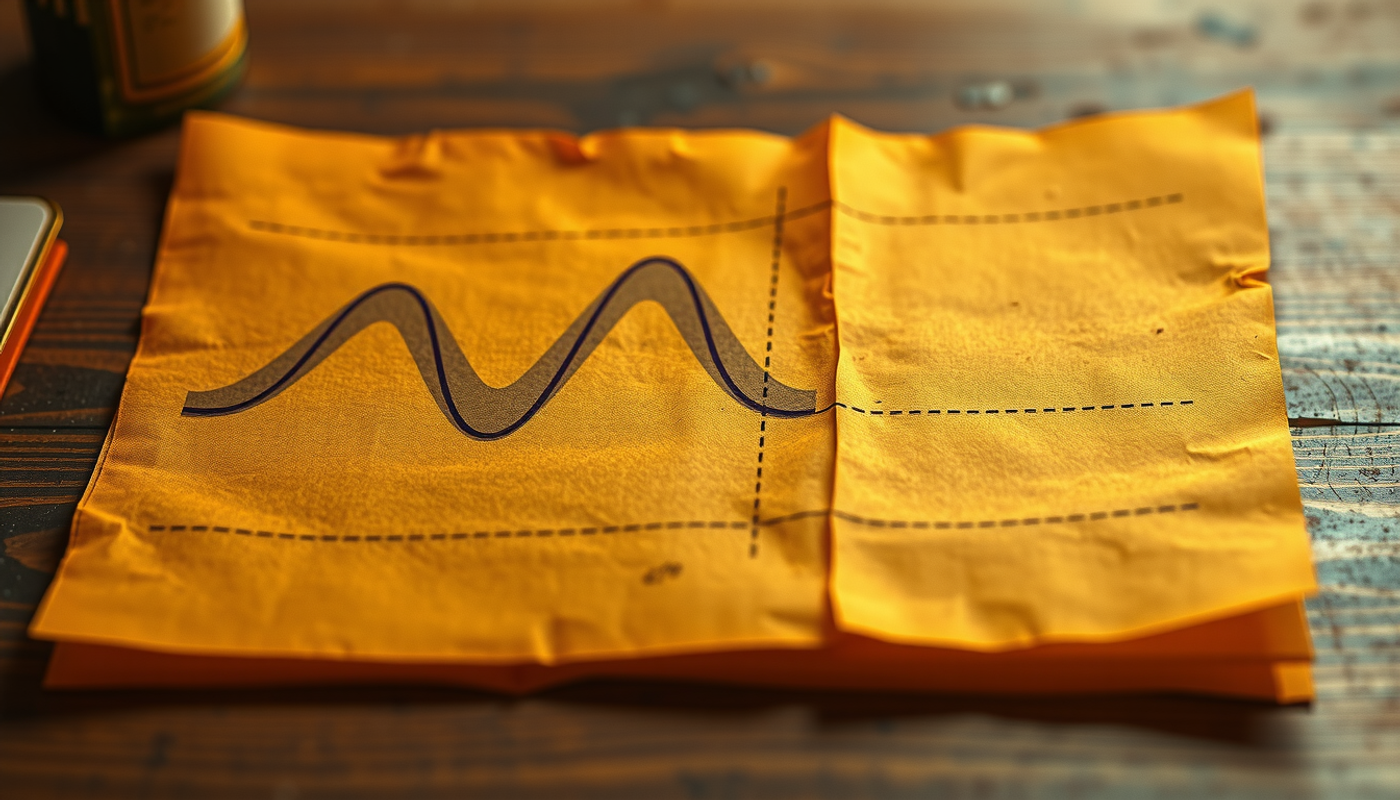

The forgetting curve is, at first glance, a simple idea. Learn something new. Wait. Measure how much you still remember. Plot it. The result is a line that drops fast and then flattens. Most of what you learned vanishes within hours. What survives the first day has a fighting chance of lasting weeks. It sounds obvious now. But before Ebbinghaus, nobody had ever demonstrated this with data. Memory was philosophy. He turned it into science.

This article tells the story of that transformation. It traces the forgetting curve from Ebbinghaus's apartment to modern neuroscience laboratories where researchers can now watch individual synapses weaken and molecular machines actively erase memories. It asks why forgetting happens, whether it is a flaw or a feature, and what 140 years of research have revealed about the shape of the curve and the biology behind it [2].

The Man Who Measured the Unmeasurable

Hermann Ebbinghaus was born on January 24, 1850, in Barmen, a small industrial town in the Rhine Province of Prussia. His father was a merchant. Nothing in his early life suggested he would become one of the most important psychologists in history. He studied history and philology at the University of Bonn, served briefly in the Franco-Prussian War, and returned to finish a doctorate in philosophy in 1873, at age twenty-three [3].

Then he disappeared into private tutoring for several years, wandering between England and France. And somewhere during those wandering years, in a secondhand bookshop in Paris, he stumbled onto a copy of Gustav Theodor Fechner's *Elemente der Psychophysik* [4].

Fechner had done something that seemed impossible. He had taken sensation, the subjective experience of brightness or weight or pitch, and turned it into numbers. The Weber-Fechner law showed that the relationship between physical stimulus and perceived intensity follows a mathematical function. Ebbinghaus read this and had a thought that would change his life: if sensation could be measured, why not memory?

The timing matters. Wilhelm Wundt had just opened the world's first experimental psychology laboratory in Leipzig in 1879 [5]. Psychology was being born as a science. But Wundt had drawn a boundary. He argued in his *Grundzüge der physiologischen Psychologie* that the "higher" mental processes, memory among them, were beyond the reach of experiment. They belonged to cultural analysis, not the laboratory.

Ebbinghaus never trained under Wundt. He had no laboratory, no students, no institutional support. He had only Fechner's book and an idea. And he decided to prove Wundt wrong.

Two Thousand Syllables and a Metronome

The method Ebbinghaus invented was brilliant in its simplicity and brutal in its demands.

He needed material to memorize that carried no prior associations. If he used real words, his existing knowledge would contaminate the results. A German word like *Haus* would be easier to remember than *Pferd* simply because of its frequency, its emotional associations, its connections to other words. He needed a blank slate.

So he invented nonsense syllables. Consonant-vowel-consonant combinations like ZUC, QAX, WID, and DAX. He constructed approximately 2,300 of them [6]. Each was written on a separate card. Lists of thirteen syllables were assembled. And then Ebbinghaus sat down with his metronome, set it to a precise tempo, and began to memorize.

The procedure was rigid. He read each list aloud at a rate of 0.4 seconds per syllable, using the metronome to keep time. He repeated the list until he could recite it perfectly twice in a row without error. He recorded how long this took. Then he waited. Twenty minutes. An hour. Nine hours. A day. Two days. Six days. Thirty-one days. And then he sat down again and relearned the same list to the same criterion.

The key measurement was what he called *savings*. If a list originally took him ten minutes to learn and only six minutes to relearn, then four minutes had been "saved." That represented a 40% savings score, meaning 40% of the original learning was still present in memory [2].
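The savings calculation is simple enough to write down directly. The sketch below is only an illustration of the arithmetic in the example above; the function name `savings_score` is invented here and does not come from Ebbinghaus.

```python
def savings_score(original_time: float, relearning_time: float) -> float:
    """Return the percentage of original learning time saved on relearning."""
    if original_time <= 0:
        raise ValueError("original_time must be positive")
    return 100.0 * (original_time - relearning_time) / original_time


# The worked example from the text: 10 minutes to learn, 6 minutes to relearn.
print(savings_score(10, 6))  # 40.0
```

A score of 0 means relearning took just as long as the first time. A score near 100 means the list was still almost fully available.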

He did this thousands of times. Between 1879 and 1880, and again between 1883 and 1884, he served as his own and only test subject. He controlled for time of day, for fatigue, for mood, for the order of lists. He was, in effect, running a clinical trial on himself.

The results were published in 1885 as *Über das Gedächtnis: Untersuchungen zur experimentellen Psychologie* (Memory: A Contribution to Experimental Psychology) [7]. It was dedicated to Gustav Fechner.

The pattern was unmistakable. Memory dropped sharply in the first hour, continued to fall through the first day, and then leveled off. After a month, roughly 80% of the original learning had vanished. But 20% survived. Something was retained, stubbornly, even without any review.

This was the forgetting curve. The first quantitative law of a higher mental process.

What the Curve Actually Means

The forgetting curve is often misunderstood. Popular sources frequently state that "we forget 50% within an hour" or "90% within a week." These numbers are not quite right, and the confusion comes from mixing up savings scores with free-recall accuracy.

Ebbinghaus measured savings, not recall. A savings score of 44.2% at one hour does not mean he remembered 44.2% of the syllables. It means relearning took 44.2% less time than original learning. The two measures are related but not identical.

The mathematical form of the curve has been debated for over a century. Ebbinghaus himself fitted his 1885 data with a logarithmic function: b = 100k / ((log t)^c + k), where b is savings in percent, t is time in minutes, log is the base-10 logarithm, c = 1.25, and k = 1.84 [6]. In his earlier 1880 manuscript he had tried a power function instead.
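To get a feel for what this function predicts, it can be evaluated at the retention intervals Ebbinghaus used. The following is a minimal sketch, taking log as the base-10 logarithm and using the constants quoted above; the function name `ebbinghaus_savings` is made up for this illustration.

```python
import math

K = 1.84  # constant k quoted in the text
C = 1.25  # exponent c quoted in the text


def ebbinghaus_savings(t_minutes: float) -> float:
    """Savings b (percent) predicted by b = 100k / ((log10 t)^c + k)."""
    if t_minutes < 1:
        raise ValueError("the formula is only defined here for t >= 1 minute")
    return 100.0 * K / (math.log10(t_minutes) ** C + K)


# Retention intervals from the text, converted to minutes.
intervals = {
    "20 minutes": 20,
    "1 hour": 60,
    "9 hours": 9 * 60,
    "1 day": 24 * 60,
    "2 days": 2 * 24 * 60,
    "6 days": 6 * 24 * 60,
    "31 days": 31 * 24 * 60,
}
for label, minutes in intervals.items():
    print(f"{label:>10}: {ebbinghaus_savings(minutes):5.1f}% savings")
```

The fitted values track the measurements closely but not exactly. At one hour, for instance, the formula gives about 47%, a few points above the 44.2% savings Ebbinghaus actually recorded.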

Modern researchers have pushed this question further. John Wixted and Ebbe Ebbesen at UC San Diego published a landmark study in 1991 showing that across three very different memory tasks, including word recall, face recognition, and pigeon delayed matching-to-sample, a simple power function, R = at^(-b), provided a better fit than exponential, logarithmic, hyperbolic, or linear models [8].

This matters because the popular formula R = e^(-t/S), which appears in countless blog posts and textbook summaries, does not actually fit most data sets very well. The forgetting curve is real. Its shape is well-established. But the equation most people associate with it is an oversimplification.
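The difference between the two shapes can be seen even in a handful of numbers. The sketch below is a rough illustration, not the analysis Wixted and Ebbesen performed. It takes four rounded savings figures quoted in this article and compares straight-line fits in the two transformed spaces: a power function is linear in log R against log t, and an exponential is linear in log R against t. Four points and log-space fitting are crude assumptions chosen to keep the example short.

```python
import math

# Approximate savings figures quoted in this article (minutes, percent).
# They are rounded and used for illustration only.
data = [(20, 58.0), (60, 44.2), (24 * 60, 33.0), (31 * 24 * 60, 20.0)]


def linear_r2(xs, ys):
    """R^2 of an ordinary least-squares line fitted to (xs, ys)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    syy = sum((y - my) ** 2 for y in ys)
    return (sxy * sxy) / (sxx * syy)


times = [t for t, _ in data]
log_savings = [math.log(s) for _, s in data]

# Power law R = a * t^(-b) is a straight line in log(R) versus log(t).
r2_power = linear_r2([math.log(t) for t in times], log_savings)
# Exponential R = a * e^(-t/S) is a straight line in log(R) versus t.
r2_exponential = linear_r2(times, log_savings)

print(f"power law   R^2 = {r2_power:.3f}")
print(f"exponential R^2 = {r2_exponential:.3f}")
```

On these figures the power-law line fits noticeably better. The exponential cannot account for a curve that falls steeply in the first hour and then barely moves over a month.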

A still more recent challenge came from Nate Kornell and Robert Bjork in 2025. In a paper published in *Behavioral Sciences*, they argued that empirical forgetting curves do not directly reflect the decay rate of individual memories. Instead, the observed curve is an emergent property of many memories with different strengths crossing a recall threshold at different times [9]. A group-level curve can appear to follow a power law even if individual items decay exponentially, simply because of variation in initial encoding strength. The forgetting curve, they suggest, is partly a statistical shadow.
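A toy model makes the threshold idea concrete. It is not the model from the paper: the strengths, the threshold, and the time constant below are invented values, and the only point is that the group curve is produced by counting threshold crossings, so its shape depends on how initial strengths are spread out.

```python
import math

TAU = 10.0        # hypothetical decay time constant, arbitrary time units
THRESHOLD = 1.0   # hypothetical strength needed for successful recall

# Hypothetical initial strengths for ten items; only the variation matters.
initial_strengths = [1.2, 1.5, 2.0, 2.5, 3.0, 4.0, 6.0, 9.0, 15.0, 30.0]


def strength(s0: float, t: float) -> float:
    """Every single item decays exponentially at the same rate."""
    return s0 * math.exp(-t / TAU)


def fraction_recalled(t: float) -> float:
    """Share of items whose strength is still at or above the threshold."""
    above = sum(1 for s0 in initial_strengths if strength(s0, t) >= THRESHOLD)
    return above / len(initial_strengths)


for t in (0, 5, 10, 20, 30):
    print(f"t={t:>2}: one item at {strength(2.0, t):.2f}, "
          f"group recall {fraction_recalled(t):.0%}")
```

Each item follows the same smooth exponential, yet the recall percentage steps down only when an item crosses the threshold. Change the list of initial strengths and the group curve changes shape while the decay law stays the same.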

One Hundred and Thirty Years Later, the Same Result

Does the forgetting curve hold up? The most direct answer came in 2015, when Jaap Murre at the University of Amsterdam and Joeri Dros replicated Ebbinghaus's experiment with remarkable fidelity.

Dros, a twenty-two-year-old student, spent seventy hours over seventy-five days memorizing and relearning lists of nonsense syllables constructed to follow Dutch phonotactics. The lists were thirteen syllables long. The retention intervals matched Ebbinghaus's: twenty minutes, one hour, nine hours, one day, two days, six days, and thirty-one days. The presentation rate was the same. The criterion was the same [2].

The forgetting curves Dros produced were nearly identical to Ebbinghaus's original data. One hundred and thirty years had passed. The subject was a different person, speaking a different language, in a different century. And the curve had the same shape.

But Murre and Dros noticed something Ebbinghaus could not have explained. At the twenty-four-hour data point, there was a small but consistent upward jump in savings. Memory after a night of sleep was slightly better than the smooth curve would predict. The researchers attributed this to sleep-dependent memory consolidation, a phenomenon that Ebbinghaus's contemporary science had no framework to understand but that modern neuroscience has studied extensively.

Why the Brain Forgets: Five Theories, One Truth

Ebbinghaus described what happens. He did not explain why. That question has occupied memory scientists for over a century, and five distinct theories have emerged. None is wrong. All capture part of the picture.

The oldest is trace decay theory. The idea is intuitive: memories are physical traces in the brain, and without use, they simply fade. Like footprints in sand. Edward Thorndike formalized this as the "law of disuse" in the early 1900s. But in 1932, John McGeoch published a devastating critique in *Psychological Review*, arguing that time itself does nothing to memory [10]. What happens during time is what matters. And what happens is interference.

Interference theory became the dominant framework for decades. The core insight is that memories compete. Proactive interference occurs when old memories disrupt recall of new ones. Retroactive interference occurs when new memories disrupt recall of old ones. Benton Underwood reanalyzed laboratory forgetting data in 1957 and argued that much of what looked like natural decay was actually proactive interference from previously learned lists [10]. The more lists a subject had memorized in prior experiments, the faster they forgot the current one.

Jenkins and Dallenbach had demonstrated the retroactive kind as early as 1924. Subjects who slept after learning retained far more than subjects who stayed awake for the same period. Sleep reduces retroactive interference because fewer new experiences are encoded during sleep [6].

Retrieval failure theory shifted the focus from storage to access. Endel Tulving and Donald Thomson proposed the encoding specificity principle in 1973: a memory can only be retrieved if the cues present at retrieval match the cues that were encoded with the memory [11]. The memory is still there. You just cannot find it. Godden and Baddeley's famous 1975 experiment showed this concretely. Divers who learned word lists underwater recalled them better underwater than on land, and vice versa.

Motivated forgetting adds an intentional component. Michael Anderson and Collin Green showed in 2001 that people can deliberately suppress unwanted memories. In their Think/No-Think paradigm, participants learned word pairs and were then trained to either think of the target or actively suppress it when shown the cue [12]. Suppressed items were later harder to recall even with independent cues, suggesting that the memory trace itself had been weakened.

Consolidation failure is the fifth framework. James McGaugh, in a series of papers culminating in a 2000 *Science* review, showed that new memories pass through a fragile consolidation window during which they must be stabilized through protein synthesis, synaptic remodeling, and eventually systems-level transfer from hippocampus to cortex [13]. If this process is disrupted by interference, stress, or lack of sleep, the memory never makes it to long-term storage.

Modern neuroscience increasingly treats these theories as complementary mechanisms rather than competing explanations. A memory can be lost through decay of its synaptic substrate, through interference from competing traces, through loss of retrieval cues, through active suppression, or through failed consolidation. And often, several of these operate simultaneously.

The Molecular Machinery of Forgetting

For most of the twentieth century, forgetting was studied purely through behavior. People learned things, forgot things, and psychologists measured the gap. But starting in the 1990s, neuroscientists began opening the black box.

The story begins with long-term potentiation, or LTP. In 1973, Timothy Bliss and Terje Lømo discovered that brief, high-frequency stimulation of a neural pathway produces a lasting increase in the strength of synaptic transmission [14]. This is the closest thing science has found to a cellular memory trace. When you learn something, specific synapses get stronger. LTP is that strengthening.

But LTP decays. And the decay is not passive.

Oliver Hardt, Karim Nader, and Yu Tian Wang proposed in 2014 that the natural forgetting of both short-term and long-term memories is driven by the removal of a specific type of receptor from the synapse. These are AMPA receptors containing the GluA2 subunit. During LTP, more of these receptors are inserted into the postsynaptic membrane, making the synapse more responsive. During forgetting, they are pulled back inside the cell through a process called endocytosis [15].

The critical experiment came from Migues and colleagues in 2016. They showed that if you pharmacologically block GluA2-AMPA receptor removal in the hippocampus of rats, you prevent the animals from forgetting. Object-location memories that would normally fade over days remained intact [16]. Forgetting, at the molecular level, is an active enzymatic process. The cell is not passively losing its memory. It is actively dismantling it.

On the other side of this molecular tug-of-war sits PKMζ (protein kinase M zeta), an enzyme that works to keep AMPA receptors at the synapse. Todd Sacktor's laboratory showed that PKMζ is a constitutively active kinase whose persistent activity maintains potentiated synapses [17]. Memory maintenance, in this view, is not a static state. It is a continuous battle between forces that insert receptors and forces that remove them.

The Brain That Actively Erases

The molecular story gets stranger. Forgetting is not just about receptors falling off synapses. The brain has dedicated forgetting circuits.

Ron Davis and Yi Zhong at the Scripps Research Institute and Tsinghua University, respectively, spent two decades studying forgetting in *Drosophila*, the fruit fly. Their work, summarized in a 2017 *Neuron* review, revealed a specific molecular pathway for what they call "intrinsic forgetting" [18].

Here is how it works. In the mushroom body, the fly's memory center, a small group of dopaminergic neurons function as "forgetting cells." After a memory is formed, these neurons release dopamine onto the engram cells that hold the memory. The dopamine activates a receptor called DAMB, which triggers a small GTPase protein called Rac1. Rac1, in turn, acts on cofilin, a protein that remodels the actin cytoskeleton. Since the structural integrity of synapses depends on actin, the memory trace is physically dismantled [19].

This is not a metaphor. The brain has molecular scissors specifically designed to cut the structural supports of memories.

Does this extend beyond fruit flies? Yes. Liu and colleagues showed in 2016 that hippocampal Rac1 activation regulates forgetting of object recognition memory in mice. And Paul Frankland and Sheena Josselyn at the Hospital for Sick Children in Toronto added another twist. In 2014, publishing in *Science*, they showed that hippocampal neurogenesis, the birth of new neurons in the dentate gyrus, actually causes forgetting [20].

The experiment was elegant. When they artificially increased neurogenesis in adult mice after memory formation, the mice forgot faster. When they suppressed neurogenesis in infant mice, where it is naturally very high, the infants retained memories that they would normally have lost. This, they argued, explains infantile amnesia, the near-universal inability to remember events from early childhood. Babies are not bad at forming memories. They are too good at making new neurons, and those new neurons restructure the hippocampal circuits where memories were stored [21].

Sleep: The Editor That Decides What Stays

If the brain has active forgetting machinery, what protects important memories from being erased?

Part of the answer is sleep.

Giulio Tononi and Chiara Cirelli at the University of Wisconsin-Madison proposed the synaptic homeostasis hypothesis, or SHY, in 2006. The idea is elegant [22]. During waking hours, learning strengthens synapses across the brain. By the end of the day, the total synaptic load is higher than at the start. This costs energy, increases neural noise, and eventually saturates the system's capacity to encode new information. Sleep, specifically slow-wave sleep, renormalizes synaptic weights. Weak synapses are scaled down. Strong synapses survive. The result is selective forgetting: trivial experiences are pruned while important ones are preserved.

Susanne Diekelmann and Jan Born at the University of Tübingen provided the complementary framework. In a 2010 review in *Nature Reviews Neuroscience*, they described how slow oscillations, sleep spindles (brief bursts of 12-15 Hz activity), and hippocampal sharp-wave ripples form a nested temporal hierarchy during sleep [23]. The slow oscillation comes first, creating windows of high cortical excitability. The spindle rides on top. And the ripple, a very fast oscillation of 140-200 Hz originating in the hippocampus, nests inside the spindle. During each ripple, the hippocampus replays compressed versions of the day's experiences. If this replay coincides with a cortical spindle during a slow-oscillation up-state, the memory is transferred to neocortical storage. If not, it fades.

This is why the Murre and Dros replication found a small upward jump in the forgetting curve at the twenty-four-hour mark. Sleep had selectively rescued some memories that would otherwise have continued to decay.

What Bends the Curve

The forgetting curve Ebbinghaus described is a baseline, not a destiny. Several factors systematically change its shape.

Meaningfulness. Ebbinghaus himself noted that meaningful material, such as stanzas of *Don Juan*, was retained about ten times better than nonsense syllables [6]. Tse and colleagues at the University of Edinburgh showed why in a 2007 *Science* paper: when rats already had a schema for flavor-place associations, new associations consistent with that schema were learned in a single trial and became independent of the hippocampus within about two days, far faster than the usual gradual consolidation process [13]. Prior knowledge flattens the curve because it provides a scaffolding onto which new information can attach.

Emotional salience. James McGaugh's decades of research showed that emotional arousal at the time of encoding triggers the release of stress hormones that, through beta-adrenergic receptors in the amygdala (an almond-shaped structure deep in the temporal lobe that processes fear and emotional memory), enhance consolidation in hippocampal and cortical circuits [24]. This is why you remember where you were when you heard shocking news but cannot recall what you had for lunch three days ago. Emotional memories resist the forgetting curve.

Stress. The relationship between stress and memory follows an inverted U. Moderate cortisol at encoding enhances consolidation. But high or chronic cortisol impairs both encoding and retrieval [24]. The same stress hormone that helps you remember a near-accident can, when chronically elevated, accelerate forgetting of everything else.

Aging. Wearn and colleagues published a study in 2020 showing that "accelerated long-term forgetting," where people perform normally on tests thirty minutes after learning but forget abnormally fast over subsequent weeks, predicts cognitive decline in healthy older adults better than standard clinical memory tests [25]. The forgetting curve, it turns out, can serve as an early warning system for neurodegeneration.

Fighting the Curve: Spaced Repetition and the Testing Effect

Ebbinghaus did not just discover the problem. He also found the beginning of a solution.

In Chapter VIII of *Über das Gedächtnis*, he observed that distributing practice across days produced more durable retention than massing it into a single session. This was the first documented observation of the spacing effect [1].

The most authoritative modern confirmation came from Nicholas Cepeda, Harold Pashler, Edward Vul, John Wixted, and Doug Rohrer. In 2006, they published a meta-analysis in *Psychological Bulletin* covering 184 articles, 317 experiments, and 839 assessments of the spacing effect [26]. The conclusion was unambiguous: spaced practice consistently outperforms massed practice across age groups, materials, and testing delays. A follow-up study in 2008 tested 1,354 participants over retention intervals up to a year and found that the optimal spacing gap grows with the desired retention interval but shrinks as a proportion of it, from roughly 10 to 20 percent or more at a one-week delay to roughly 5 to 10 percent at a one-year delay. Want to remember something for a week? Review it after one day. Want to remember it for a year? Review it after three to five weeks.
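The two rules of thumb can be turned into proportions with a few lines of arithmetic. This is only a sketch of the calculation, not a formula from the study, and both function names are invented for the example.

```python
def gap_as_fraction(gap_days: float, retention_days: float) -> float:
    """Express a review gap as a fraction of the retention interval."""
    return gap_days / retention_days


def suggested_gap(retention_days: float, fraction: float) -> float:
    """Review gap in days for a given retention interval and gap fraction."""
    return retention_days * fraction


# The rules of thumb from the text, expressed as percentages.
print(f"{gap_as_fraction(1, 7):.0%}")     # one day for a one-week goal: 14%
print(f"{gap_as_fraction(21, 365):.0%}")  # three weeks for a one-year goal: 6%
print(f"{gap_as_fraction(35, 365):.0%}")  # five weeks for a one-year goal: 10%
```

Note that the one-year examples work out to a smaller share of the retention interval than the one-week example. A single fixed percentage is a convenient summary, not a precise rule.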

The second weapon against the curve is retrieval practice. Jeffrey Karpicke and Henry Roediger III published a study in *Science* in 2008 that demonstrated this with striking clarity [27]. Students learned foreign-language vocabulary pairs. Once they could correctly produce a translation, some continued to study the pair while others were simply tested on it repeatedly. One week later, the testing group vastly outperformed the studying group. And here is the uncomfortable part: students predicted the opposite. They expected that additional study would help more than additional testing.

Robert and Elizabeth Bjork at UCLA provided the theoretical framework in 1992 with their New Theory of Disuse. They distinguished between two properties of every memory: storage strength, which accumulates with learning and never declines, and retrieval strength, which reflects current accessibility and fluctuates [6]. The critical insight is this: when retrieval strength is low, the act of successfully retrieving a memory produces a larger boost to storage strength. In other words, forgetting a little before reviewing actually helps you remember longer. Each successful retrieval resets the forgetting curve, but at a higher and flatter baseline.

This is the principle behind every modern spaced repetition system. Each review is timed to occur just before the memory would otherwise be lost. And each review makes the next forgetting slower.
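A toy scheduler shows the logic. It is a sketch under loud assumptions, not the algorithm of any real system: it borrows the simplified exponential R = e^(-t/S) that this article has already called an oversimplification, it schedules each review for the moment modeled retention falls to a chosen target, and it assumes every successful review multiplies the stability S by a made-up growth factor.

```python
import math


def next_interval(stability: float, target_retention: float) -> float:
    """Time until modeled retention exp(-t / stability) falls to the target."""
    return -stability * math.log(target_retention)


def schedule(reviews: int, stability: float = 1.0,
             growth: float = 2.0, target_retention: float = 0.9) -> list:
    """Return the gaps (in days) between successive reviews."""
    gaps = []
    for _ in range(reviews):
        gaps.append(next_interval(stability, target_retention))
        stability *= growth  # assumed: each successful review flattens the curve
    return gaps


print([round(g, 2) for g in schedule(5)])
```

With these hypothetical settings each gap is twice the previous one. Real systems estimate stability from a learner's actual review history rather than assuming a fixed multiplier.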

Why Forgetting Is Not a Bug

It is tempting to see forgetting as a failure. A leaky bucket. A design flaw. But modern cognitive science has increasingly come to see it as a feature, not a bug.

The computational argument is straightforward. Michael McCloskey and Neal Cohen showed in 1989 that artificial neural networks trained sequentially on multiple tasks suffer from catastrophic forgetting. New learning overwrites old learning because the same parameters are updated for different tasks [28]. Biological brains do not have this problem, and one reason is that they forget strategically. By weakening irrelevant connections, the brain reduces interference and keeps old and new knowledge from colliding.
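The effect can be reproduced in the smallest possible model. The sketch below is not the network McCloskey and Cohen studied. It uses a single weight and two invented tasks, which is enough to show what happens when the same parameter is updated for both.

```python
def train(w: float, slope: float, steps: int = 200, lr: float = 0.05) -> float:
    """Gradient descent on squared error for y = slope * x, with one weight w."""
    xs = [-1.0, -0.5, 0.5, 1.0]
    for _ in range(steps):
        grad = sum(2 * (w * x - slope * x) * x for x in xs) / len(xs)
        w -= lr * grad
    return w


def error(w: float, slope: float) -> float:
    """Mean squared error of weight w on the task y = slope * x."""
    xs = [-1.0, -0.5, 0.5, 1.0]
    return sum((w * x - slope * x) ** 2 for x in xs) / len(xs)


w = train(0.0, slope=2.0)           # learn task A
error_a_before = error(w, 2.0)
w = train(w, slope=-3.0)            # then learn task B with the same weight
error_a_after = error(w, 2.0)

print(f"task A error after learning A: {error_a_before:.4f}")
print(f"task A error after learning B: {error_a_after:.4f}")
```

After the second round of training the weight fits task B almost perfectly and task A not at all. Nothing decayed. The old knowledge was simply overwritten.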

Blake Richards and Paul Frankland made this argument explicitly in a 2017 *Neuron* paper titled "The Persistence and Transience of Memory." They proposed that the goal of memory is not to preserve a faithful record of the past but to support intelligent decision-making in the future. Forgetting the details while retaining the gist is exactly what enables generalization [18]. A child who remembers every individual dog they have ever seen but cannot form the abstract concept "dog" would be at a disadvantage.

John Anderson and Lael Schooler at Carnegie Mellon provided an ecological argument in 1991. They analyzed the statistical structure of information in real-world environments, examining words in *New York Times* headlines, speech addressed to young children, and the senders of email messages. In every case, the probability that a piece of information would be needed declined as a function of time in a way that closely matched the power-law form of human forgetting [6]. The brain forgets at approximately the rate at which information becomes statistically irrelevant. Forgetting, in this view, is rational.

The Curve That Changed a Science

Ebbinghaus died on February 26, 1909, of pneumonia, in Halle, Germany. He was fifty-nine years old. He had published remarkably little beyond his landmark monograph, a textbook, and a handful of papers. He never built the kind of research empire that Wundt assembled at Leipzig. He had no famous students to carry his work forward.

And yet his influence is everywhere.

The savings method he invented was the standard measure of memory for fifty years. The nonsense syllable he created was used in thousands of experiments across dozens of laboratories. The forgetting curve itself became one of the most reproduced findings in all of psychology. And the spacing effect he documented in Chapter VIII became the foundation of an entire industry of learning technology.

But his deepest contribution may be the simplest one. He showed that the mind obeys laws. That the feeling of forgetting, the frustration of losing a name or a fact or a skill, is not random. It is patterned. It is predictable. And what is predictable can be managed.

The forgetting curve is not just a graph in a textbook. It is a map of a universal human experience, drawn by a man who sat alone in a Berlin apartment with a metronome and refused to accept that memory was beyond the reach of science.

Conclusion

The forgetting curve began as one man's experiment and became one of psychology's most enduring discoveries. From Ebbinghaus's nonsense syllables to Murre and Dros's faithful replication, from Wixted's mathematical analyses to Hardt's molecular mechanisms, from Davis's fly-brain forgetting circuits to Frankland's neurogenesis findings, the science of forgetting has grown enormously while the original curve has remained remarkably stable.

What has changed is the interpretation. Forgetting is no longer seen as mere decay. It is an active, regulated, and adaptive process with identifiable molecular substrates, dedicated neural circuits, and clear evolutionary logic. The brain does not fail to remember. It chooses what to forget.

And the most powerful antidote to forgetting, spaced repetition combined with retrieval practice, works precisely because it exploits the curve rather than fighting it. Each well-timed review forces the brain to work harder at retrieval, which strengthens the memory trace and flattens the next curve. The enemy of memory, it turns out, is also its trainer.

Ebbinghaus could not have imagined any of this. He could not have known about synapses, dopamine, or hippocampal neurogenesis. But he understood, with extraordinary clarity, the first and most essential truth: memory is lawful. Forgetting follows a pattern. And patterns can be understood.

Frequently Asked Questions

What is the forgetting curve?

The forgetting curve is a model of memory retention first described by Hermann Ebbinghaus in 1885. It shows that newly learned information is lost rapidly in the first hours and days, then more slowly over time. Without review, roughly 80% of learned material is forgotten within a month.

How fast do we forget new information?

According to Ebbinghaus's original data, measured as savings rather than recall, about 42% of the original learning is lost within 20 minutes. After one hour, about 56% is gone. By the end of one day, approximately two-thirds has vanished. The rate of loss slows after the first day but continues over weeks.

Is the forgetting curve the same for everyone?

The general shape of the curve is consistent across individuals and has been replicated across cultures and languages. However, the rate of forgetting varies depending on meaningfulness, emotional significance, prior knowledge, sleep quality, stress levels, and whether spaced repetition is used.

Can you prevent forgetting entirely?

Complete prevention of forgetting is neither possible nor desirable, since forgetting serves adaptive functions like reducing interference and supporting generalization. However, spaced repetition and retrieval practice can dramatically flatten the curve, extending retention from days to months or years with minimal review.

What is the best way to beat the forgetting curve?

Research consistently shows that the most effective strategy combines spaced repetition (reviewing material at increasing intervals) with active retrieval practice (testing yourself rather than re-reading). A meta-analysis of 184 articles confirmed that distributed practice outperforms massed practice across age groups and material types.