Introduction

A six-month-old infant is sitting on her mother's lap, listening to the sounds around her. No one explains what a verb is. No one puts a conjugation table in front of her. No one even speaks more slowly. And yet, by age three, this child will know about a thousand words, construct multi-clause sentences, distinguish past from future tense, and follow grammatical rules that most adults cannot explain [1]. In that same period, a smart, motivated adult who has spent all their time studying a second language still fumbles ordering food at a restaurant. Why? What does the infant brain have that the adult brain lacks? And conversely, what does the adult brain have that gets in the way?

This article is the story of one of the most complex capabilities known in nature: the human brain's ability to learn language. A story that begins in linguistics laboratories, passes through cognitive psychology, and descends into the depths of neurons and synapses. A story with real characters — from Noam Chomsky to a thirteen-year-old girl named Genie who never got the chance to speak, from deaf children in Nicaragua who built a new language from nothing to Swedish soldiers whose brains physically grew after three months of intensive language training [2]. And a story with unresolved questions — is there really a biological window that closes? Does the language you speak reshape your thinking? And does being bilingual truly sharpen your brain, or is that just a scientific myth?

The Infant Who Was Born a Statistician

No one teaches a newborn where one word ends and the next begins. In written language, spaces do that job. But in natural speech, there are no spaces. Words blend into each other like overlapping waves with no clear boundaries between them. Adults do not notice this because their brains automatically segment words — but if you listen to a language you don't know, you hear a continuous stream of sound and cannot tell where one word starts and another ends.

Infants face the same problem. With one difference: they solve it.

In 1996, Jenny Saffran, Richard Aslin, and Elissa Newport at the University of Rochester designed an experiment that shook the field of linguistics [3]. They sat eight-month-old infants on their parents' laps in a laboratory and played a monotonous stream of nonsense syllables from a speaker. No pauses. No changes in intonation. No acoustic cues whatsoever. Just syllables in sequence: "bidakupadotigolabubidakugolabupadoti..." Duration: two minutes. The only clue hidden in this stream was statistical — the probability that the syllable "da" followed "bi" was one hundred percent (because "bidaku" was a "word"), but the probability that "ku" was followed by "pa" was only thirty-three percent (because that was a word boundary).

After just two minutes of listening, the infants were tested. The result: they distinguished "words" from "non-words." An eight-month-old brain that could not yet produce a single word had computed the transitional probabilities between syllables. Without instruction. Without reward. Without feedback. Purely through exposure to statistical patterns. Saffran and colleagues called it "statistical learning" and demonstrated that human infants are born with computational power that software engineers would envy.
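
The statistic the infants appear to track can be sketched in a few lines. This is a minimal illustration, not the authors' stimulus-generation procedure: it assumes a four-word nonsense vocabulary (the fourth word, "tupiro", does not appear in the text above and is an assumption added so that the boundary probability comes out near one third), two-letter syllables, and no word repeated twice in a row. The function names are hypothetical.

```python
import random
from collections import Counter

# Assumed vocabulary: three words from the stream quoted above plus one
# hypothetical fourth word, so that each word has three possible successors.
WORDS = ["bidaku", "padoti", "golabu", "tupiro"]

def syllabify(word):
    """Split a nonsense word into its two-letter syllables."""
    return [word[i:i + 2] for i in range(0, len(word), 2)]

def make_stream(n_words, seed=0):
    """Concatenate randomly chosen words, never repeating one back to back."""
    rng = random.Random(seed)
    stream, prev = [], None
    for _ in range(n_words):
        word = rng.choice([w for w in WORDS if w != prev])
        stream.extend(syllabify(word))
        prev = word
    return stream

def transitional_probabilities(stream):
    """Estimate P(next syllable | current syllable) from adjacent pairs."""
    pair_counts = Counter(zip(stream, stream[1:]))
    first_counts = Counter(stream[:-1])
    return {(a, b): n / first_counts[a] for (a, b), n in pair_counts.items()}

stream = make_stream(3000)
tp = transitional_probabilities(stream)

print(tp[("bi", "da")])            # inside a word: 1.0
print(round(tp[("ku", "pa")], 2))  # across a word boundary: close to 1/3
```

Under these assumptions, a learner who simply posits a boundary wherever the transitional probability dips would recover the four "words" without any acoustic cue.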

But statistical learning is only part of the story. Patricia Kuhl at the Institute for Learning and Brain Sciences at the University of Washington spent decades studying what she calls "the magic of the first year" [1]. Between birth and twelve months, something astonishing happens to an infant's phonetic perception. A newborn is a "citizen of the world" linguistically — able to distinguish all the sounds of all the world's languages. The English "r" and "l"? No problem. The distinction between two types of "t" in Hindi? Detected. The difference between three closely spaced vowels in Swedish? Yes.

But between six and twelve months, this universal ability starts to fade. The infant's brain gradually "commits" to the sounds of its ambient language and loses the ability to discriminate sounds from other languages. A Japanese infant who easily distinguished English "r" from "l" at six months can no longer do so at ten months — because Japanese does not make that distinction [4]. In return, their discrimination of Japanese phonetic contrasts has sharpened. The brain has tuned itself. It has redirected its resources from "hear everything" to "native language specialist." Kuhl named this process "neural commitment to the native language."

Now here is where the story gets interesting. Kuhl wanted to know whether this process could be reversed. If you expose an American infant to Mandarin Chinese, could they preserve their sensitivity to Mandarin sounds? In 2003, she designed an experiment that became one of the decade's most important findings in language science [5]. She divided nine-month-old American infants into three groups. Group one: twelve twenty-five-minute sessions with a live Mandarin speaker who played with them and spoke Mandarin. Group two: exactly the same people, the same materials, but delivered via video. Group three: the same audio only.

The result was stunning. Group one — infants who interacted with a live person — preserved their ability to discriminate Mandarin sounds. It was as though the twelve-session experience had halted the "closing" of phonetic perception. But the video and audio groups? No effect. Zero. Their performance was identical to a control group that had never been exposed to Mandarin at all.

Consider what this means. The same information. The same sounds. The same words. But without the social presence of a real human being, the infant brain did not process the information. Television could not substitute for a teacher. The human brain requires something beyond acoustic data to learn language. It needs eye contact, pointing, turn-taking — that subtle social exchange that occurs between two living beings.

Universal Grammar: The Biggest Debate in the History of Linguistics

If children learn language merely by listening, there is a major problem. What they hear is not enough.

This idea was introduced in the 1960s by Noam Chomsky, the MIT linguist who is arguably the most controversial living thinker in the humanities, and he called it "the poverty of the stimulus." The argument is simple: the language a child hears is full of incomplete sentences, errors, repetitions, and ambiguities. No one reliably tells the child which sentences are incorrect; the child gets only examples of what people actually say (positive evidence), never a list of what cannot be said. And yet, all children around the world, at roughly the same age, at roughly the same speed, following roughly the same trajectory, arrive at the complex grammar of their native language.

Chomsky concluded that something innate must exist in the brain. A set of linguistic principles shared by all humans — something he called "Universal Grammar." In his view, the human brain is born with a "language acquisition device" that already contains the basic structures of language. Experience merely determines which "settings" get activated — like switches being turned on or off.

In 2002, Marc Hauser, Chomsky, and Tecumseh Fitch published a paper in Science that brought the debate to a peak [6]. Their claim was bold: the only linguistic feature that is uniquely human is "recursion" — the ability to produce unlimited sentences from finite elements by embedding structures within structures — as in "the man who saw the woman who owned the dog that..." Everything else about language — the sensorimotor system and the conceptual system — is shared between humans and other animals.

Steven Pinker and Ray Jackendoff responded in 2005, challenging virtually every part of this claim [7]. They argued that recursion is not the only uniquely human feature. Phonology, morphology, the case system — all are uniquely human and found in no other animal. Speech perception also shows qualitative differences between humans and animals.

But the more serious challenge came from another direction. Michael Tomasello — an evolutionary psychologist at the Max Planck Institute — proposed an entirely alternative theory: the "usage-based" approach. In Tomasello's view, children need no innate grammar. They learn language with two general cognitive abilities: "intention reading" — understanding what someone means by saying something — and "pattern finding" — discovering rules through categorization, analogy, and distributional analysis. Children first learn specific, limited linguistic structures — "give water," "don't want" — and only after sufficient repetition and experience extract the broader patterns.

Evans and Levinson dealt another blow in 2009. In a provocative paper in Behavioral and Brain Sciences, they argued that "the myth of language universals" has no basis in reality [8]. They showed that features long assumed to be universal — such as the noun-verb distinction, clause structure, or even personal pronouns — are absent in some languages. The actual linguistic diversity of the world is so vast that the claim of a shared universal grammar is called into question.

And Ewa Dąbrowska in 2015 systematically examined the three main pillars of Universal Grammar — universality, convergence, and poverty of the stimulus — and concluded that none hold up under scrutiny [9].

So is Universal Grammar dead? Not exactly. Chomsky and his followers have narrowed the theory — from a rich set of principles and parameters to a single computational operation called "Merge." The debate continues. But what has changed is this: a growing majority of researchers outside generative linguistics believe that the underlying abilities for language may be biological, but not necessarily "language-specific" — perhaps the same general cognitive abilities, when combined with rich social input, produce language.

The Girl Who Could Never Build a Sentence

If the brain is truly designed for language learning, is there a deadline? Is there a window that closes?

Eric Lenneberg — a German-born neurologist at MIT — published a book in 1967 called "Biological Foundations of Language" that became one of the most influential works in the history of linguistics. Lenneberg argued that language acquisition must occur between approximately age two and puberty — a window that coincides with the process of brain lateralization, the period during which the left hemisphere gradually assumes primary responsibility for language processing. After puberty, this flexibility disappears. His evidence? Children who suffer brain damage recover language. Adults do not.

Three years after Lenneberg's book was published, the most painful test of this hypothesis was discovered in Los Angeles.

November 4, 1970. A nearly blind woman walked into a social services office in the city of Arcadia with her thirteen-year-old daughter — by mistake, thinking it was the office for blindness benefits. The social worker immediately noticed something unusual. The girl weighed twenty-seven kilograms — the size of an eight-year-old. She could not walk properly. She had a "bunny walk" — holding her hands out in front and hopping in small steps. She was incontinent. And she produced almost no sound.

Her name was recorded in the scientific literature as "Genie."

Her father, Clark Wiley, had confined her to a locked room from around twenty months of age. During the day, she was strapped to a child's toilet seat. At night, she was imprisoned in a crib-like cage. Her father beat her for any sound she made. Instead of speaking, he growled and barked at her. Her mother and brother were forbidden from speaking to her or even near her. The windows were blacked out. By the time she was found, she was thirteen years and seven months old. Clark killed himself before trial and left a note: "The world will never understand."

A team led by David Rigler from Children's Hospital Los Angeles and Victoria Fromkin from UCLA's linguistics department took on Genie's case. Susan Curtiss — Fromkin's doctoral student — was assigned to linguistic assessment and worked with Genie for years [10].

What could Genie learn? Vocabulary. Considerable vocabulary. Names of objects, people, places. She showed sophisticated mental categorization and strong nonverbal communication skills. What could she not learn? Grammar. Her sentences remained telegraphic: "Apple buy store." "Want milk." Word order, grammatical markers — past tense, plurals, pronouns — none were systematically acquired. The rate of her grammatical progress was far slower than normal and eventually stalled.

Dichotic listening tests — which determine which brain hemisphere processes language — revealed that Genie processed language primarily in her right hemisphere. The opposite of the normal pattern. As though her brain, deprived of linguistic input during the critical period, had never assigned the left hemisphere to language.

Genie's story grew more bitter after the research ended. She was moved to the Rigler household, then to a series of foster homes where she was again mistreated. Her language skills deteriorated rapidly. In 1978, her mother banned all scientific contact with Genie. The last reliable information indicates that Genie lives in a care facility in southern California.

Genie's case demonstrated something no ethical experiment ever could: vocabulary and grammar are separate systems. Vocabulary can be learned even after the critical period. Grammar — that deep structure that makes sentences meaningful — appears to depend on a time window beyond which it is not fully accessible.

Evidence from other sources confirms this same pattern. Anu Sharma and colleagues in 2002 studied children with cochlear implants [11]. One hundred and four congenitally deaf children. Those implanted before age three and a half showed normal cortical responses within six months. Those implanted after age seven, even after years of use, showed abnormal cortical responses. Their brains had not waited for sound: the auditory cortex had been "colonized" by vision and touch. Cross-modal reorganization had occurred and become irreversible.

And Elissa Newport in 1990 studied deaf individuals who had learned American Sign Language at different ages [12]. The result: performance declined linearly with age of first exposure. Those exposed to sign language from birth performed best. Late learners performed worst. And interestingly, Newport proposed the "less is more" hypothesis — perhaps the cognitive limitations of childhood — smaller working memory, more limited attention — are precisely what makes language learning easier. The child is forced to analyze small chunks. The adult, with greater processing capacity, tries to take in everything at once — and that very capacity becomes an obstacle.

The Children Who Built a Language from Nothing

If the human brain is truly designed to build language, it should be possible to see it in action. There should be somewhere that children, without any ready-made language, construct one. Such a place exists. Nicaragua.

Before the 1970s, deaf children in Nicaragua were largely isolated from one another. There was no deaf community. No common sign language existed. Each deaf child had a homemade gesture system with their family — simple and limited movements for basic communication. In 1977, a special education center was established in the San Judas neighborhood of Managua. By 1979, about a hundred deaf children had enrolled. Formal instruction was entirely "oral" — lipreading and spoken Spanish. No sign language was taught. And the method mostly failed.

But on the school buses, in the hallways, on the playground, something else was happening. The children began combining their home signs with each other. A spontaneous communication system took shape. Linguists would later call it "Lenguaje de Signos Nicaragüense" — Nicaraguan Sign Language.

Ann Senghas of Barnard College (Columbia) and Marie Coppola of the University of Connecticut documented this phenomenon over many years [13]. What they found challenged every theory of linguistics.

The first cohort — children who entered the school in the late 1970s and early 1980s — created a pidgin-like system. Their signs were holistic — a single, fluid movement describing an entire event. For instance, to say "the cat rolled down the hill," they would produce one continuous gesture simultaneously conveying "tumbling" and "going downward." There was no consistent grammatical structure.

The second cohort — children who entered from the mid-1980s onward, some as young as four — produced something different. They had been exposed to the first cohort's pidgin-like system. But what they produced was more systematic. "Spatial modulations" — the use of three-dimensional space around the body to specify "who did what to whom" — were used more than twice as often as by the first cohort. And crucially: they broke apart the first cohort's holistic gestures. "The cat rolled down the hill" was no longer a single movement — it became two distinct, sequential signs: one for "tumbling" and one for "going downward" [14].

This decomposition and recombination — building with bricks instead of sculpting with clay — is one of the fundamental properties of all human languages. And four-year-olds who had never seen a complete language invented it on their own.

Steven Pinker said of this case: "The Nicaraguan situation is unique in history. We were able to see how children — not adults — create language, and we were able to document it with precise scientific detail. And it is the only time we have truly witnessed a language being created from nothing."

Jennie Pyers and Ann Senghas in 2009 discovered something else [15]. First-cohort signers failed "false belief" tests — tests that measure theory of mind. They could not understand that another person might hold a belief different from reality. But second-cohort signers succeeded. Language was not merely a tool for communication. Language had shaped the ability to understand other minds.

Does Your Language Change How You Think?

Benjamin Lee Whorf — a fire insurance inspector who pursued linguistics as a hobby — proposed an idea in the 1940s that remains controversial to this day. Whorf claimed that the structure of language determines — or at least influences — how its speakers think. The strong version of this hypothesis — "linguistic determinism" — says you cannot think without language. Virtually no one accepts this version today. But the weak version — that language creates mental habits and shapes default thought patterns — is still alive and has evidence to support it.

Lera Boroditsky at Stanford University (now at UC San Diego) has conducted some of the most compelling experiments in this field. In 2001, she showed that Mandarin speakers — whose language uses vertical metaphors for time ("up month" means "previous month") — verified temporal relationships faster when primed with a vertical array. English speakers were faster with horizontal arrays [16].

But here is where the story gets complicated. David January and Edward Kako in 2007 attempted to replicate Boroditsky's original finding six times [17]. They failed six times. Jenn-Yeu Chen in the same year added four more unsuccessful attempts. Boroditsky herself acknowledged that the spatial priming paradigm had produced "unstable results," but argued that other paradigms show more stable cross-linguistic differences. This is a textbook example of how science works: a compelling finding is published, fails to replicate, and the field is forced to rewrite its claims more carefully.

Stronger evidence has come from color. Jonathan Winawer and colleagues in 2007 designed an experiment [18]. Russian has two basic terms for blue: "goluboy" for light blue and "siniy" for dark blue. Not two shades of one color — two separate colors, like green and blue in English. Twenty-six Russian speakers and twenty-four English speakers at MIT were tested. They had to identify the odd one out in triplets of blue squares. Russian speakers were faster when the grouping crossed the "goluboy/siniy" boundary. English speakers showed no such advantage. But — and this is key — when Russian speakers were given a simultaneous verbal interference task (counting random numbers aloud), their advantage disappeared. "Verbal interference" eliminated the effect. This means the influence of language on color perception requires online access to linguistic categories — when the language system is busy with something else, the effect vanishes.

And then there is the Pirahã affair. Daniel Everett — a field linguist who lived with the Pirahã tribe in the Amazon rainforest for over thirty years — made an explosive claim in 2005 [19]. He said the Pirahã language has no numbers of any kind — not even "one" and "two." No color terms. No embedded clauses — meaning no recursion, the very feature Chomsky had declared the sole uniquely human property of language. Everett argued these limitations are cultural, not biological — Pirahã culture is built on "immediacy of experience," and anything outside direct experience — exact numbers, fictional stories, distant history — is set aside.

The reaction from MIT was fierce. Everett was accused of "charlatanism." A letter was sent to Brazil's indigenous affairs agency that resulted in the revocation of Everett's field research permit. The journal Language devoted nearly half of its June 2009 issue to the controversy. The matter remains unresolved. But it demonstrated one thing: any claim about "language universals" must be tested against field data from the actual diversity of the world's seven thousand living languages [20].

The current consensus? Linguistic determinism is definitively rejected. The influence of language on thought — in its weak version — is broadly accepted, with the important caveat that effects are often "online" and require the language system to be actively engaged in the moment.

Why Adults Struggle to Learn Languages

So far we have seen how infants build language from nothing. Now the question flips: why do adults, with all their intelligence, motivation, and tools, find this so difficult?

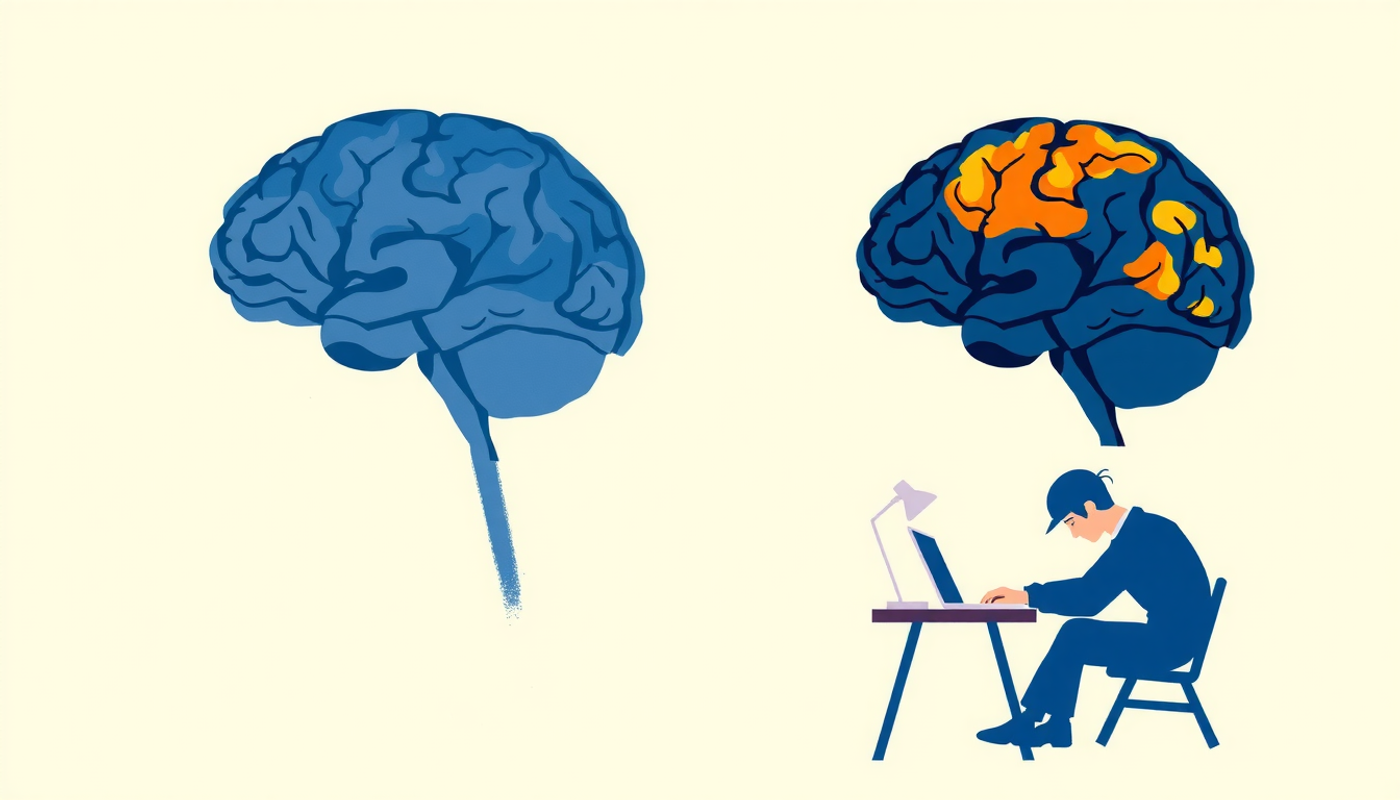

Michael Ullman at Georgetown University has proposed a model that provides one of the best explanations available: the declarative/procedural model [21]. The idea is simple but powerful. The brain has two primary memory systems. The "procedural" system — housed in the basal ganglia and Broca's area — manages automatic, unconscious operations: riding a bicycle, touch typing, and native-language grammar. The "declarative" system — housed in the hippocampus and medial temporal lobe — stores conscious, fact-based information: the capital of France, grandmother's birthday, and new vocabulary.

When a child learns their first language, grammar enters the procedural system directly. Automatic, unconscious, fast. An English-speaking child says "dogs" without pausing to think about the plural rule. But when an adult learns a second language, grammar initially enters the declarative system — like memorizing a formula. Conscious, slow, effortful. For every sentence, you must recall the rule and apply it. Only with extensive practice does grammatical processing gradually transfer to the procedural system and begin to resemble the brain's native-language pattern.

Robert DeKeyser, through his "skill acquisition" theory, explains the same trajectory from a different angle. Learning a second language, like learning any complex skill — driving, playing piano — has three stages: declarative (knowing the rule), procedural (applying the rule with effort), and automatic (applying without thinking). The critical variable is practice — massive, varied, and meaningful practice.

But is there truly a biological cutoff point? The largest study to address this question is the work of Joshua Hartshorne, Joshua Tenenbaum, and Steven Pinker in 2018 [22]. They designed an online grammar quiz called "Which English?" and made it go viral on Facebook. It was shared over 300,000 times. A total of 669,498 people — monolinguals and bilinguals from around the world — completed the 132-question English grammar test.

Using a sophisticated computational model, they disentangled three intertwined variables: current age, age of first exposure, and years of experience. The result: grammar-learning ability remains roughly constant until approximately 17.4 years of age — far later than previously thought. After that, decline begins. But the researchers themselves acknowledged they could not determine whether this decline is biological or social. Around ages 17 to 18, most people leave home, enter university or the workforce, and lose the immersive language environments of childhood.

Van der Slik and colleagues in 2022 reanalyzed the same data [23] and showed that the "sharp cutoff" at 17.4 years was a statistical artifact caused by pooling different learner types. When each group was analyzed separately, a continuous, gradual decline — not a sudden one — better fit the data for monolinguals and early bilinguals.

And there are adults who do succeed. David Birdsong in 1992 tested English speakers who had emigrated to France after puberty [24]. Some were indistinguishable from native French speakers on grammaticality judgment tests. Bongaerts and colleagues in 1997 even showed that some late learners of Dutch had pronunciation that native-speaker judges could not distinguish from native speech [25]. These exceptions are rare — but they exist, and any theory claiming an "absolute biological ceiling" must account for them.

The Memory That Holds Language Together

Learning a new word seems simple. You hear the word, you understand its meaning, done. But at the brain level, something complex is happening. The sound pattern of the new word must be held in short-term memory long enough for a stable long-term representation to form. And the brain's tool for this is a component of working memory called the "phonological loop."

Alan Baddeley, Susan Gathercole, and Costanza Papagno published a paper in 1998 making a bold argument: the phonological loop — that part of working memory that maintains speech-based information through subvocal rehearsal — actually evolved as a "language learning device" [26]. Their evidence came from three sources. First: neurological patients with selective damage to the phonological loop nearly completely lose the ability to learn new words. Second: in children, the ability to repeat "nonwords" — meaningless strings like "blonterstaping" — is the strongest predictor of vocabulary size. Third: when the phonological loop is experimentally disrupted (for instance, by forcing subjects to count aloud simultaneously), new word learning is impaired.

Elise Service in Finland demonstrated this directly in second language learning in 1992 [27]. Finnish children beginning to learn English were tested. Their ability to repeat Finnish-like nonwords at the start of the school year predicted English vocabulary and grammar scores at the end of the year — even after controlling for general intelligence. And a meta-analysis by Linck and colleagues in 2014, analyzing 79 samples (3,707 participants), confirmed a significant correlation of ρ = 0.255 between working memory capacity and second-language proficiency [28].
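
To put the size of that correlation in perspective, the short calculation below squares it to get the share of variance the two measures have in common. It is a back-of-the-envelope sketch that treats the meta-analytic ρ as an ordinary correlation coefficient; the variable names are illustrative.

```python
# Correlation between working memory and L2 proficiency, as reported above.
rho = 0.255

# Squaring a correlation gives the proportion of variance shared
# by the two measures.
shared_variance = rho ** 2

print(f"{shared_variance:.3f}")  # 0.065
```

Read this way, working memory capacity accounts for roughly 6.5 percent of the variation in second-language proficiency: a reliable relationship, but a modest one.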

The message is simple but important: people with stronger working memory learn second languages more easily. Not because they are smarter — but because their brains are better at holding the sound patterns of unfamiliar words long enough for long-term representations to form.

The Bilingual Advantage Myth

If you have read any popular science magazine, you have probably heard this claim: bilinguals are smarter. They have better memory. Stronger attentional control. They even get Alzheimer's later. Ellen Bialystok at York University in Toronto spent over two decades collecting data to support these claims. In 1999, she showed that bilingual children outperformed monolinguals on tasks requiring high attentional control [29]. In 2007, examining 184 patients diagnosed with dementia, she showed that lifelong bilinguals received their diagnosis an average of 4.1 years later than monolinguals [30]. And in 2012, she published a wide-ranging review attributing the restructuring of cognitive and neural systems across the lifespan to bilingualism [31].

But then the storm came.

Kenneth Paap and Zachary Greenberg in 2013 tested a large, diverse sample of bilinguals and monolinguals on multiple executive function tasks: Simon, flanker, antisaccade, color-shape switching [32]. They found no significant bilingual advantage on any of them. They then reviewed 63 prior bilingual advantage studies on inhibitory control and concluded the evidence was "inconsistent" — positive results came primarily from studies with small samples.

Angela de Bruin and colleagues in 2015 delivered a devastating analysis of publication bias [33]. They tracked conference abstracts from 1999 to 2012 and compared which ones made it to published papers. Studies that confirmed a bilingual advantage were published 68 percent of the time. Studies that rejected it were published only 29 percent. The published literature was painting an exaggerated picture of reality.
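
A toy simulation makes the mechanism concrete. It is a sketch under loud assumptions, not a reanalysis of the conference-abstract data: the true effect is set to exactly zero, each simulated study's observed effect is drawn from a normal distribution with an arbitrary spread, and a study counts as "confirming" simply when its observed effect is positive. Only the two publication rates (68 and 29 percent) come from the text above; the function name and parameters are hypothetical.

```python
import random
from statistics import mean

def simulate_publication_bias(n_studies=20000, true_effect=0.0,
                              noise_sd=0.3, seed=1):
    """Return (mean of all observed effects, mean of published effects)."""
    rng = random.Random(seed)
    all_effects, published = [], []
    for _ in range(n_studies):
        # Each study observes the true effect plus sampling noise.
        observed = rng.gauss(true_effect, noise_sd)
        all_effects.append(observed)
        # Assumed rule: "confirming" studies are published 68% of the time,
        # all others 29% of the time.
        p_publish = 0.68 if observed > 0 else 0.29
        if rng.random() < p_publish:
            published.append(observed)
    return mean(all_effects), mean(published)

all_mean, published_mean = simulate_publication_bias()
print(f"all studies:      {all_mean:+.3f}")
print(f"published subset: {published_mean:+.3f}")
```

Even though nothing real is being measured, the average effect in the published subset lands clearly above zero (around 0.1 with these settings), while the average over all studies stays near zero. That gap is the kind of distortion a bias-corrected meta-analysis tries to remove.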

And then the meta-analyses arrived. Lehtonen and colleagues in 2018 conducted the largest meta-analysis with 891 effect sizes from 152 studies [34]. Before correcting for publication bias, there was a very small advantage. After correction: nothing. Lowe and colleagues in 2021, with 1,194 effect sizes from over 23,000 children, conducted a similar meta-analysis [35]. The raw effect: g = 0.08. After bias correction: g = −0.04. Effectively zero.

Is there no advantage at all? The picture is more nuanced than that. The dementia delay claim still partially stands. Brini and colleagues in 2020 conducted a meta-analysis showing that bilinguals report Alzheimer's symptoms 4.7 years later [36]. But — and this "but" is important — prospective studies showed that bilingualism does not reduce the risk of developing dementia. It only delays the appearance of symptoms. The interpretation: bilingualism builds "cognitive reserve" — like having a backup engine that activates when the main one fails. The brain pathology is the same, but the brain compensates better.

The honest conclusion: the general cognitive advantage of bilingualism — that grand claim that "bilinguals are smarter" — is not supported by the best available evidence. But bilingualism is not without effect either. The bilingual brain is genuinely different — its structure changes, its control network becomes more active, and it may be more resilient against neural deterioration. One simply should not expect that learning Spanish will make you better at math.

The Man Who Had Only One Syllable

So far we have looked at language from the outside — through the lenses of linguistics and psychology. Now it is time to go inside the brain. And the story begins in 1861.

Louis Victor Leborgne was born in 1809. His father was a schoolteacher. He himself was a hat-form maker. Around age thirty, he lost the ability to speak and was admitted to the Bicêtre Hospital in Paris. His comprehension was intact — he understood everything said to him. He was alert. He communicated through hand gestures. When asked how many years he had been in the hospital, he opened and closed his hand four times and then added one finger — twenty-one years. Correct. But from his mouth came only one syllable: "tan." He usually repeated it twice. "Tan, tan." The hospital staff nicknamed him "Monsieur Tan."

In April 1861, a gangrenous leg infection led to Leborgne's transfer to Paul Broca's surgical ward. Broca examined him. Six days later, Leborgne died. Broca performed the autopsy and brought the brain to the Société d'Anthropologie de Paris the very next day. He found a visible lesion in the posterior portion of the left inferior frontal gyrus, a region that would come to be called "Broca's area." Broca went on to collect twenty-five additional cases, all with left hemisphere lesions, and established the principle of "left hemisphere dominance for language."

But 146 years later, Nina Dronkers and colleagues retrieved Leborgne's preserved brain from the Musée Dupuytren in Paris and scanned it with high-resolution MRI [37]. The results were striking. The lesion was far more extensive than Broca had reported. There was massive subcortical damage: the insula, putamen, globus pallidus, and head of the caudate nucleus were all destroyed. More importantly, the superior longitudinal fasciculus, the major white matter tract connecting posterior and anterior language regions, was completely obliterated. The left hemisphere had shrunk and was up to 50 percent smaller than the right. The lesion that founded the science of neuropsychology was far more complex than its founder had realized.

Thirteen years after Broca, Carl Wernicke, a twenty-six-year-old German physician, described a different type of language impairment. He examined a patient who spoke fluently but whose speech was meaningless. The patient produced grammatically structured sentences, but with incorrect words substituted and virtually zero semantic content. His comprehension of others' speech was also impaired, although his hearing was intact. After the patient's death, a lesion was found in the left posterior superior temporal gyrus, near the auditory cortex. Wernicke argued that this region stored "sound images" of words and that without it, language comprehension was impossible. He even predicted that if the connection between his area and Broca's area were damaged, a specific syndrome would arise: the patient would be able to understand and speak but unable to repeat words. This prediction was later confirmed.

The "Broca = production, Wernicke = comprehension" model dominated the neuroscience of language for over a century. But modern science has shown that this model is oversimplified.

Gregory Hickok and David Poeppel introduced the "dual-stream model" in 2007 [38] — analogous to the "what" and "where" pathways in vision. Language processing has two parallel streams. The ventral stream passes through the temporal lobe and maps speech sounds to meaning — "what did they say?" The dorsal stream runs from the temporal-parietal junction to frontal articulatory-premotor networks and maps speech sounds to motor representations for production — "how do I say it?" The ventral stream is largely bilateral, which explains why unilateral left-hemisphere damage rarely destroys comprehension entirely. The dorsal stream is strongly left-hemisphere dominant.

Evelina Fedorenko and Idan Blank at MIT took the next step in 2020, arguing that "Broca's area is not a natural kind" [39]. What is called "Broca's area" is not a coherent functional unit. It contains at least two functionally distinct subregions: one that responds only to language and one that responds to any cognitively demanding task. The core language network — based on over 1,400 brain scans — includes distributed regions across the left inferior frontal gyrus, left lateral temporal cortex, and angular gyrus. All respond with high selectivity to linguistic stimuli and do not respond to mathematics, music, logic, or social cognition [40].

Two Languages, One Brain — or Two?

If the brain has only one language network, what happens when someone knows two languages? Is the second language processed in the same location, or does the brain assign it a separate "room"?

In 1997, Kim, Relkin, Lee, and Hirsch used fMRI — functional magnetic resonance imaging — to ask this question directly [41]. Twelve subjects: six early bilinguals (who had learned both languages before age five) and six late bilinguals (who had learned their second language after puberty). They were asked to silently narrate the events of the previous day in each language. In Broca's area: late bilinguals showed spatially separated activations for their first and second languages. Early bilinguals showed overlapping activations. In Wernicke's area: no difference — both groups showed complete overlap.

But Perani and colleagues in 1998 counterattacked using PET — positron emission tomography [42]. They tested three groups: low-proficiency late-acquisition bilinguals, high-proficiency late-acquisition bilinguals, and high-proficiency early-acquisition bilinguals. The key result: cortical differences between the two languages that were observed in the low-proficiency group disappeared in both high-proficiency groups. The conclusion: "Attained proficiency is more important than age of acquisition." It was not about when you learned your second language — it was about how well you learned it. A bilingual who learned their second language after puberty but to native-like proficiency shows brain patterns similar to those of a native bilingual.

The Gene That Is Not "the Language Gene"

In 1990, a schoolteacher named Elizabeth Auger at Lionel Primary School in Brentford, west London, noticed that several children from one family had a peculiar problem. They could not coordinate their facial muscles to produce speech. Their faces sometimes appeared frozen. Their speech was full of pauses and unintelligible sounds. Auger realized the pattern extended across generations. The grandmother, four of her five children, and eleven of her twenty-three grandchildren were affected [43].

The KE family — as it became known in the scientific literature — became one of the most important case studies in the genetics of language. Vargha-Khadem and colleagues in 1995 compared affected and unaffected family members [44]. Affected members had severe orofacial dyspraxia — difficulty planning and executing voluntary movements of the lips, tongue, and face. Their problem was not limited to grammar. Nonverbal IQ was also lower in affected members, though still within the normal range. This finding refuted the "grammar gene" hypothesis proposed by linguist Myrna Gopnik.

In 2001, Lai and colleagues at Oxford finally identified the responsible gene: FOXP2 [45]. A single point mutation in the forkhead domain — the part of the protein that binds to DNA — was sufficient to cause the entire disorder. Media headlines proclaimed: "Language gene discovered." But that headline was wrong in almost every respect.

Why is calling FOXP2 "the language gene" misleading? Fisher and Scharff in 2009 provided the best answer [46]. First: FOXP2 is a transcription factor — its job is to regulate hundreds of other genes during brain development. It is an upstream regulator, not a gene "for" language. Second: it is expressed in the basal ganglia, cerebellum, cortex, and thalamus — motor circuits, not "language centers." Third: it is evolutionarily ancient and deeply conserved. In songbirds, FoxP2 is expressed in Area X of the basal ganglia (critical for vocal learning); knockdown disrupts song learning. In mice, Foxp2 knockout causes severe motor impairments and reduced ultrasonic vocalizations. It is even found in crocodiles.

Enard and colleagues in 2002 showed that the human version of FOXP2 differs from the chimpanzee version by only two amino acids [47]. The number is small, but it stands out in a protein that had otherwise changed very little over roughly 75 million years of mammalian evolution. The pattern of nucleotide polymorphism strongly suggested positive selection during recent human evolution. And in 2007, Krause and colleagues showed that Neanderthals shared the same two human-specific amino acid changes [48], meaning the positive selection occurred at least 300,000 to 400,000 years ago, in the common ancestor of modern humans and Neanderthals.

Language is not the product of one gene. It is the product of a network of hundreds of genes interacting with experience. FOXP2 was simply the first window that opened onto this network.

The Brain That Grows with Language Learning

Does language learning actually change the brain? Not just its function — its structure?

Mechelli and colleagues in 2004 provided the first direct evidence [49]. Using voxel-based morphometry, they compared the brains of bilinguals and monolinguals. Bilinguals had increased grey matter density in the left inferior parietal cortex — a region involved in vocabulary learning. And the degree of this change correlated with two factors: proficiency level in the second language and age of acquisition. The more proficient and the earlier the learning, the greater the density.

But perhaps the most compelling study came from Sweden. Johan Mårtensson and colleagues in 2012 examined young Swedish conscripts at the Swedish Armed Forces Interpreter Academy in Uppsala [2]. This academy runs one of the most intensive language training programs in the world. Selected soldiers — screened for linguistic aptitude — start from absolute zero in Arabic, Russian, or Dari and must reach functional proficiency within three months. They learn 300 to 500 new words per week. From morning to night, nothing but classes and study.

After just three months, structural MRI revealed measurable changes. The hippocampus — that seahorse-shaped structure serving as the brain's memory factory — had grown larger. Cortical thickness had increased in the left middle frontal gyrus, inferior frontal gyrus, and superior temporal gyrus. Soldiers who achieved higher proficiency showed greater hippocampal growth. Those who struggled more showed greater grey matter increases in the motor region — perhaps reflecting compensatory articulatory strategies.

And the changes were not limited to grey matter. Schlegel and colleagues in 2012 scanned Dartmouth students learning Mandarin over nine months with monthly DTI — diffusion tensor imaging [50]. They found increased fractional anisotropy and decreased radial diffusivity in frontal-lobe white matter tracts — indicative of increased myelination. Five white matter tracts showing learning-induced changes all terminated in the caudate nucleus.
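For readers unfamiliar with the two DTI measures named above, a minimal sketch may help. It uses the standard textbook definitions of fractional anisotropy and radial diffusivity, both computed from the three eigenvalues of the diffusion tensor. The function names are hypothetical and the eigenvalues are made-up illustrative numbers, not data from Schlegel and colleagues.

```python
import math

def fractional_anisotropy(l1, l2, l3):
    """Standard FA formula from the three diffusion-tensor eigenvalues.

    Returns 0 for perfectly isotropic diffusion and approaches 1 when
    diffusion happens almost entirely along one axis.
    """
    num = (l1 - l2) ** 2 + (l2 - l3) ** 2 + (l3 - l1) ** 2
    den = l1 ** 2 + l2 ** 2 + l3 ** 2
    return math.sqrt(0.5 * num / den)

def radial_diffusivity(l1, l2, l3):
    """Mean diffusivity perpendicular to the main fiber direction (l1)."""
    return (l2 + l3) / 2

# Illustrative eigenvalues (arbitrary units), NOT measured data.
before = (1.6, 0.5, 0.5)
after = (1.6, 0.4, 0.4)  # same diffusion along the fiber, less across it

fa_before = fractional_anisotropy(*before)
fa_after = fractional_anisotropy(*after)
rd_before = radial_diffusivity(*before)
rd_after = radial_diffusivity(*after)

print(f"FA: {fa_before:.2f} -> {fa_after:.2f}")
print(f"RD: {rd_before:.2f} -> {rd_after:.2f}")
```

In this toy case, restricting diffusion across the fiber lowers radial diffusivity and raises fractional anisotropy at the same time, which is the combination the study reads as a sign of increased myelination.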

And Huber and colleagues in 2023 used quantitative MRI to show that the frequency of "parent-infant conversational turns" at eighteen months — not total speech volume but interactive back-and-forth — predicted myelin density in the left arcuate fasciculus at age two [51]. Conversation — not one-directional sound — physically builds the language pathways in an infant's brain. Precisely the same message Patricia Kuhl's 2003 experiment had delivered, but this time at the level of white matter and myelin.

How the Brain Breaks Continuous Speech Apart

There is a fundamental computational problem the brain must solve every time someone speaks. In written language, spaces mark word boundaries. In natural speech, no such spaces exist. The acoustic signal is a continuous stream of sounds deeply interleaved with each other. Adjacent phonemes simultaneously influence each other's sounds — a phenomenon called coarticulation. The same phoneme "d" in "dark," "door," and "desk" produces different acoustic signals.

Anne-Lise Giraud and David Poeppel in 2012 proposed a model that explains how the brain solves this problem [52]. The answer: cortical oscillations at different temporal scales. Theta oscillations — at approximately 4 to 8 Hz — match the syllabic rate (each syllable lasts about 150 to 300 milliseconds) and segment speech into syllable-sized chunks. Gamma oscillations — 25 to 40 Hz — operate at the phonemic timescale (20 to 50 milliseconds) and extract finer-grained phonemic information. Delta oscillations — 1 to 3 Hz — track slower prosodic and intonational contours.

In other words: the brain does not search for pauses. It uses its own internal rhythms as a temporal scaffold and constructs a hierarchical, discrete neural code from a continuous signal.
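The link between each frequency band and its timescale is simple arithmetic: one cycle of an oscillation at f Hz lasts 1000 / f milliseconds. The sketch below applies that conversion to the band edges quoted above. The names `BANDS_HZ`, `cycle_length_ms`, and `band_window_ms` are hypothetical, chosen only for illustration.

```python
# Frequency bands as quoted in the text, in Hz.
BANDS_HZ = {
    "delta": (1.0, 3.0),
    "theta": (4.0, 8.0),
    "gamma": (25.0, 40.0),
}

def cycle_length_ms(freq_hz):
    """Duration of one oscillation cycle in milliseconds."""
    return 1000.0 / freq_hz

def band_window_ms(low_hz, high_hz):
    """(shortest, longest) cycle length in ms for a frequency band."""
    return cycle_length_ms(high_hz), cycle_length_ms(low_hz)

windows = {name: band_window_ms(low, high) for name, (low, high) in BANDS_HZ.items()}

for name, (shortest, longest) in windows.items():
    print(f"{name}: {shortest:.0f} to {longest:.0f} ms per cycle")
```

The theta window (125 to 250 ms) overlaps the quoted syllable durations, and the gamma window (25 to 40 ms) sits inside the quoted phonemic range, so the match between bands and linguistic units is approximate rather than exact.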

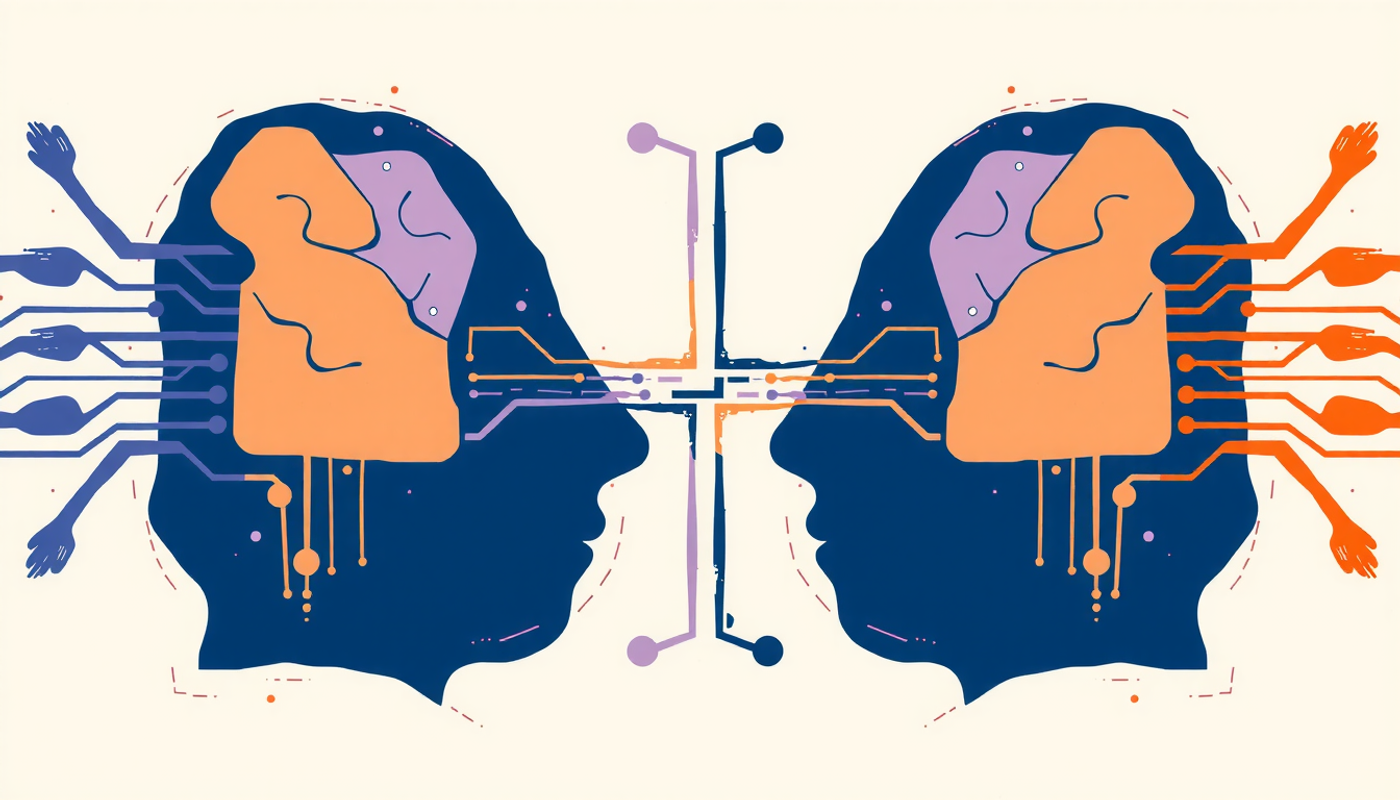

The Comparison No One Expected: Brains versus Artificial Intelligence

In recent years, something unexpected has happened. Large language models — systems like GPT and Claude — have become new tools for understanding the human brain. Not because they work like the brain, but because comparing their predictions to brain activity has revealed surprising insights.

Goldstein and colleagues in 2022 used electrocorticographic recordings — electrodes placed directly on the brain surface of epilepsy patients — and compared brain activity during podcast listening to the predictions of GPT-2 [53]. They found three shared computational principles: both the brain and the language model continuously predict the next word (before hearing it), compare predictions to actual input to compute "surprise," and use contextual embeddings for both tasks. Predictive brain signals were detectable hundreds of milliseconds before word onset.
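The first two of these principles, next-word prediction and "surprise," can be made concrete with a toy example. The sketch below is not GPT-2 and not the authors' analysis: it is a bigram counter over an invented three-sentence corpus, and it has no contextual embeddings at all. Surprise is measured as surprisal, the negative base-2 logarithm of the probability the model assigned to the word that actually arrived. All names here are hypothetical.

```python
import math
from collections import Counter, defaultdict

# Invented toy corpus, used only for illustration.
corpus = ("the dog chased the cat . the cat chased the mouse . "
          "the dog barked .").split()

# Count which words follow which (a bigram model).
follow = defaultdict(Counter)
for prev, nxt in zip(corpus, corpus[1:]):
    follow[prev][nxt] += 1

def next_word_probs(prev):
    """Estimated probability of each next word given the previous word."""
    counts = follow.get(prev, Counter())
    total = sum(counts.values())
    return {word: count / total for word, count in counts.items()}

def predict(prev):
    """Most probable next word; assumes prev occurred in the corpus."""
    probs = next_word_probs(prev)
    return max(probs, key=probs.get)

def surprisal_bits(prev, actual):
    """Surprise at the actual word: -log2 of its predicted probability."""
    p = next_word_probs(prev).get(actual, 0.0)
    return math.inf if p == 0.0 else -math.log2(p)

print(predict("chased"))
print(round(surprisal_bits("the", "dog"), 2))
print(round(surprisal_bits("the", "mouse"), 2))
```

A word the model expected produces low surprisal, and an unexpected one produces high surprisal. Studies of this kind compare such model-derived quantities, computed with a far richer model, to neural signals recorded around each word.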

Jamali and colleagues in 2024 took a bigger step. Using Neuropixels probes (ultra-fine electrodes that record the activity of individual neurons), they recorded from the left prefrontal cortex during sentence and story listening [54]. It was the first time semantic encoding at the single-neuron level had been observed during natural language comprehension. They found neurons selectively tuned to specific semantic domains, such as objects, actions, and emotions, whose responses were dynamic, reflecting word meanings in context rather than as fixed memory representations.

But Fedorenko, Piantadosi, and Gibson in 2024 offered an important reminder [55]: language and thought are separate things. The brain's language network does not activate during nonlinguistic tasks such as mathematics, logic, social cognition, or music. And aphasic patients, those who have lost language, retain their sophisticated cognitive abilities. Language is primarily a tool for communication, not the medium of thought.

What Actually Matters? Dopamine, Fear, and the "Ideal Self"

Cognitive science has shown that language learning is not merely a matter of brain mechanisms. The emotional state of the learner — motivation, anxiety, self-image — has a direct impact on brain performance.

Robert Gardner and Wallace Lambert in 1972 introduced a famous distinction between "integrative motivation" — the desire to genuinely connect with the second-language community — and "instrumental motivation" — practical goals like a better job or higher grades. Their research on English-speaking Canadians learning French showed that integrative motivation was a stronger predictor of success.

But Zoltán Dörnyei in 2009 rewrote this model [56]. His "L2 Motivational Self System" has three components. The first is the "ideal L2 self": the image you hold of yourself as a proficient speaker of the second language, for instance, visualizing yourself delivering a fluent presentation at an international conference. The second is the "ought-to L2 self": what others expect of you, like parental pressure or job requirements. The third is the "L2 learning experience": attitudes toward the immediate learning environment. The strongest predictor of effort is the ideal self. The gap between "who I am" and "who I want to become" generates motivational energy.

On the opposite end, there is language anxiety. Horwitz, Horwitz, and Cope in 1986 showed that foreign language classroom anxiety is a distinct form of anxiety — not merely test anxiety or communication apprehension [57]. It has three components: fear of speaking in the second language, anxiety about being evaluated, and worry about negative judgment. The cognitive mechanism is clear: anxiety consumes working memory resources. The anxious learner's mind, instead of processing language, is occupied with self-monitoring, predicting failure, and assessing threat. Resources that should be devoted to phonetic and grammatical processing are spent on worry.

Does the Critical Period Really Exist — or Is It Just a Spectrum?

It is time for the most honest section of this article. All the evidence we have seen — Genie, cochlear implants, the 670,000-person study, nine-month-old infants — points in one direction: age matters. But how much it matters and why it matters remain contested.

One side of the debate — the traditional view — says a biological window exists. After puberty, the brain loses its neural plasticity, myelination is completed, and language circuits become "hardwired." Larsen and colleagues in 2023 proposed a framework showing that association cortices — including language areas — have later critical periods than sensory areas, coinciding with adolescence [58].

The other side says "critical period" is a vague, oversimplified concept. The evidence shows that different ages carry different sensitivities for different aspects of language. Phonetics has the earliest window — phonetic perception begins to change before age one. Grammar has a longer window — perhaps extending into late adolescence. Vocabulary has a very long window and may never truly "close." Adults like those studied by Birdsong and Bongaerts show that even native-like pronunciation after puberty is possible — rare, but possible.

And there is the social interpretation: perhaps what looks like a "critical period" is really a reflection of social changes in adolescence — leaving home, academic specialization, loss of immersive environments. Hartshorne himself acknowledged he could not separate biology from sociology.

The most likely answer? Both. There are probably real biological changes — the adolescent brain differs from the adult brain in synaptic plasticity. But social, cognitive, and motivational factors amplify or modulate those changes. The "critical period" is not a wall. It is more like a steep slope — the higher you climb, the harder it gets, but the path is not blocked.

Conclusion

The human brain is the only system known in nature that can build language. Not just learn it — build it. The deaf children of Nicaragua proved this. Without any complete linguistic input, without any adult model, without any formal instruction, they constructed grammar. Not just words — structure.

But this same brain has its own limitations. Windows open and, if they do not fully close, narrow. The infant who at six months discriminates all the world's sounds is tuned mainly to the sounds of their native language by twelve months. The child discovered at thirteen never fully acquired grammar. And the adult who begins a second language after eighteen must work far harder. The task is not impossible, but it is more difficult.

Modern science reveals a far more complex picture of this process. Language is not two brain areas. It is a distributed network. There is no language gene. There is a network of hundreds of genes. The cognitive advantage of bilingualism is not a simple truth. It is an open and complex scientific debate. And artificial intelligence that produces language does not work like the human brain — but comparing the two has opened new windows into understanding both.

One thing is clear: the brain needs more than acoustic data to learn language. It needs humans. It needs eye contact, pointing, social exchange, that delicate dance between two living minds. Television cannot replace a mother. An app cannot replace a teacher. And no algorithm can replace real conversation. The brain is born social. And language, more than anything else, is a product of this sociality.

Frequently Asked Questions

How long does the critical period for language learning actually last?

Research suggests different language skills have different sensitive periods. Phonetic perception begins narrowing before age one. Grammar learning ability remains strong until approximately seventeen to eighteen years of age, according to a study of nearly 670,000 English speakers. Vocabulary learning appears to have no strict cutoff and can continue throughout life.

Can adults really achieve native-like fluency in a second language?

Yes, but it is rare. Studies have documented adult learners who pass as native speakers even in pronunciation, which is considered the hardest domain. Key factors include high language aptitude, extensive immersion, strong motivation, and similarity between the first and second languages.

Is it true that bilingual people are smarter than monolinguals?

Large-scale meta-analyses published between 2018 and 2021 found no reliable evidence for a general bilingual cognitive advantage in executive function. However, bilingualism may build cognitive reserve that delays the appearance of dementia symptoms by approximately four to five years, even though it does not prevent the underlying brain disease.

Why can babies learn language from a live person but not from a screen?

A landmark 2003 experiment showed that nine-month-old American infants maintained Mandarin Chinese sound discrimination after twelve sessions with a live Mandarin speaker but showed zero learning from identical video or audio recordings. The brain appears to require social interaction cues, such as eye contact, turn-taking, and shared attention, to activate language learning mechanisms.

What does the FOXP2 gene actually do?

FOXP2 is a transcription factor that regulates hundreds of other genes during brain development. It is expressed in motor circuits, including the basal ganglia and cerebellum, not in classical language areas. Mutations cause a broad motor speech disorder, not a specific grammar deficit. Calling it "the language gene" is misleading because language involves networks of hundreds of genes interacting with experience.