INTRODUCTION

You sit in a lecture hall. Your eyes track the slides. Your ears catch the professor's voice. Your hand scribbles notes. But here is the question nobody stops to ask. What is actually happening inside your head right now? How does light hitting your retina and sound vibrating your eardrum turn into something you can recall during an exam three weeks later? The answer involves one of the most extraordinary processes in biology. And honestly? Most students go through years of education without ever understanding it.

Here is what we know. Your brain is not a hard drive. It does not record experiences the way a camera records video. Instead, it transforms sensory signals through a cascade of electrical and chemical events that span molecules, synapses, circuits, and entire brain systems. Research on the forgetting curve shows that without reinforcement, most people lose 50 to 70 percent of new information within 24 hours [1]. But the flip side is just as remarkable. When you understand how learning actually works at the neural level, you can work with your brain instead of against it.

This article traces the complete journey of information through your brain, from the moment your senses pick up a signal, through the encoding and storage of memories, all the way to the moment you retrieve that information days or years later. Every claim is grounded in peer-reviewed neuroscience research, with particular attention to breakthroughs from 2024 and 2025 that have reshaped what scientists thought they knew about human learning.

How Does Information Enter Your Brain?

All learning starts with your senses. Every piece of knowledge you have ever acquired began as a physical signal from the outside world — light, sound, pressure, chemicals. Your sensory organs are biological transducers that convert physical energy into electrical impulses.

But your senses do not send raw data directly to your cortex. Almost everything passes through the thalamus, a structure deep in the center of your brain. Sherman and Guillery [2] established that only about 5 percent of the input to thalamic relay nuclei comes from the sensory periphery — the other 95 percent is modulatory feedback from cortex, brainstem, and thalamic reticular nucleus. A 2025 study used focused ultrasound to demonstrate a causal role for the anterior thalamus in shaping conscious visual experience [3].

The foundational work on visual cortex organization came from Hubel and Wiesel [4], who discovered that neurons respond to specific edge orientations — earning them the Nobel Prize. Retinal ganglion cell axons form the optic nerve and project to the lateral geniculate nucleus (LGN) of the thalamus, which contains magnocellular layers (motion, spatial information) and parvocellular layers (color, fine detail). From the LGN, signals travel to primary visual cortex. Felleman and Van Essen [5] mapped the hierarchy of over 30 distinct visual areas and 300+ connections. The dorsal stream processes spatial relationships and guides action, while the ventral stream identifies objects — the classic "where/how" versus "what" dissociation described by Goodale and Milner [6].

Your auditory system follows a parallel principle. Sound is transduced by inner hair cells in the cochlea and ascends through the cochlear nucleus, superior olivary complex, inferior colliculus, and medial geniculate nucleus before reaching primary auditory cortex. Kaas and Hackett [7] showed that primate auditory cortex contains multiple tonotopic fields organized in a core-belt-parabelt hierarchy.

Touch begins with four mechanoreceptor types in your skin. Mountcastle [8] established the columnar organization of somatosensory cortex, and Johansson and Flanagan [9] detailed how receptor signals integrate for precise hand manipulation. Olfaction uniquely bypasses the thalamus, projecting directly to piriform cortex and amygdala before secondarily reaching the mediodorsal thalamic nucleus [10].

The thalamus does far more than relay signals. Sherman [11] distinguished first-order nuclei (receiving peripheral input) from higher-order nuclei mediating cortical communication. Halassa and Kastner [12] established the thalamus as a hub for distributed cognitive control. Scott et al. [13] proposed that thalamocortical architectures support flexible cognition by dynamically routing information based on task demands.

What Happens When Your Senses Work Together?

In real life, you rarely experience a single sense in isolation. The classic demonstration is the McGurk effect — when visual lip movements for one syllable are paired with audio of another, people perceive a third syllable [14]. The brain actively merges sensory streams.

A 2025 study using stereoelectroencephalography in 42 patients found audiovisual speech processing in the superior temporal sulcus produces responses 40 percent faster than auditory input alone [15]. Stein and Stanford [16] outlined three rules governing multisensory integration: the spatial rule, the temporal rule, and the inverse effectiveness principle. Stein, Stanford, and Rowland [17] traced how these principles develop through experience-dependent maturation of individual neurons.

The brain uses Bayesian causal inference to combine senses. Körding et al. [18] demonstrated this computationally, and Shams and Beierholm [19] formalized the framework. Rohe and Noppeney [20] showed using fMRI that cortical hierarchies implement Bayesian integration, with parietal cortex weighting signals by reliability. Wallace and Stevenson [21] identified the temporal binding window within which cross-modal stimuli are bound together.
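The reliability weighting described above can be made concrete with a small sketch. It shows only the fusion step, where two cues are assumed to share a single cause and each is weighted by its inverse variance. Full causal inference also estimates whether the cues share a cause at all, which is omitted here. The function name and the example numbers are hypothetical.

```python
def combine_cues(est_a, var_a, est_v, var_v):
    """Fuse two noisy estimates, weighting each by its reliability (1 / variance)."""
    rel_a = 1.0 / var_a
    rel_v = 1.0 / var_v
    w_a = rel_a / (rel_a + rel_v)      # weight on the auditory cue
    w_v = 1.0 - w_a                    # weight on the visual cue
    fused = w_a * est_a + w_v * est_v
    fused_var = 1.0 / (rel_a + rel_v)  # fused estimate is more reliable than either cue
    return fused, fused_var, w_a


# Hypothetical numbers: a sound localized at 10 degrees (variance 16)
# and a flash localized at 2 degrees (variance 4).
fused, fused_var, w_a = combine_cues(10.0, 16.0, 2.0, 4.0)
print(fused, fused_var, w_a)
```

In this example the visual cue is four times more reliable, so the fused estimate lands much closer to the flash than to the sound, and its variance is lower than that of either cue alone.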

Cross-modal plasticity demonstrates dramatic sensory reorganization. In congenitally blind individuals, visual cortex is repurposed for Braille reading and auditory processing [22]. Park and Fine [23] proposed that cross-modal plasticity and ordinary skill learning share fundamental mechanisms. A 2025 paper in Trends in Cognitive Sciences challenged predictive coding orthodoxy, showing that cortical feedback enhances rather than suppresses predicted representations — the BELIEF framework [24].

Why Does Attention Decide What You Remember?

You can stare at a textbook for an hour and remember nothing, or read a single page with full focus and recall it days later. The difference is attention. The modern understanding began with Posner and Petersen [25], who proposed interacting alerting, orienting, and executive networks. Corbetta and Shulman [26] refined this into two frontoparietal systems: the dorsal attention network for voluntary focus and the ventral attention network for detecting unexpected stimuli.

At the cellular level, Desimone and Duncan [27] proposed the biased competition model — multiple stimuli compete for neural representation, and attention biases competition toward task-relevant objects. Moran and Desimone [28] provided the first neural evidence. Buschman and Miller [29] showed that top-down signals originate in prefrontal cortex, while bottom-up signals flow forward from sensory areas.

Alpha oscillations (8–13 Hz) gate attention. Klimesch [30] argued they control access to stored information, and Jensen and Mazaheri [31] formalized the gating-by-inhibition hypothesis. A 2024 PNAS study revealed precise spatial dissociation in alpha synchronization patterns [32]. Fries [33] proposed the broader communication-through-coherence framework. Lavie [34] introduced perceptual load theory, explaining when distractors interfere and when they do not. Chun, Golomb, and Turk-Browne [35] expanded this to a taxonomy of external and internal attention.

The neurotransmitter acetylcholine is the chemical backbone of attentional encoding. Lohani et al. [36] showed that basal forebrain cholinergic projections have far more topographic specificity than previously assumed, switching cortex into an encoding mode.

A methodological note is important here. Much of what we know about human attention networks comes from fMRI. But as Logothetis [37] cautioned, fMRI measures a sluggish hemodynamic response that unfolds over roughly 5 to 6 seconds, while attentional selection operates on millisecond timescales. And Poldrack [38] demonstrated the reverse inference problem: inferring a cognitive process from activation of a particular brain region is not deductively valid, since most regions participate in multiple processes. These limitations mean that fMRI provides a spatially detailed but temporally blurred and inferentially constrained picture of attention.
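The reverse inference problem is easiest to see as a Bayes' rule calculation. The sketch below is illustrative only: the probabilities are made-up numbers, not values from any study, and the function name is hypothetical. It shows how the same activation supports a strong inference when a region is selective and a weak one when the region responds to many processes.

```python
def posterior_process_given_activation(p_act_given_proc, p_act_given_not, prior_proc):
    """P(process | activation) from Bayes' rule."""
    numerator = p_act_given_proc * prior_proc
    evidence = numerator + p_act_given_not * (1.0 - prior_proc)
    return numerator / evidence


# Hypothetical region that rarely activates without the process of interest.
selective = posterior_process_given_activation(0.8, 0.1, 0.5)

# Hypothetical region that also activates for many other processes.
unselective = posterior_process_given_activation(0.8, 0.6, 0.5)

print(round(selective, 3), round(unselective, 3))
```

With these assumed numbers, the selective region raises the probability of the process from 0.5 to about 0.89, while the unselective region raises it only to about 0.57.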

How Does Your Brain Encode New Memories?

Working memory is your brain's mental whiteboard. It holds roughly 4 items [39] in an active state for seconds to minutes. Baddeley [40] introduced the episodic buffer joining the phonological loop, visuospatial sketchpad, and central executive. In a detailed 2012 review [41], he reflected on decades of debate about how these components interact.

Three competing models frame working memory differently. Baddeley's multicomponent model posits separate modality-specific stores. Cowan's embedded-processes model [39] reconceptualizes working memory as the activated portion of long-term memory, with a capacity-limited focus of attention embedded within it. Oberauer [42] extends this with three levels — activated long-term memory, a region of direct access, and a single-item focus of attention. Engle [43] argued that working memory capacity fundamentally reflects executive attention control rather than storage capacity. These models make different predictions about switching costs, binding phenomena, and capacity limits — and the debate remains active.

A 2025 study by Hallenbeck et al. [44] used TMS to show that prefrontal cortex controls resource allocation in working memory rather than storing information itself. Complementary work in Science Advances [45] decoded working memory representations directly from visual cortex.

For information to persist, it must be encoded by the hippocampus. The foundational case is patient H.M. — Scoville and Milner [46] showed that bilateral hippocampal removal produces devastating amnesia for new events. Squire [47] later synthesized 40 years of research on the medial temporal lobe memory system. Davachi [48] showed that perirhinal cortex processes "what" while parahippocampal cortex processes "where."

Specialized cell types scaffold new memories. O'Keefe and Dostrovsky [49] discovered place cells in the hippocampus, and Hafting et al. [50] discovered grid cells in the entorhinal cortex; these two discoveries were recognized with the 2014 Nobel Prize. Theta oscillations organize encoding, with Buzsáki [51] establishing their role and Hasselmo et al. [52] proposing that encoding and retrieval occur at opposite phases of the theta cycle. The dentate gyrus performs pattern separation: Yassa and Stark [53] reviewed the evidence, and Leutgeb et al. [54] demonstrated it directly. But measuring pattern separation in humans is challenging. The behavioral Mnemonic Similarity Task [55] taxes pattern separation but cannot isolate it from other processes such as encoding quality and retrieval decisions.

Yates et al. [56] made a stunning 2025 discovery — using fMRI in awake one-year-olds (N=93), they identified a hippocampal subsequent memory effect, overturning the assumption that infantile amnesia results from immature encoding. A 2025 PNAS study [57] showed pattern separation and pattern completion mature at different rates in childhood.

Encoding quality depends on processing depth. Craik and Lockhart [58] showed semantic processing produces stronger traces. Paller and Wagner [59] established the subsequent memory paradigm, and Kim [60] meta-analyzed 74 fMRI studies identifying five regions predicting encoding success. Vaz et al. [61] found that hippocampal ripples during encoding predict memory clustering.

What Happens at the Synapse When You Learn Something?

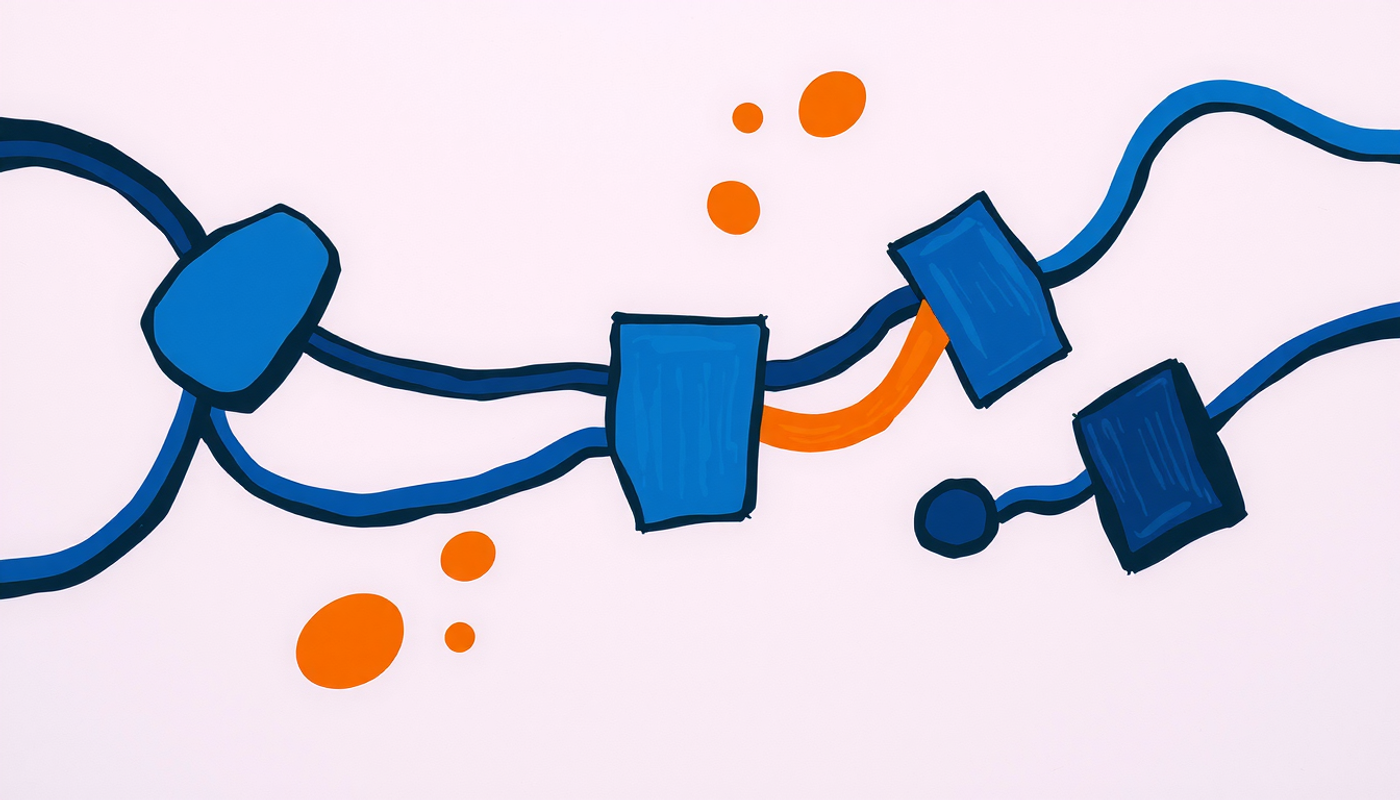

Hebb [62] proposed that neurons firing together strengthen their connections. Bliss and Lømo [63] demonstrated long-term potentiation experimentally. Collingridge et al. [64] showed blocking NMDA receptors prevents LTP induction. Morris et al. [65] demonstrated the behavioral importance — NMDA blockade impairs spatial learning.
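Hebb's idea is often written as a one-line update rule: the change in a connection is proportional to the product of presynaptic and postsynaptic activity. The sketch below is a minimal illustration of that rule, not a model of LTP itself. The learning rate and activity values are assumptions chosen for readability.

```python
def hebbian_update(weight, pre, post, learning_rate=0.1):
    """Strengthen the connection only when both neurons are active together."""
    return weight + learning_rate * pre * post


w = 0.5
# Each pair is (presynaptic activity, postsynaptic activity), 1 = fired, 0 = silent.
for pre, post in [(1, 1), (1, 0), (0, 1), (1, 1)]:
    w = hebbian_update(w, pre, post)

print(w)
```

Only the two trials where both neurons fire change the weight. Note that this plain form can only strengthen connections, so fuller models pair it with some form of weakening or normalization.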

The NMDA receptor is a coincidence detector. Lisman, Yasuda, and Raghavachari [66] reviewed how calcium dynamics determine plasticity direction — large rapid influx drives LTP via CaMKII, while moderate prolonged influx drives LTD via calcineurin. Bhatt et al. [67] revealed CaMKII serves a structural role through liquid-liquid phase separation. Malenka and Bear [68] cataloged the many forms of synaptic plasticity.
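A toy sketch can tie the two ideas in this paragraph together: calcium enters only when glutamate binding and postsynaptic depolarization coincide, and the size of the calcium signal then sets the direction of plasticity. This is a deliberate simplification. It uses calcium amplitude alone and ignores the time course that the text also mentions. The thresholds, units, and function names are hypothetical.

```python
def nmda_calcium(glutamate_bound, depolarization):
    """Toy coincidence detector: calcium flows only if both signals are present.

    depolarization is a value in [0, 1] in arbitrary, assumed units.
    """
    return depolarization if glutamate_bound else 0.0


def plasticity_direction(calcium, ltd_threshold=0.3, ltp_threshold=0.7):
    """Map calcium level to an outcome using two assumed thresholds."""
    if calcium >= ltp_threshold:
        return "LTP"   # large influx: potentiation
    if calcium >= ltd_threshold:
        return "LTD"   # moderate influx: depression
    return "none"      # too little calcium to change the synapse


for glu, dep in [(True, 0.9), (True, 0.5), (True, 0.1), (False, 0.9)]:
    print(glu, dep, plasticity_direction(nmda_calcium(glu, dep)))
```

The last case is the coincidence-detection point: strong depolarization without bound glutamate produces no calcium signal and therefore no plasticity in this sketch.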

LTP expression involves AMPA receptor trafficking. Hayashi et al. [69] showed LTP drives GluR1-containing AMPARs into synapses. Huganir and Nicoll [70] reviewed 25 years of AMPAR research. Matsuzaki et al. [71] showed LTP causes individual dendritic spines to enlarge dramatically. Citri and Malenka [72] provided a broad overview. Nabavi et al. [73] achieved the striking demonstration of engineering a memory — inactivating it with LTD and reactivating it with LTP at the same synapse.

For lasting memories, gene expression is required. Silva et al. [74] reviewed CREB's central role. Kandel [75] synthesized decades of work in his Nobel lecture. BDNF is essential for LTP maintenance beyond 3 hours [76]. Arc/Arg3.1 serves dual roles in receptor trafficking and structural plasticity [77]. The synaptic tagging and capture hypothesis from Frey and Morris [78, 79] explains how weak stimulation creates a molecular tag that captures proteins triggered by strong stimulation elsewhere. Takeuchi, Duszkiewicz, and Morris [80] updated the synaptic plasticity and memory hypothesis, and Josselyn and Frankland [81] reviewed memory allocation mechanisms.

How Do Memories Become Permanent?

Consolidation operates at two scales. Squire and Alvarez [82] proposed the standard model — hippocampus gradually transfers memories to neocortex. Nadel and Moscovitch [83] challenged this with multiple trace theory — episodic memories remain permanently hippocampus-dependent. Dudai [84] distinguished synaptic from systems consolidation. Frankland and Bontempi [85] reviewed how remote memories depend increasingly on prefrontal cortex.

Three competing frameworks generate different predictions. Standard consolidation predicts hippocampal independence for all declarative memories. Multiple trace theory predicts permanent hippocampal dependence for vivid episodic details. And schema theory offers a critical modification — Tse et al. [86] showed in Science that when new information fits pre-existing knowledge schemas, consolidation accelerates dramatically. Van Kesteren et al. [87] proposed the SLIMM framework, arguing that medial prefrontal cortex detects congruency with existing schemas and facilitates rapid cortical encoding. McClelland later showed through simulations that schema-consistent information can be rapidly integrated without catastrophic interference — only schema-inconsistent information requires slow interleaving. This reconciles schema findings with the complementary learning systems theory [88, 89].

Sleep is the engine of consolidation. Stickgold [90] established that sleep-dependent consolidation is an active biological process. Marshall et al. [91] showed boosting slow oscillations enhances memory. Girardeau et al. [92] proved sharp-wave ripples are necessary — suppressing them impairs spatial memory. Yang et al. [93] discovered that waking ripples tag experiences for subsequent sleep replay. Shin and Jadhav [94] found prefrontal cortex generates independent ripples that suppress hippocampal activity, providing top-down editorial control.

Diekelmann and Born [95] synthesized the dual-process model — NREM consolidates declarative memories while REM benefits procedural and emotional memories. Rasch and Born [96] provided the definitive review. Tononi and Cirelli [97] proposed that sleep globally downscales synaptic strength, and de Vivo et al. [98] provided ultrastructural evidence showing synaptic interfaces are larger after wake than sleep. Buzsáki [99] published an extensive review of sharp-wave ripples as cognitive biomarkers.

Wilson and McNaughton [100] first demonstrated hippocampal replay during sleep. Rasch et al. [101] showed odor cues during slow-wave sleep enhance consolidation — the foundational targeted memory reactivation study. Oudiette and Paller [102] reviewed TMR evidence, and Hu et al. [103] meta-analyzed 91 experiments confirming a reliable effect (Hedges' g = 0.29) specific to NREM sleep. A 2025 review in Nature Reviews Neuroscience [104] synthesized oscillatory, neuromodulatory, and synaptic remodeling mechanisms into a unified consolidation framework.
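To give a feel for what an effect of Hedges' g = 0.29 means, here is a sketch of how the statistic is computed for two independent groups. The recall scores below are invented for illustration and are not taken from any TMR experiment. Many TMR studies use within-subject designs, which call for a different variance term than the one assumed here.

```python
import math


def hedges_g(mean_1, mean_2, sd_1, sd_2, n_1, n_2):
    """Standardized mean difference with the small-sample correction."""
    pooled_sd = math.sqrt(
        ((n_1 - 1) * sd_1 ** 2 + (n_2 - 1) * sd_2 ** 2) / (n_1 + n_2 - 2)
    )
    cohens_d = (mean_1 - mean_2) / pooled_sd
    correction = 1.0 - 3.0 / (4.0 * (n_1 + n_2) - 9.0)
    return cohens_d * correction


# Hypothetical data: cued items recalled 12.9 on average, uncued 12.0,
# both with a standard deviation of 3.0 and 20 participants per group.
g = hedges_g(12.9, 12.0, 3.0, 3.0, 20, 20)
print(round(g, 3))
```

In this made-up case a difference of less than one item, against a spread of three items, yields a g of roughly 0.29. That is a small but dependable advantage rather than a dramatic one.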

Where Are Memories Actually Stored?

Karl Lashley spent decades searching for the engram. Josselyn, Köhler, and Frankland [105] reviewed the modern search. Liu, Ramirez, and Tonegawa [106] achieved the first optogenetic engram reactivation. Ramirez et al. [107] created artificial false memories. Han et al. [108] showed CREB overexpression makes neurons 3 times more likely to join an engram. Tonegawa et al. [109] declared engram cells have come of age. Josselyn and Tonegawa [110] published the definitive review on memory engrams.

Tomé et al. [111] showed engram composition changes within hours of learning. Cai et al. [112] demonstrated distinct memories encoded close in time share overlapping ensembles. Ryan et al. [113] showed engram cells retain memory even under retrograde amnesia — the trace exists but cannot be naturally accessed.

It is worth noting a significant translational limitation. Optogenetic engram manipulation requires genetic modification of neurons to express light-sensitive opsins, cell-type-specific promoters requiring transgenic approaches, and implanted fiber-optic devices — all infeasible in humans. Willems and Henke [114] used 7T MRI to image human engram-like representations non-invasively, and Lüscher et al. [115] published a 2025 roadmap for translating optogenetics toward human applications. But the gap between mouse engram experiments and human memory remains substantial.

Eichenbaum [116] described the cortical-hippocampal system for declarative memory. Lambon Ralph et al. [117] described the hub-and-spoke architecture for semantic memory. For long-term storage, Day and Sweatt [118] and Miller and Sweatt [119] established that DNA methylation regulates memory formation. Alexander et al. [120] showed perineuronal nets in different brain regions support different memory types.

How Do You Retrieve a Memory?

Retrieval is not playback but active reconstruction. Schacter and Addis [121] proposed the constructive episodic simulation hypothesis — the same machinery used for remembering is used for imagining the future. Tulving and Thomson [122] established encoding specificity. Yonelinas [123] distinguished hippocampal recollection from perirhinal familiarity. Rugg and Vilberg [124] reviewed retrieval brain networks.

Nader, Schafe, and LeDoux [125] overturned the assumption that consolidated memories are permanently stable — reactivation returns memories to a labile state (reconsolidation). Schwabe et al. [126] showed post-retrieval stress disrupts hippocampal reinstatement.

The testing effect is one of the most replicable findings in learning science. Roediger and Karpicke [127] demonstrated tested groups recall significantly more than restudied groups. Karpicke and Roediger [128] showed repeated retrieval is critical. Karpicke and Blunt [129] demonstrated retrieval practice produces more learning than concept mapping. Rowland [130] meta-analyzed hundreds of studies. Carpenter [131] showed testing enhances transfer. Chan et al. [132] found the testing effect magnitude is independent of retrieval performance — the attempt, not success, matters. Wimber et al. [133] showed retrieval induces adaptive forgetting through cortical pattern suppression.

But why does testing work? Four competing accounts exist. Bjork and Bjork [134] proposed the New Theory of Disuse — distinguishing storage strength from retrieval strength, with effortful retrieval producing the largest gains. Karpicke [135] reviewed elaborative retrieval evidence. Pyc and Rawson [136] proposed the mediator effectiveness hypothesis. And transfer-appropriate processing explains format-matching effects. Roediger and Butler [137] concluded no single explanation accounts for all findings — current evidence favors a multi-mechanism account.

Why Do We Forget? And Is Forgetting Actually Useful?

Forgetting is not passive decay but an active, adaptive process. Ebbinghaus's 1885 forgetting curve was replicated by Murre and Dros [1]. Wixted [138] provided a neuroscience perspective.
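The forgetting curve can be sketched with a simple exponential, R(t) = exp(-t / S), where S is a stability parameter. This is only one of several functional forms that have been fitted to forgetting data, so treat it as an illustration rather than as Ebbinghaus's own equation. The example assumes 40 percent retention after 24 hours, which sits inside the 50 to 70 percent loss range cited in the introduction.

```python
import math


def retention(hours, stability_hours):
    """Fraction retained after a delay, under an assumed exponential decay."""
    return math.exp(-hours / stability_hours)


def stability_from_retention(hours, retained_fraction):
    """Solve exp(-hours / S) = retained_fraction for S."""
    return -hours / math.log(retained_fraction)


# Assumption: 40 percent retained (60 percent lost) after one day.
s = stability_from_retention(24.0, 0.4)

for t in (1, 24, 48, 168):
    print(t, round(retention(t, s), 3))
```

Under this assumed model, retention after two days is the square of retention after one day, so material that is never revisited drops to about 16 percent by the 48-hour mark.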

Rac1 is a central molecular effector of active forgetting [139]. Berry et al. [140] showed dopamine is required for both learning and forgetting. Awasthi et al. [141] identified synaptotagmin-3 as the molecular sensor for forgetting — mice lacking it showed complete absence of natural memory decay. Akers et al. [142] showed hippocampal neurogenesis promotes forgetting by integrating new neurons into existing circuits. Richards and Frankland [143] proposed that memory persistence and transience are both adaptive — forgetting generalizes experience to promote flexible decision-making.

Schacter [144] established that memory's apparent flaws are byproducts of adaptive features. Anderson, Bjork, and Bjork [145] discovered retrieval-induced forgetting, for which the cortical pattern suppression reported by Wimber et al. [133] provides a neural mechanism. Anderson and Green [146] demonstrated motivated suppression.

Why Does Spacing Your Study Sessions Work So Well?

The spacing effect is one of the most replicated results in learning science. Cepeda et al. [147] synthesized 254 studies confirming it. Dunlosky et al. [148] rated distributed practice and practice testing as the two most effective learning techniques. Kukushkin et al. (2024) found the spacing effect exists even in non-neural human cells — CREB-dependent responses were stronger with spaced stimulation. At the systems level, Zou et al. (2025) showed spaced learning increases ventromedial prefrontal cortex representational similarity.

Kornell and Bjork [149] demonstrated interleaving improves inductive learning. Bjork and Bjork [134] articulated the desirable difficulties framework — conditions that make learning feel harder produce stronger long-term retention. Roediger and Butler [137] synthesized the evidence for retrieval practice. The subjective feeling of fluency during study is a poor predictor of actual learning. If it feels too easy, your hippocampus is probably not working hard enough.
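The desirable difficulties idea can be illustrated with a toy simulation. The model below is an assumption made for this sketch, not a published model. Memory decays exponentially, and each review boosts stability in proportion to how much had been forgotten at the moment of review. All parameter values are hypothetical.

```python
import math


def final_retention(review_gap_hours, n_reviews=3, test_delay_hours=168.0,
                    initial_stability=24.0, gain=2.0):
    """Retention at a delayed test after n reviews separated by a fixed gap."""
    stability = initial_stability
    for _ in range(n_reviews):
        recall_prob = math.exp(-review_gap_hours / stability)
        # Assumed rule: harder retrievals (lower recall_prob) strengthen more.
        stability *= 1.0 + gain * (1.0 - recall_prob)
    return math.exp(-test_delay_hours / stability)


massed = final_retention(1.0)    # three reviews, one hour apart
spaced = final_retention(24.0)   # three reviews, one day apart
print(round(massed, 3), round(spaced, 3))
```

In this toy model the massed reviews feel easy, because recall probability at each review is high, yet they leave far less retained a week later than the spaced reviews do. The size of the gap depends entirely on the assumed parameters.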

How Do Emotions and Motivation Shape What You Remember?

McGaugh [150] published the definitive review on amygdala modulation of consolidation through noradrenergic mechanisms. Phelps [151] reviewed amygdala-hippocampus interactions. Dolcos, LaBar, and Cabeza [152] showed greater amygdala-hippocampal interaction predicts better emotional memory. LaBar and Cabeza [153] provided the cognitive neuroscience framework for emotional memory.

Roozendaal, McEwen, and Chattarji [154] reviewed stress effects on memory. Joëls, Fernandez, and Roozendaal [155] showed timing is critical — stress enhances encoding but impairs retrieval.

Gruber, Gelman, and Ranganath [156] showed curiosity activates the VTA-hippocampal dopaminergic loop, enhancing encoding for targeted and unrelated material. Adcock et al. [157] showed reward-motivated mesolimbic activation precedes memory formation. Schultz, Dayan, and Montague [158] established that dopamine neurons encode reward prediction errors.
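A reward prediction error is the difference between the reward you got and the reward you expected. The sketch below uses the standard temporal-difference form of that error in a single-cue setting. The learning rate, discount factor, and trial count are assumptions, and the code models the computation only, not dopamine neurons themselves.

```python
def prediction_error(reward, value_next, value_current, discount=0.9):
    """Temporal-difference error: received plus expected future, minus predicted."""
    return reward + discount * value_next - value_current


def learn_cue_value(n_trials, reward=1.0, learning_rate=0.2):
    """Repeatedly pair a cue with a reward and track the error on each trial."""
    value = 0.0
    errors = []
    for _ in range(n_trials):
        delta = prediction_error(reward, 0.0, value)  # trial ends after the reward
        errors.append(delta)
        value += learning_rate * delta
    return value, errors


value, errors = learn_cue_value(30)
omitted = prediction_error(0.0, 0.0, value)  # expected reward fails to arrive
print(round(errors[0], 3), round(errors[-1], 3), round(omitted, 3))
```

The error is large on the first trial, shrinks toward zero as the reward becomes predicted, and turns negative when an expected reward is withheld.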

Can the Adult Brain Still Change?

Pascual-Leone et al. [159] reviewed evidence for lifelong cortical plasticity. Draganski et al. [160] showed juggling training produces measurable gray matter increases. The adult neurogenesis debate has been among neuroscience's most contentious. Boldrini et al. [161] reported persistence throughout aging. Sorrells et al. [162] found sharp decline after childhood.

Why opposite results? The discrepancy traces to five methodological differences. Postmortem interval: DCX protein degrades rapidly, and Sorrells used tissue with PMIs up to 48 hours versus Boldrini's 26-hour limit. Tissue fixation: overfixation destroys DCX immunoreactivity. Antibody specificity: the antibodies used to label immature neurons raise specificity concerns. Sample characteristics: Sorrells included patients with chronic epilepsy, a condition known to alter neurogenesis. Quantification methods: Boldrini used stereological 3D counting while Sorrells counted from selected sections. Kempermann et al. [163] published a consensus paper arguing multiple independent evidence lines support persistent neurogenesis. Dumitru et al. [164] provided the strongest evidence in 2025 using single-nucleus RNA sequencing. Zhou et al. [165] found human-specific gene expression but convergent biological processes across species.

Maguire et al. [166] showed London taxi drivers have larger posterior hippocampi. Hensch [167] described molecular mechanisms of critical period plasticity. Pizzorusso et al. [168] demonstrated dissolving perineuronal nets restores adult visual cortex plasticity. Bavelier et al. [169] reviewed removing brakes on adult plasticity. McKenzie et al. [170] showed motor learning requires active central myelination. Scholz et al. [171] showed juggling training changes white matter architecture.

Turrigiano et al. [172] discovered homeostatic synaptic scaling — a negative-feedback mechanism that maintains firing rates. Turrigiano [173] reviewed the self-tuning neuron. Takesian and Hensch [174] reviewed plasticity/stability balance across development. Larsen et al. [175] proposed that critical periods cascade along a sensorimotor-to-association axis.
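Homeostatic scaling is a negative-feedback loop, and a short sketch makes the logic visible. Every synapse on a neuron is multiplied by the same factor, which pushes the firing rate toward a set point while preserving the relative strengths that learning established. The linear rate model, the scaling rate, and all numbers are assumptions for illustration.

```python
def scale_synapses(weights, firing_rate, target_rate, scaling_rate=0.1):
    """Multiply all weights by one factor that nudges the rate toward the target."""
    factor = 1.0 + scaling_rate * (target_rate - firing_rate) / target_rate
    return [w * factor for w in weights]


def firing_rate(weights, inputs):
    """Assumed linear neuron: output rate is the weighted sum of input rates."""
    return sum(w * x for w, x in zip(weights, inputs))


weights = [0.2, 0.4, 0.8]
inputs = [10.0, 10.0, 10.0]
target = 5.0  # hypothetical set-point firing rate

for _ in range(200):
    weights = scale_synapses(weights, firing_rate(weights, inputs), target)

final_rate = firing_rate(weights, inputs)
print(round(final_rate, 3), [round(w, 3) for w in weights])
```

The neuron starts out firing well above its set point and settles at the target, while the second synapse stays exactly twice as strong as the first. That preserved ratio is the key property of multiplicative scaling.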

What Makes Some People Learn Better Than Others?

Genetics modulate learning capacity. Egan et al. [176] showed the BDNF Val66Met polymorphism affects activity-dependent secretion. Chen et al. [177] demonstrated its effects on anxiety and hippocampal function. Papassotiropoulos et al. [178] linked a KIBRA allele to episodic memory. Egan et al. [179] showed COMT Val158Met modulates prefrontal dopamine. Caselli et al. [180] demonstrated APOE epsilon-4 effects on age-related memory decline. Savage et al. [181] and Davies et al. [182] identified genetic loci influencing cognitive function through massive GWAS. Lee et al. [183] found that polygenic scores for educational attainment are meaningful but far from deterministic.

Age changes the equation. Gogtay et al. [184] used dynamic cortical mapping (N=13, ages 4–21, longitudinal MRI) to show that prefrontal cortex is among the last cortical regions to mature, a finding consistent with development continuing into the mid-twenties. Raz et al. [185] documented hippocampal volume decline with age. Stern [186] formalized the cognitive reserve concept. Park and Reuter-Lorenz [187] proposed the neurocognitive scaffolding theory of aging. Hedden and Gabrieli [188] reviewed aging effects: episodic memory and processing speed decline while vocabulary remains stable.

Sleep quality is critical. Meta-analytic evidence [189] shows sleep deprivation before learning produces large impairments (effect size 0.64). Havekes et al. [190] demonstrated that just 5 hours of sleep deprivation causes dendritic spine loss in CA1 through the cAMP-PDE4A5 pathway. Walker and Stickgold [191] reviewed sleep-plasticity interactions.

Exercise powerfully enhances learning. Erickson et al. [192] showed 12 months of aerobic exercise increased hippocampal volume by 2 percent. Hillman, Erickson, and Kramer [193] established exercise benefits across the lifespan. Szuhany et al. [194] meta-analyzed exercise effects on BDNF. Wrann et al. [195] identified the irisin/FNDC5 pathway through which exercise promotes hippocampal neurogenesis. Voss et al. [196] showed exercise improves white matter integrity. Chaddock et al. [197] demonstrated aerobic fitness predicts hippocampal volume in children.

Nutrition affects molecular learning machinery directly. Dyall [198] reviewed omega-3 effects on brain function. Cryan et al. [199] published the definitive review on the microbiota-gut-brain axis. Valls-Pedret et al. [200] showed in a randomized clinical trial that Mediterranean diet supplementation improves cognitive function. Gomez-Pinilla [201] reviewed brain foods in Nature Reviews Neuroscience. Kolb and Gibb [202] reviewed brain plasticity and behavior across development.

CONCLUSION

The journey of information through your brain — from photon hitting retina to memory retrieved years later — is one of biology's most extraordinary stories. Light becomes electrical signal becomes thalamic relay becomes cortical representation becomes hippocampal binding becomes synaptic strengthening becomes protein synthesis becomes engram cell becomes cortical memory trace becomes reconstructed retrieval. At every stage, the process is active, selective, and modifiable.

Several integrating principles emerge. Learning is fundamentally active. The brain preferentially stores information that it attends to, processes deeply, and retrieves effortfully. Forgetting is not failure but function — active molecular mechanisms clear irrelevant traces to maintain efficient memory search. Consolidation is not a single event but ongoing reorganization, with sleep providing the privileged state for hippocampal-cortical dialogue. And the molecular and systems levels are inextricable — the same CREB signaling that drives synaptic consolidation governs engram allocation; the same theta oscillations that organize encoding orchestrate sleep consolidation.

The 2024 to 2025 period has been remarkable. It brought the demonstration that human infants encode hippocampal memories by age one [56]; the discovery that myelination actively restricts plasticity; evidence that the spacing effect operates at fundamental cellular signaling levels; new challenges to predictive coding orthodoxy [24]; confirmation of adult hippocampal neurogenesis through single-nucleus transcriptomics [164]; and cross-species analysis revealing human-specific gene expression in neurogenesis [165]. These findings collectively demand revision of textbook accounts.

Frequently Asked Questions

Is the brain a muscle that gets stronger with use?

The brain is not a muscle — it is neural tissue made of neurons and glial cells. But the analogy has some truth. Repeated mental effort strengthens synaptic connections through long-term potentiation and structural spine remodeling, a process called neuroplasticity. Regular cognitive engagement builds stronger and more efficient neural networks over time.

Why do we forget things we just learned?

Forgetting is partly an active process driven by molecular mechanisms like Rac1-dependent actin remodeling and AMPA receptor removal from synapses. Without reinforcement, newly encoded synaptic changes are gradually reversed. Spaced repetition and retrieval practice counteract this by re-strengthening traces at optimal intervals before they decay.

Does sleeping after studying actually help memory?

Yes. During non-rapid eye movement sleep, the brain replays newly encoded memories through coordinated slow oscillations, sleep spindles, and hippocampal sharp-wave ripples. This triple coupling drives transfer of memories from the hippocampus to the neocortex for long-term storage. Sleep deprivation significantly impairs both encoding and consolidation.

Can adults learn as effectively as children?

Adults retain significant neuroplasticity but face biological constraints — including myelination, perineuronal nets, and mature excitatory-inhibitory balance — that collectively restrict large-scale cortical reorganization. Adults compensate with stronger executive function, prior knowledge scaffolding, and strategic study techniques. Learning remains possible throughout life but optimal strategies differ.

Why does testing yourself work better than rereading?

Retrieval practice forces the brain to reconstruct memory traces from partial cues, strengthening hippocampal pattern-completion circuits and enhancing the distinctiveness of neural representations. Each retrieval attempt engages anterior hippocampus and prefrontal cortex, producing lasting synaptic modifications that passive rereading does not trigger.